Chapter 5 Simulation - Using Derivatives

In this last section on simulated data, we return to the same data as in Chapter 1 (and possibly also in Chapters 2 or 3), that is, functional data generated from functions defined by interpolation polynomials. Because the data in Section 1 were generated with a random vertical shift whose standard deviation is the parameter \(\sigma_{shift}\), we can try to remove this shift and classify the data afterwards. We observed in Section 3 that the accuracy of classical classification methods in particular deteriorates sharply as \(\sigma_{shift}\) increases. In contrast, classification methods that account for the functional nature of the data generally remain quite stable, even as \(\sigma_{shift}\) increases.

One way to remove vertical shifts, which we will use in the following section, is to classify data based on the estimate of the first derivative of the generated and smoothed curve, since it is known that \[ \frac{\text d}{\text d t} \big( x(t) + c \big) = \frac{\text d}{\text d t} x(t)= x'(t). \]
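The identity above can be checked numerically. The following is a minimal base R sketch, not the chapter's method: it uses a toy curve and forward differences instead of derivatives of smoothed functional objects, and the names `t_grid`, `shift`, and `num_deriv` are illustrative assumptions.

```r
# Toy curve on a grid; the function and the shift value are illustrative only
t_grid <- seq(0, 6, length.out = 61)
x <- sin(t_grid) + 0.1 * t_grid^2
shift <- 2.5                      # arbitrary vertical shift c

# Forward-difference approximation of the first derivative
num_deriv <- function(y, t) diff(y) / diff(t)

d_original <- num_deriv(x, t_grid)
d_shifted  <- num_deriv(x + shift, t_grid)

# The two derivative estimates agree up to floating-point rounding
max(abs(d_original - d_shifted))
```

Any constant added to the curve cancels in `diff()`, which is the discrete counterpart of the constant vanishing under differentiation.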

5.1 Classification Based on the First Derivative

First, we will simulate functions that we will subsequently want to classify. For simplicity, we will consider two classification classes.

To simulate, we will:

- Choose appropriate functions,

- Generate points from the chosen interval that contain, for example, Gaussian noise,

- Smooth the obtained discrete points into a functional object using a suitable basis system.

This procedure will yield functional objects along with the value of the categorical variable \(Y\), which distinguishes membership in a classification class.


Let’s consider two classification classes, \(Y \in \{0, 1\}\), with the same number \(n\) of generated functions in each class. First, we define two functions, each corresponding to one class, on the interval \(I = [0, 6]\).

Now, we will create the functions using interpolation polynomials. First, we define the points through which our curve will pass and then fit an interpolation polynomial through these points, which we will use to generate the curves for classification.

Code

# Defining points for class 0

x.0 <- c(0.00, 0.65, 0.94, 1.42, 2.26, 2.84, 3.73, 4.50, 5.43, 6.00)

y.0 <- c(0, 0.25, 0.86, 1.49, 1.1, 0.15, -0.11, -0.36, 0.23, 0)

# Defining points for class 1

x.1 <- c(0.00, 0.51, 0.91, 1.25, 1.51, 2.14, 2.43, 2.96, 3.70, 4.60,

5.25, 5.67, 6.00)

y.1 <- c(0.1, 0.4, 0.71, 1.08, 1.47, 1.39, 0.81, 0.05, -0.1, -0.4,

0.3, 0.37, 0)

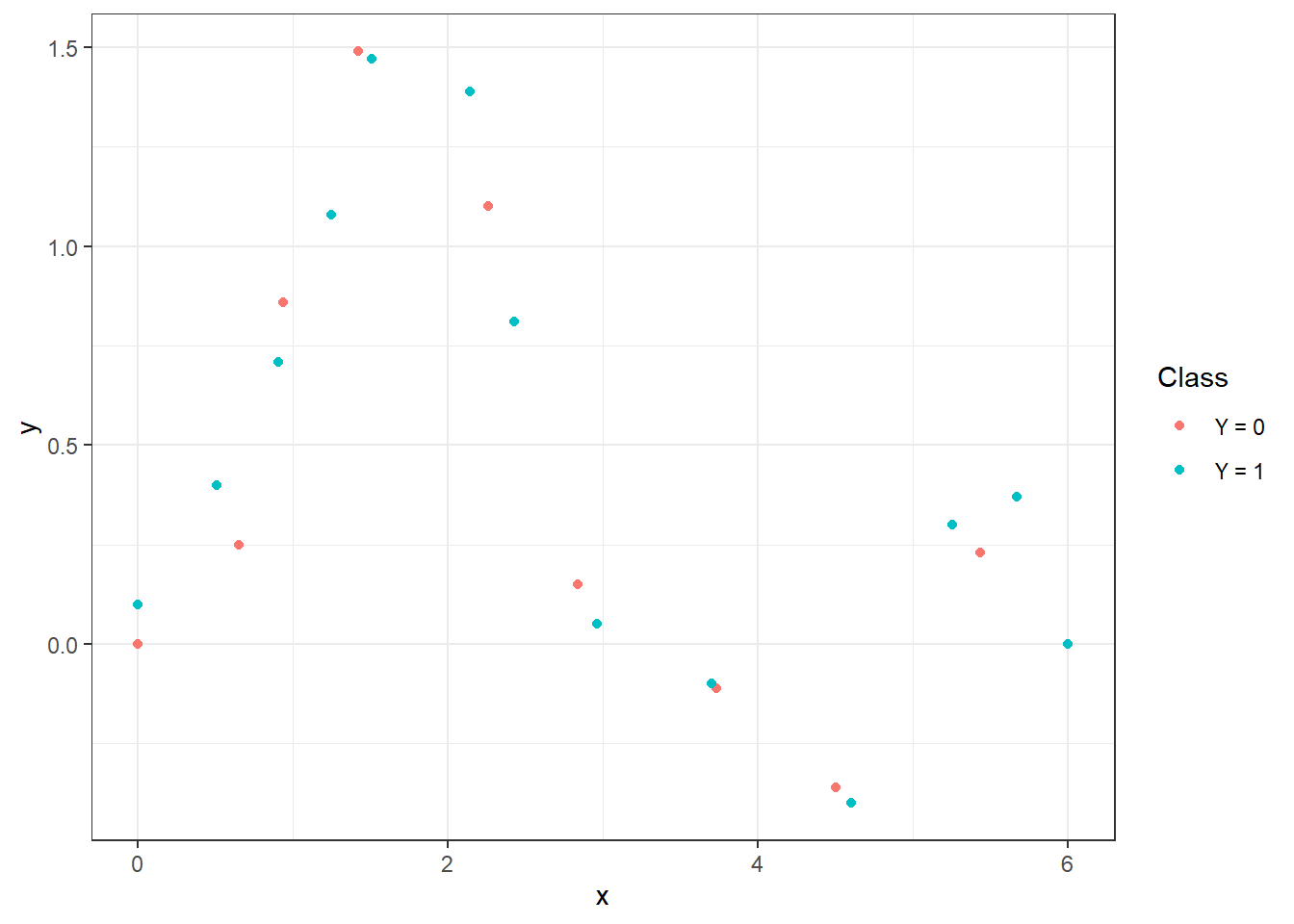

Figure 1.1: Points defining both interpolation polynomials.

To calculate the interpolation polynomials, we will use the poly.calc() function from the polynom library. We will also define the functions poly.0() and poly.1(), which will compute the values of the polynomials at a given point in the interval. We will use the predict() function for this, where we input the corresponding polynomial and the point at which we want to evaluate the polynomial.
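The code for this step is not reproduced here. As a rough, dependency-free stand-in for what `poly.calc()` followed by `predict()` computes, the sketch below evaluates the Lagrange form of the interpolation polynomial directly in base R. The helper `lagrange_eval()` and the name `poly.0.sketch()` are hypothetical and are not part of the polynom package.

```r
# Nodes for class 0, copied from the chapter
x.0 <- c(0.00, 0.65, 0.94, 1.42, 2.26, 2.84, 3.73, 4.50, 5.43, 6.00)
y.0 <- c(0, 0.25, 0.86, 1.49, 1.1, 0.15, -0.11, -0.36, 0.23, 0)

# Hypothetical helper: evaluate the Lagrange interpolation polynomial
# through (x_nodes, y_nodes) at every point of x_new
lagrange_eval <- function(x_new, x_nodes, y_nodes) {
  sapply(x_new, function(x) {
    terms <- sapply(seq_along(x_nodes), function(j) {
      # j-th Lagrange basis polynomial evaluated at x, scaled by y_j
      y_nodes[j] * prod((x - x_nodes[-j]) / (x_nodes[j] - x_nodes[-j]))
    })
    sum(terms)
  })
}

# Stand-in for poly.0(): value of the class 0 polynomial at x
poly.0.sketch <- function(x) lagrange_eval(x, x.0, y.0)

# The polynomial passes through the defining points
max(abs(poly.0.sketch(x.0) - y.0))
```

With the polynom package loaded, the same polynomial would be obtained from the calls named in the text, `poly.calc(x.0, y.0)` for the fit and `predict()` for the evaluation.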


Code

# Plotting the polynomials

xx <- seq(min(x.0), max(x.0), length = 501)

yy.0 <- poly.0(xx)

yy.1 <- poly.1(xx)

dat_poly_plot <- data.frame(x = c(xx, xx),

y = c(yy.0, yy.1),

Class = rep(c('Y = 0', 'Y = 1'),

c(length(xx), length(xx))))

ggplot(dat_points, aes(x = x, y = y, colour = Class)) +

geom_point(size=1.5) +

theme_bw() +

geom_line(data = dat_poly_plot,

aes(x = x, y = y, colour = Class),

linewidth = 0.8) +

labs(colour = 'Class')

![Illustration of two functions on the interval $I = [0, 6]$, from which we generate observations for classes 0 and 1.](05-Simulace_deriv2_files/figure-html/unnamed-chunk-6-1.png)

Figure 1.2: Illustration of two functions on the interval \(I = [0, 6]\), from which we generate observations for classes 0 and 1.

Now, we will create a function that generates random functions with added noise (i.e., points on a predetermined grid) from the chosen generating function. The argument t is the vector of values at which we want to evaluate the functions, fun is the generating function, n is the number of functions, and sigma is the standard deviation \(\sigma\) of the normal distribution \(\text{N}(\mu, \sigma^2)\) with \(\mu = 0\) from which we draw the Gaussian white noise. To demonstrate the advantage of methods that work with functional data, we also add to each simulated observation a random component that shifts the entire function vertically (controlled by the parameter sigma_shift). This shift is drawn from the normal distribution \(\text{N}(0, \sigma_{shift}^2)\) with \(\sigma_{shift}^2 = 4\).

Code

generate_values <- function(t, fun, n, sigma, sigma_shift = 0) {

# Arguments:

# t ... vector of values, where the function will be evaluated

# fun ... generating function of t

# n ... the number of generated functions / objects

# sigma ... standard deviation of normal distribution to add noise to data

# sigma_shift ... parameter of normal distribution for generating shift

# Value:

# X ... matrix of dimension length(t) times n with generated values of one

# function in a column

X <- matrix(rep(t, times = n), ncol = n, nrow = length(t), byrow = FALSE)

noise <- matrix(rnorm(n * length(t), mean = 0, sd = sigma),

ncol = n, nrow = length(t), byrow = FALSE)

shift <- matrix(rep(rnorm(n, 0, sigma_shift), each = length(t)),

ncol = n, nrow = length(t))

return(fun(X) + noise + shift)

}

Now we can generate functions. In each of the two classes, we will consider 100 observations, thus n = 100.
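The generating call itself is not shown here, so the following is only a sketch of how `generate_values()` might be used. The seed, the grid of 51 points, the noise level `sigma = 1`, and the stand-in generating function `toy_fun()` are assumptions; `sigma_shift = 2` corresponds to the variance of 4 stated above.

```r
# Compact copy of generate_values() from the chapter so the sketch runs on its own
generate_values <- function(t, fun, n, sigma, sigma_shift = 0) {
  X <- matrix(rep(t, times = n), ncol = n, nrow = length(t))
  noise <- matrix(rnorm(n * length(t), mean = 0, sd = sigma),
                  ncol = n, nrow = length(t))
  shift <- matrix(rep(rnorm(n, 0, sigma_shift), each = length(t)),
                  ncol = n, nrow = length(t))
  fun(X) + noise + shift
}

set.seed(42)                        # assumed seed, for reproducibility only
n <- 100                            # curves per class, as in the text
t <- seq(0, 6, length.out = 51)     # assumed evaluation grid on I = [0, 6]

# Stand-in generating function; the chapter uses poly.0() and poly.1()
toy_fun <- function(x) sin(x)

X_toy <- generate_values(t, toy_fun, n, sigma = 1, sigma_shift = 2)
dim(X_toy)   # length(t) rows, n columns
```

Each column of the result is one simulated curve: the generating function evaluated on the grid, plus pointwise noise, plus one shift value that is constant within the column.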


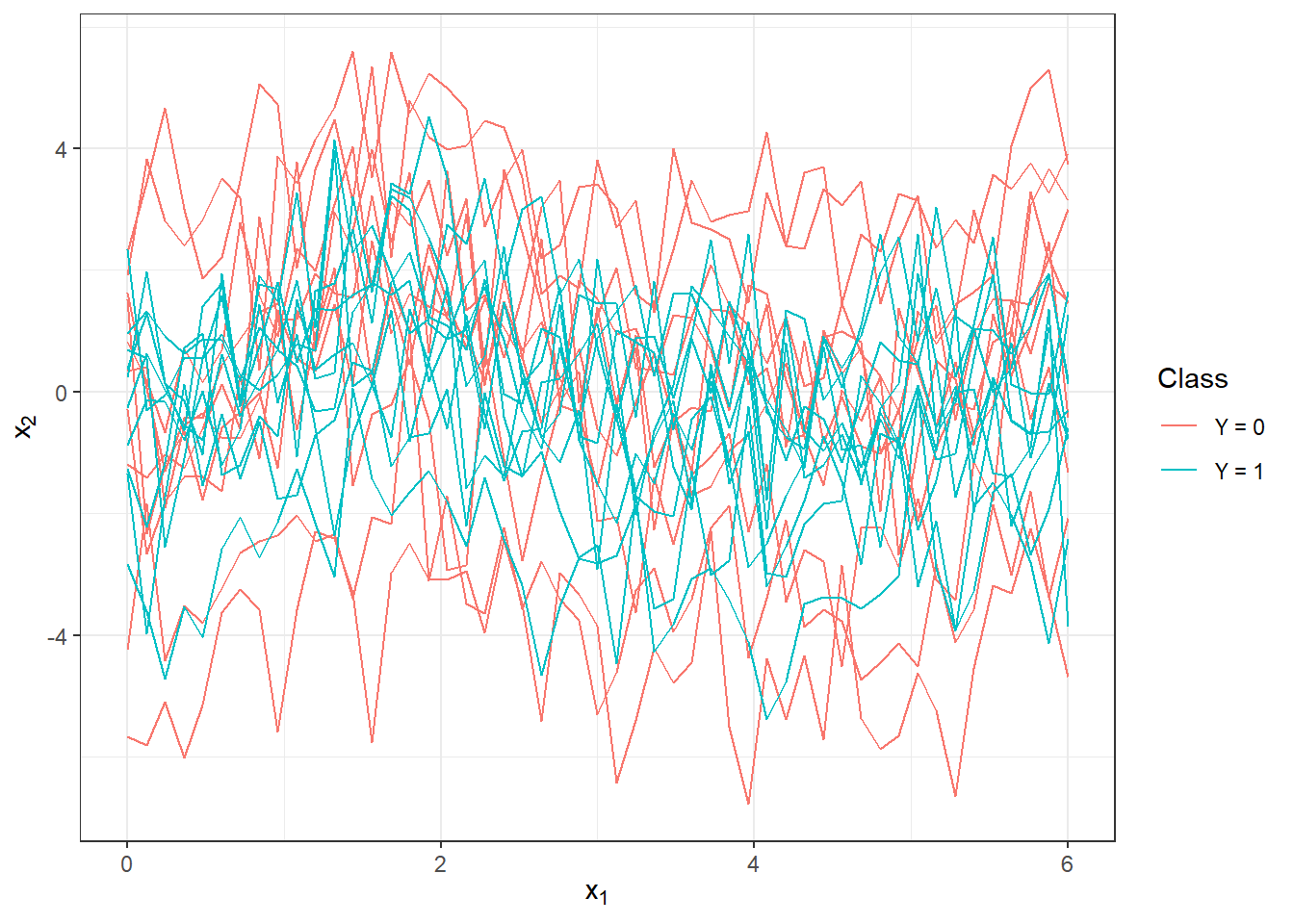

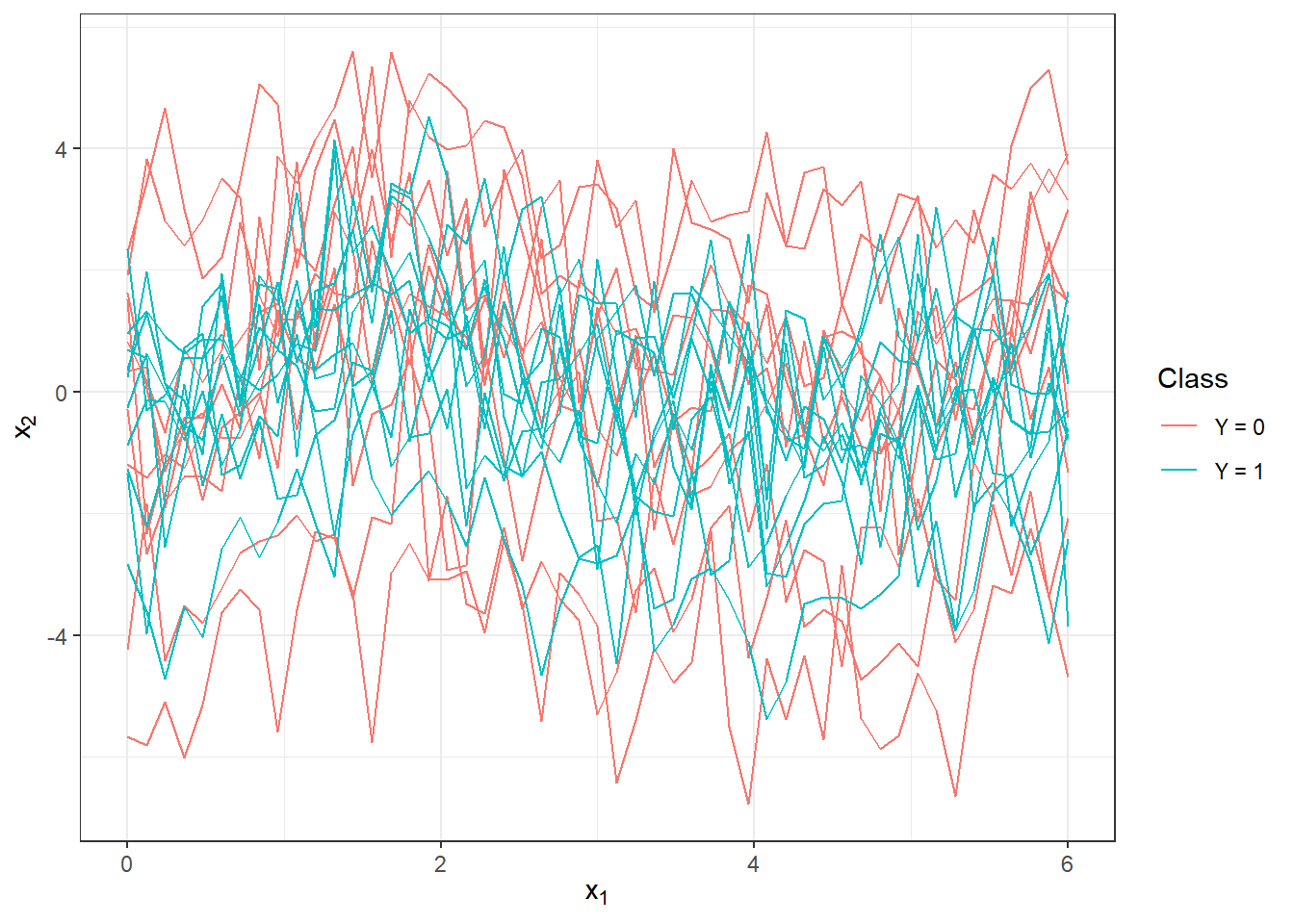

We will plot the generated (not yet smoothed) functions colored by class (only the first 10 observations from each class for clarity).

Code

n_curves_plot <- 10 # number of curves we want to plot from each group

DF0 <- cbind(t, X0[, 1:n_curves_plot]) |>

as.data.frame() |>

reshape(varying = 2:(n_curves_plot + 1), direction = 'long', sep = '') |>

subset(select = -id) |>

mutate(

time = time - 1,

group = 0

)

DF1 <- cbind(t, X1[, 1:n_curves_plot]) |>

as.data.frame() |>

reshape(varying = 2:(n_curves_plot + 1), direction = 'long', sep = '') |>

subset(select = -id) |>

mutate(

time = time - 1,

group = 1

)

DF <- rbind(DF0, DF1) |>

mutate(group = factor(group))

DF |> ggplot(aes(x = t, y = V, group = interaction(time, group),

colour = group)) +

geom_line(linewidth = 0.5) +

theme_bw() +

labs(x = expression(x[1]),

y = expression(x[2]),

colour = 'Class') +

scale_colour_discrete(labels=c('Y = 0', 'Y = 1'))

Figure 2.1: The first 10 generated observations from each of the two classification classes. The observed data are not smoothed.

5.1.1 Smoothing Observed Curves

Now we will convert the observed discrete values (vectors of values) into functional objects that we will subsequently work with. Again, we will use a B-spline basis for smoothing.

We take the entire vector t as knots, and since we consider the first derivative, we choose norder = 5. We will penalize the third derivative of the function, as we now require smooth first derivatives as well.
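Written as a formula, this choice corresponds to the usual penalized least-squares criterion for roughness-penalty smoothing. The notation \(y_{ij}\) for the observed value of the \(i\)-th curve at the grid point \(t_j\) is introduced here for illustration only:

\[ \text{PENSSE}_\lambda(x_i) = \sum_{j} \big( y_{ij} - x_i(t_j) \big)^2 + \lambda \int_I \big( x_i'''(t) \big)^2 \,\text d t. \]

Splines of order 5 are piecewise polynomials of degree 4, so their third derivative exists and is continuous, and the penalty term is well defined. For \(\lambda \to 0\) the fit approaches interpolation of the noisy points, while a large \(\lambda\) forces the third derivative towards zero.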


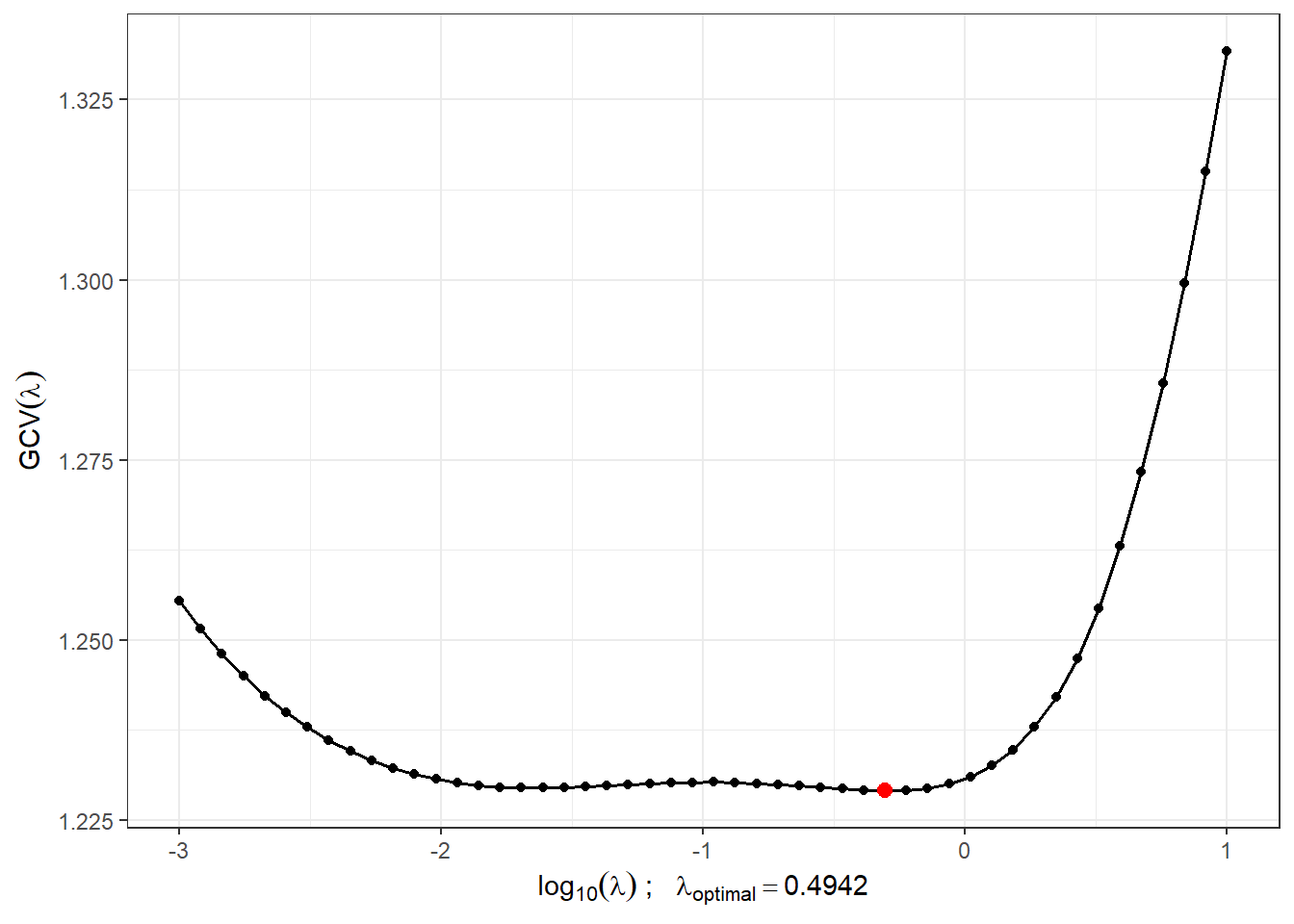

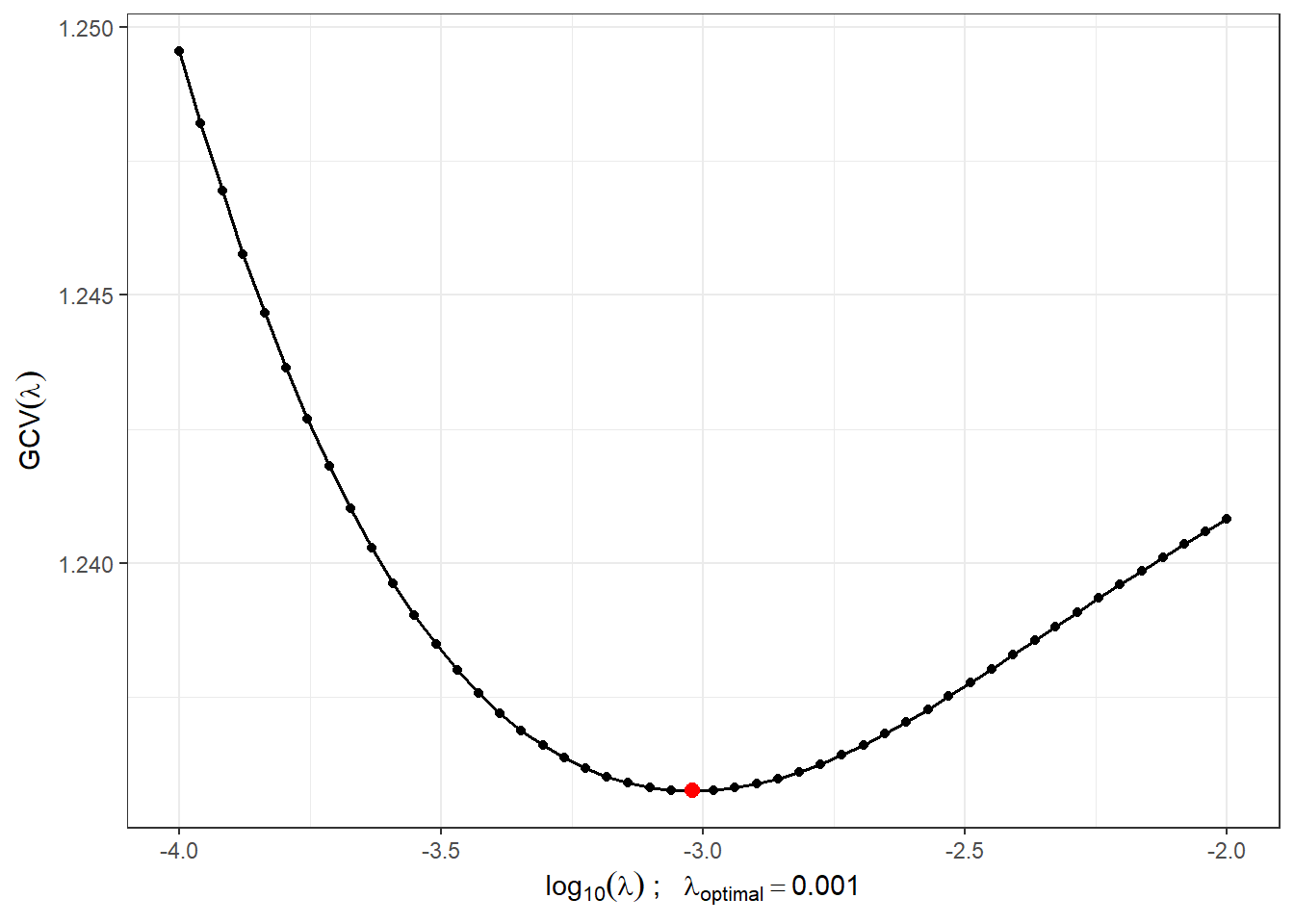

We will find a suitable value of the smoothing parameter \(\lambda > 0\) using \(GCV(\lambda)\), that is, generalized cross-validation. We use the same value of \(\lambda\) for both classification groups, because for test observations we would not know in advance which value of \(\lambda\) to choose if different values were used for each class.
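For reference, the GCV criterion for a single curve is commonly written as follows. The symbols \(m\) (number of grid points), \(SSE(\lambda)\) (residual sum of squares of the smoothed curve), and \(df(\lambda)\) (effective degrees of freedom of the smoother) are notation introduced here:

\[ GCV(\lambda) = \frac{m}{m - df(\lambda)} \cdot \frac{SSE(\lambda)}{m - df(\lambda)}. \]

The criterion penalizes a small residual sum of squares that is bought with many effective degrees of freedom. Because one value is obtained per curve, the code below averages the values over all observed curves before taking the minimum over \(\lambda\).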

Code

# combining observations into one matrix

XX <- cbind(X0, X1)

lambda.vect <- 10^seq(from = -3, to = 1, length.out = 50) # vector of lambdas

gcv <- rep(NA, length = length(lambda.vect)) # empty vector for storing GCV

for(index in 1:length(lambda.vect)) {

curv.Fdpar <- fdPar(bbasis, curv.Lfd, lambda.vect[index])

BSmooth <- smooth.basis(t, XX, curv.Fdpar) # smoothing

gcv[index] <- mean(BSmooth$gcv) # average across all observed curves

}

GCV <- data.frame(

lambda = round(log10(lambda.vect), 3),

GCV = gcv

)

# find the minimum value

lambda.opt <- lambda.vect[which.min(gcv)]

For better illustration, we will plot the progression of \(GCV(\lambda)\).

Code

GCV |> ggplot(aes(x = lambda, y = GCV)) +

geom_line(linetype = 'solid', linewidth = 0.6) +

geom_point(size = 1.5) +

theme_bw() +

labs(x = bquote(paste(log[10](lambda), ' ; ',

lambda[optimal] == .(round(lambda.opt, 4)))),

y = expression(GCV(lambda))) +

annotate('point', x = log10(lambda.opt), y = min(gcv), colour = 'red', size = 2.5)

Figure 1.5: The progression of \(GCV(\lambda)\) for the chosen vector \(\boldsymbol\lambda\). The x-axis values are plotted on a logarithmic scale. The optimal value of the smoothing parameter \(\lambda_{optimal}\) is shown in red.

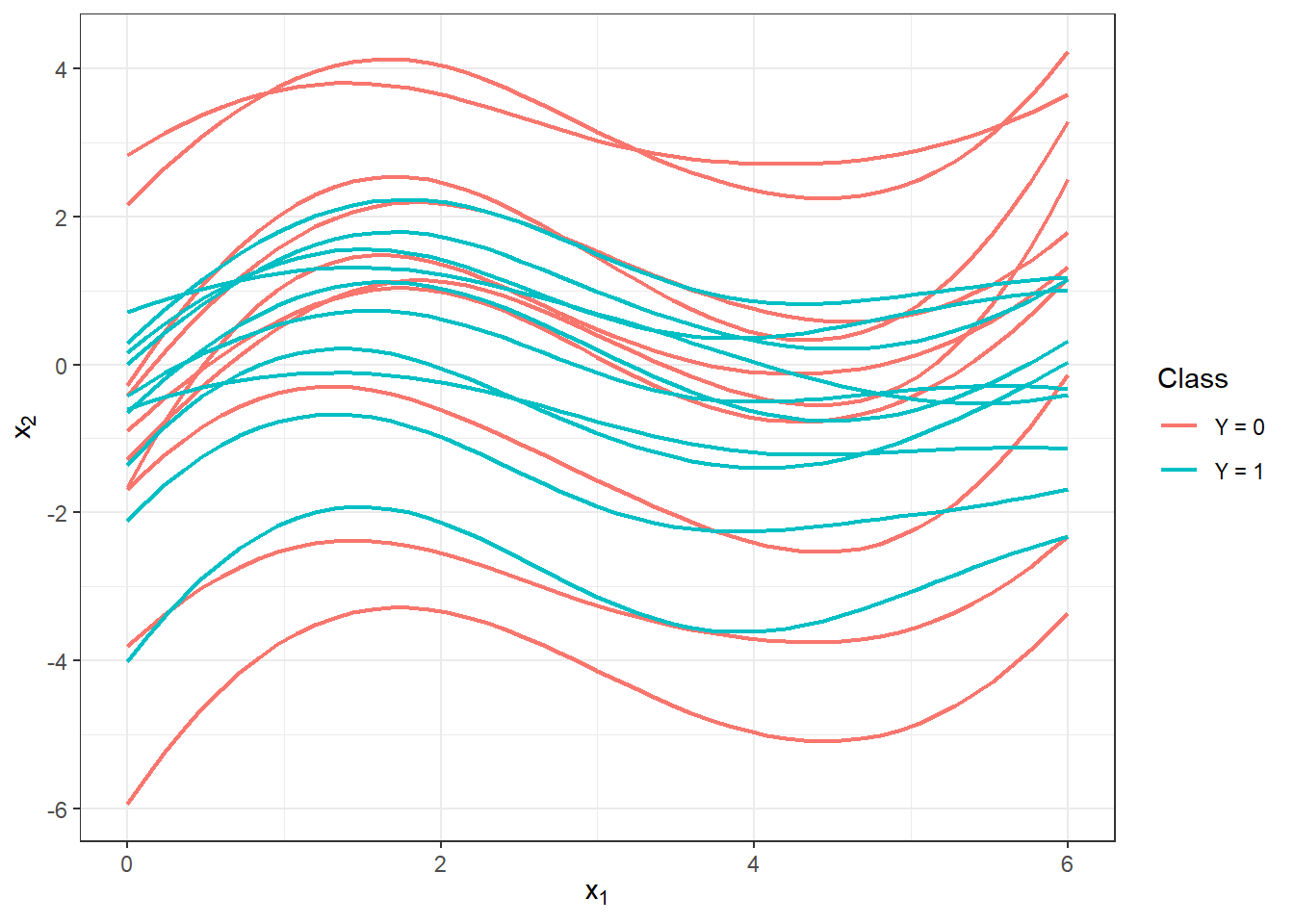

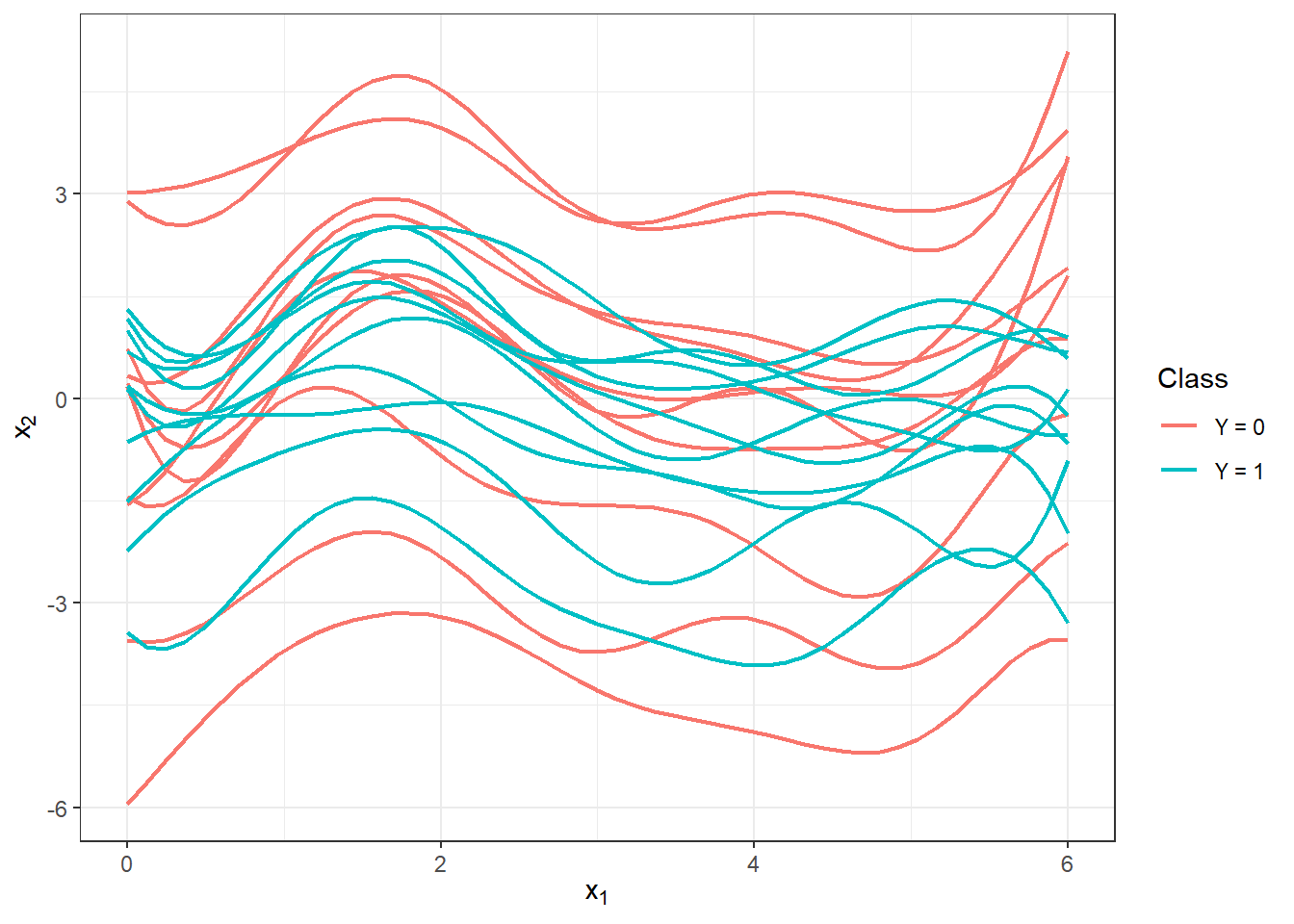

With this optimal choice of the smoothing parameter \(\lambda\), we will now smooth all functions and again graphically represent the first 10 observed curves from each classification class.

Code

curv.fdPar <- fdPar(bbasis, curv.Lfd, lambda.opt)

BSmooth <- smooth.basis(t, XX, curv.fdPar)

XXfd <- BSmooth$fd

fdobjSmootheval <- eval.fd(fdobj = XXfd, evalarg = t)

DF$Vsmooth <- c(fdobjSmootheval[, c(1 : n_curves_plot,

(n + 1) : (n + n_curves_plot))])

DF |> ggplot(aes(x = t, y = Vsmooth, group = interaction(time, group),

colour = group)) +

geom_line(linewidth = 0.75) +

theme_bw() +

labs(x = expression(x[1]),

y = expression(x[2]),

colour = 'Class') +

scale_colour_discrete(labels=c('Y = 0', 'Y = 1'))

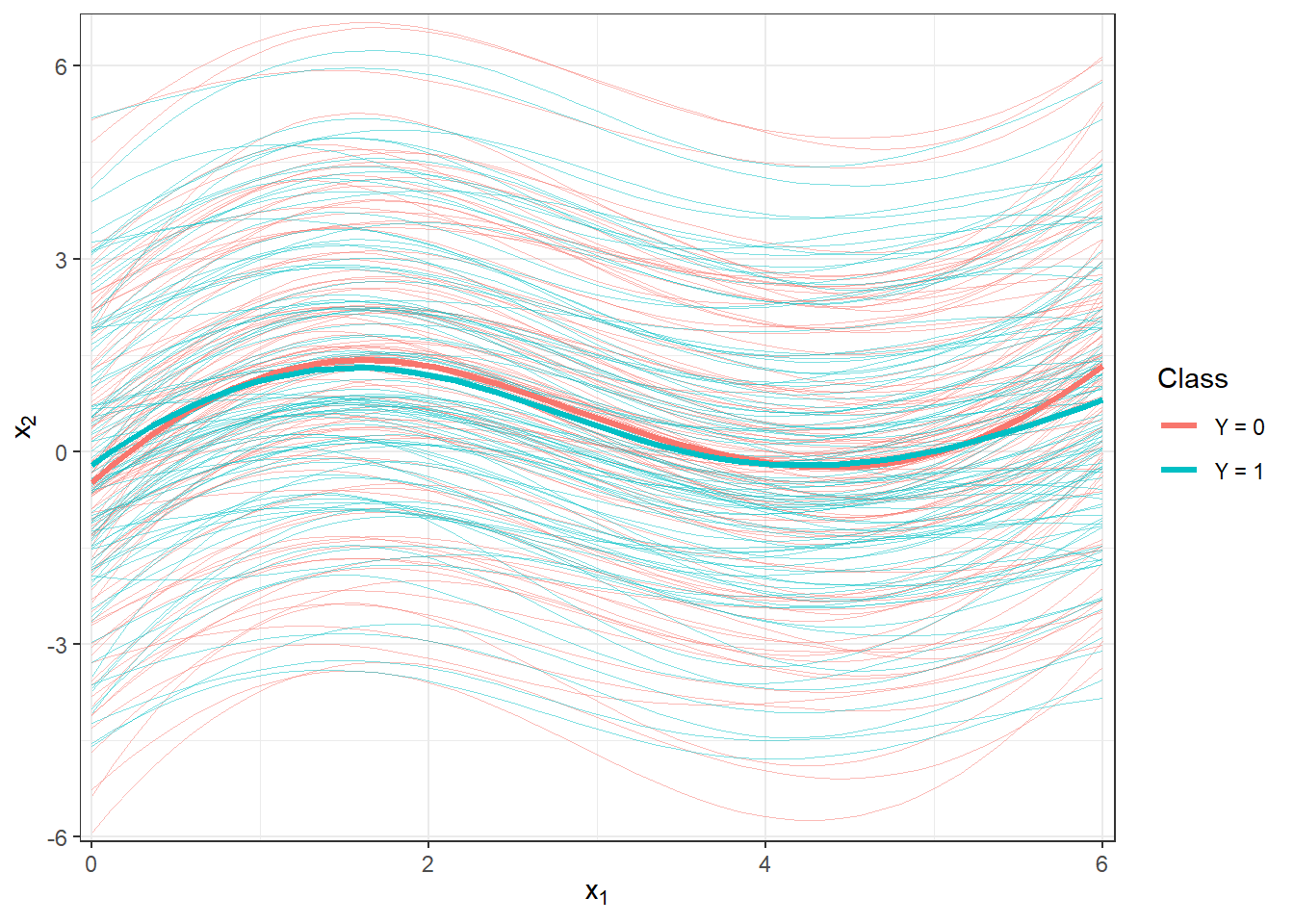

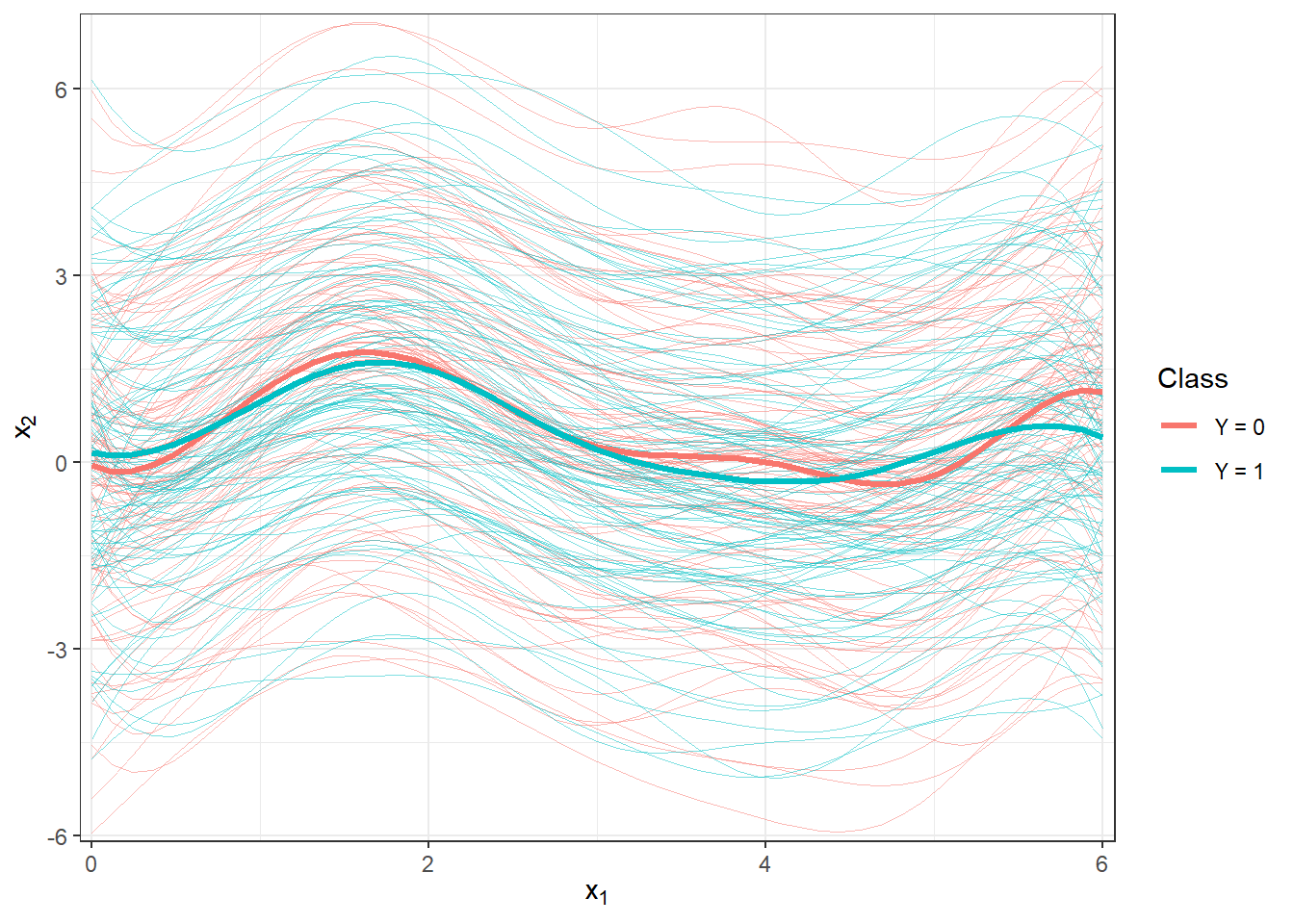

Figure 1.6: The first 10 smoothed curves from each classification class.

Let’s visualize all the smoothed curves along with the mean for each class.

Code

DFsmooth <- data.frame(

t = rep(t, 2 * n),

time = rep(rep(1:n, each = length(t)), 2),

Smooth = c(fdobjSmootheval),

Mean = c(rep(apply(fdobjSmootheval[ , 1 : n], 1, mean), n),

rep(apply(fdobjSmootheval[ , (n + 1) : (2 * n)], 1, mean), n)),

group = factor(rep(c(0, 1), each = n * length(t)))

)

DFmean <- data.frame(

t = rep(t, 2),

Mean = c(apply(fdobjSmootheval[ , 1 : n], 1, mean),

apply(fdobjSmootheval[ , (n + 1) : (2 * n)], 1, mean)),

group = factor(rep(c(0, 1), each = length(t)))

)

DFsmooth |> ggplot(aes(x = t, y = Smooth, group = interaction(time, group),

colour = group)) +

geom_line(linewidth = 0.25, alpha = 0.5) +

theme_bw() +

labs(x = expression(x[1]),

y = expression(x[2]),

colour = 'Class') +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

geom_line(aes(x = t, y = Mean, colour = group),

linewidth = 1.2, linetype = 'solid') +

scale_x_continuous(expand = c(0.01, 0.01)) +

#ylim(c(-1, 2)) +

scale_y_continuous(expand = c(0.01, 0.01)) #, limits = c(-1, 2))

Figure 1.7: Plot of all smoothed observed curves, colored by their classification class. The mean for each class is shown with a thick line.

Code

DFsmooth <- data.frame(

t = rep(t, 2 * n),

time = rep(rep(1:n, each = length(t)), 2),

Smooth = c(fdobjSmootheval),

Mean = c(rep(apply(fdobjSmootheval[ , 1 : n], 1, mean), n),

rep(apply(fdobjSmootheval[ , (n + 1) : (2 * n)], 1, mean), n)),

group = factor(rep(c(0, 1), each = n * length(t)))

)

DFmean <- data.frame(

t = rep(t, 2),

Mean = c(apply(fdobjSmootheval[ , 1 : n], 1, mean),

apply(fdobjSmootheval[ , (n + 1) : (2 * n)], 1, mean)),

group = factor(rep(c(0, 1), each = length(t)))

)

DFsmooth |> ggplot(aes(x = t, y = Smooth, group = interaction(time, group),

colour = group)) +

geom_line(linewidth = 0.25, alpha = 0.5) +

theme_bw() +

labs(x = expression(x[1]),

y = expression(x[2]),

colour = 'Class') +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

geom_line(aes(x = t, y = Mean, colour = group),

linewidth = 1.2, linetype = 'solid') +

scale_x_continuous(expand = c(0.01, 0.01)) +

#ylim(c(-1, 2)) +

scale_y_continuous(expand = c(0.01, 0.01), limits = c(-1, 2))

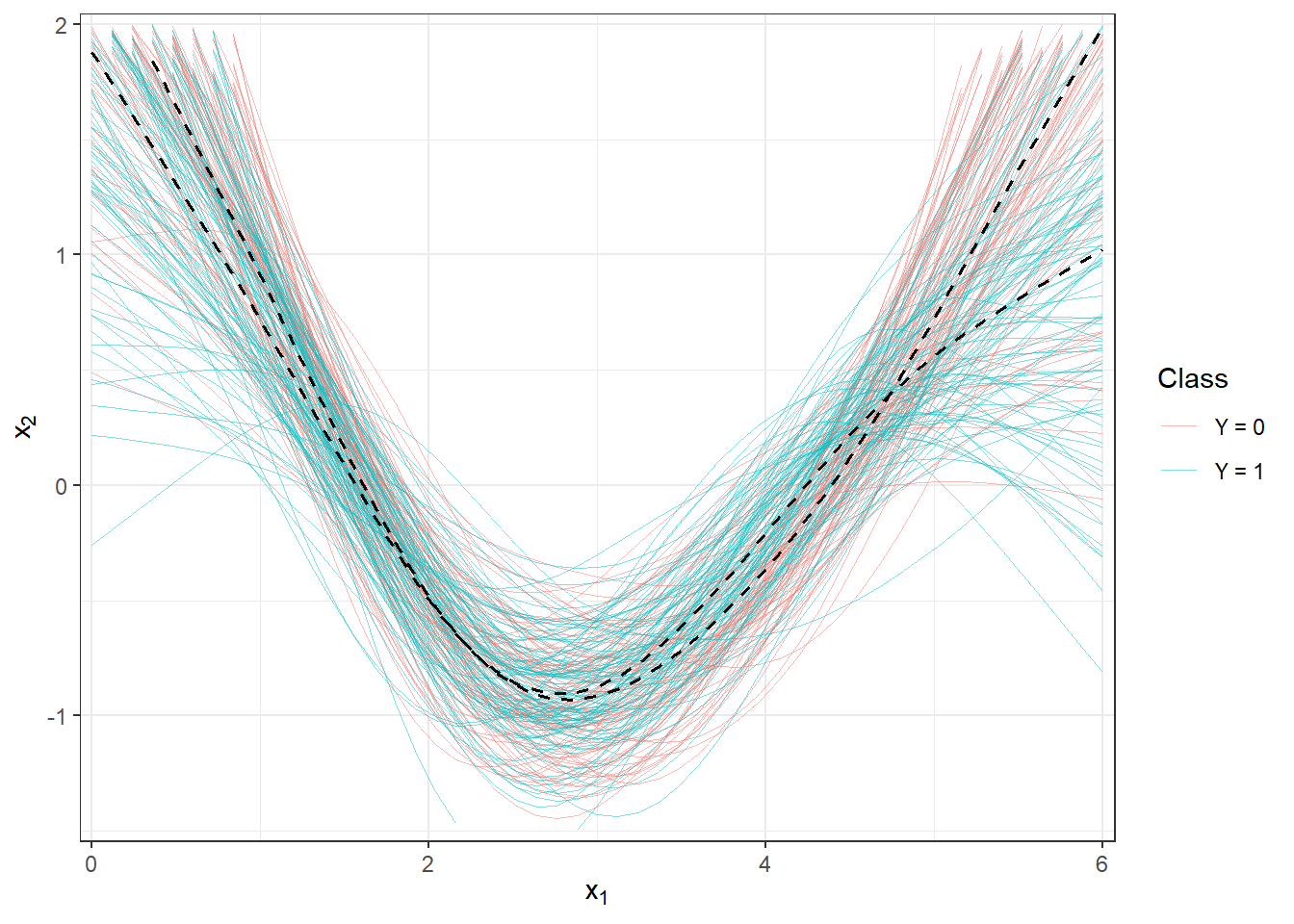

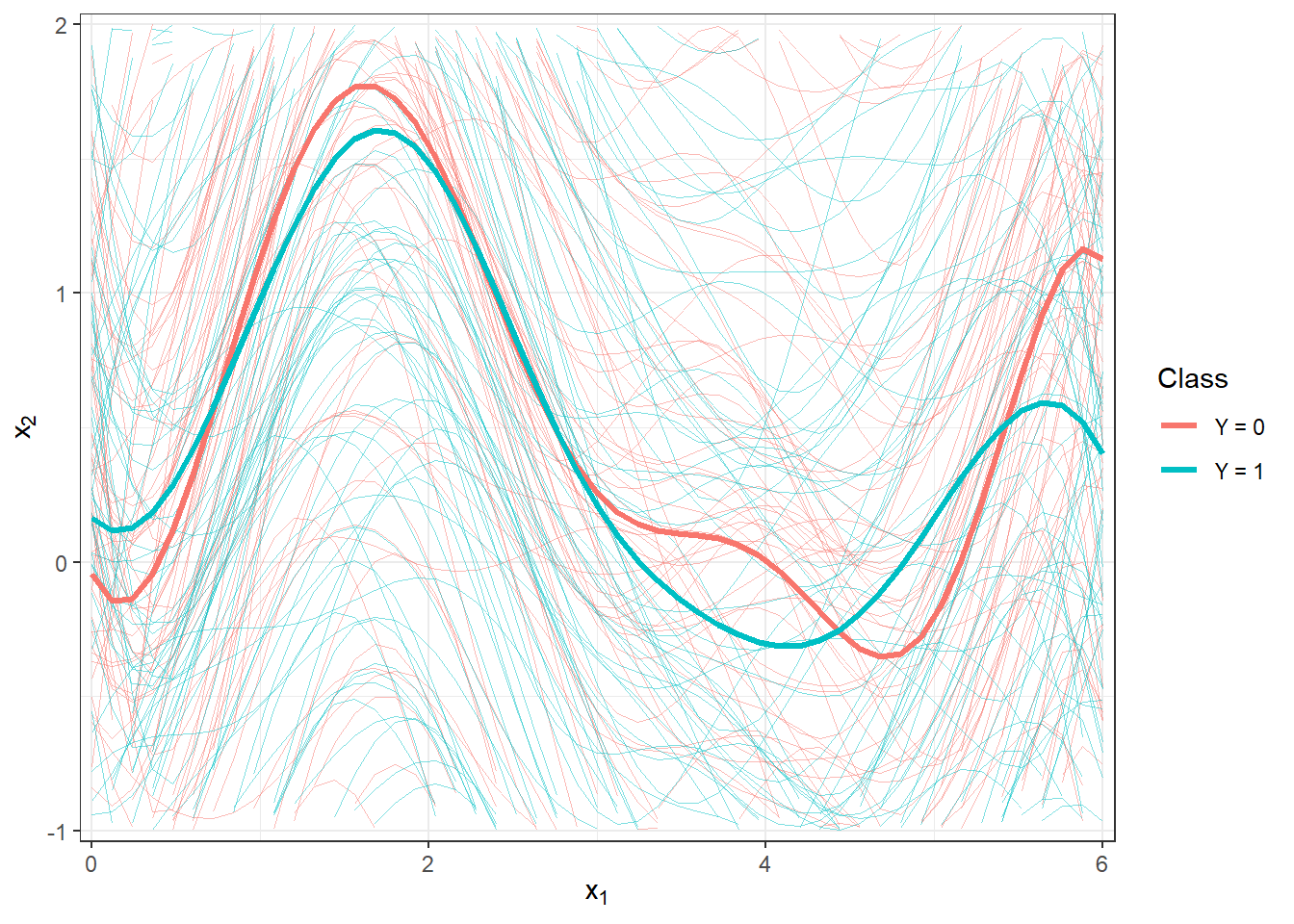

Figure 2.2: Plot of all smoothed observed curves, colored by their classification class. The mean for each class is shown with a thick line. Zoomed view.

5.1.2 Calculation of Derivatives

To compute the derivative for the functional object, we will use the deriv.fd() function from the fda package in R. Since we want to classify based on the first derivative, we choose the argument Lfdobj = 1.

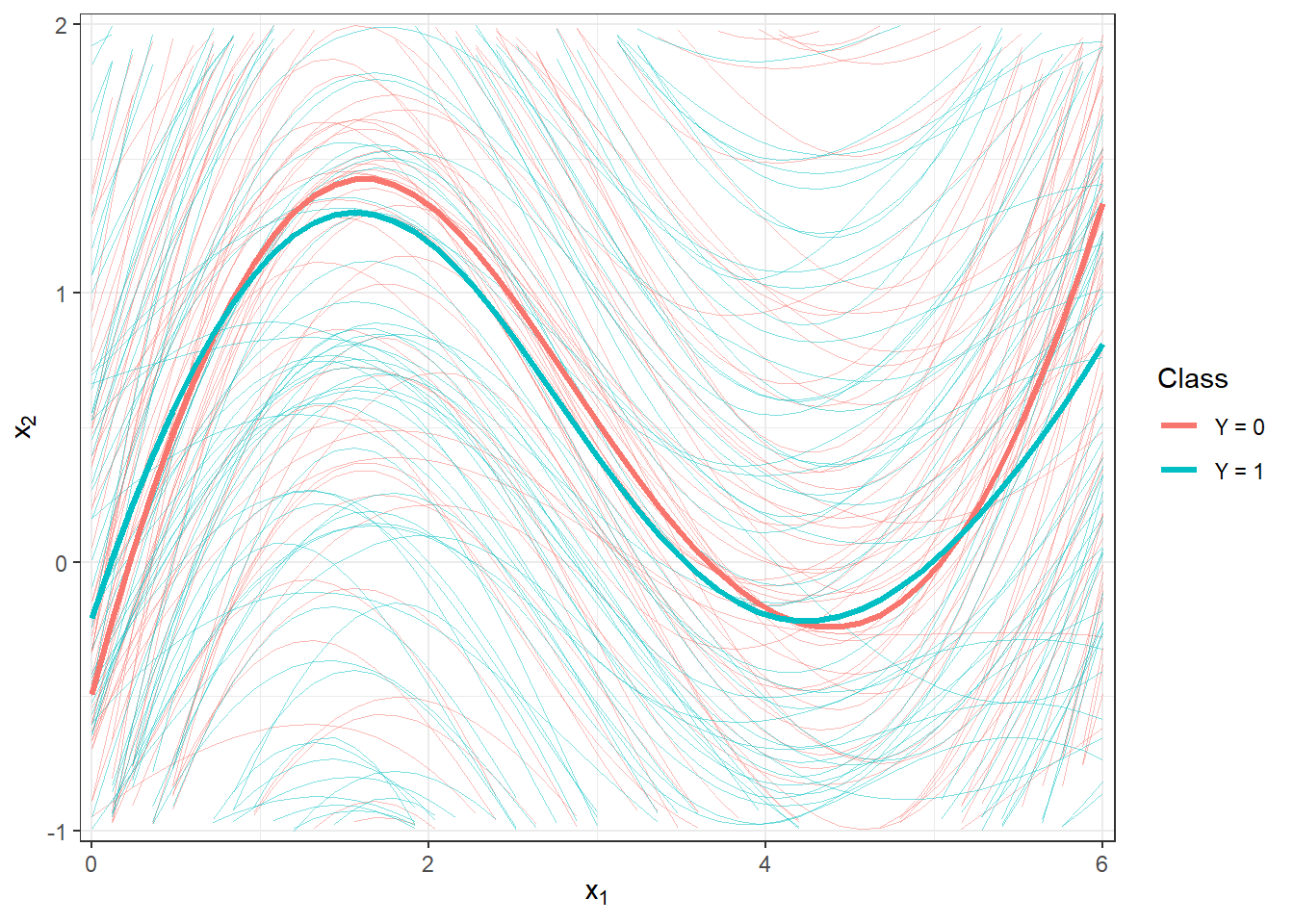

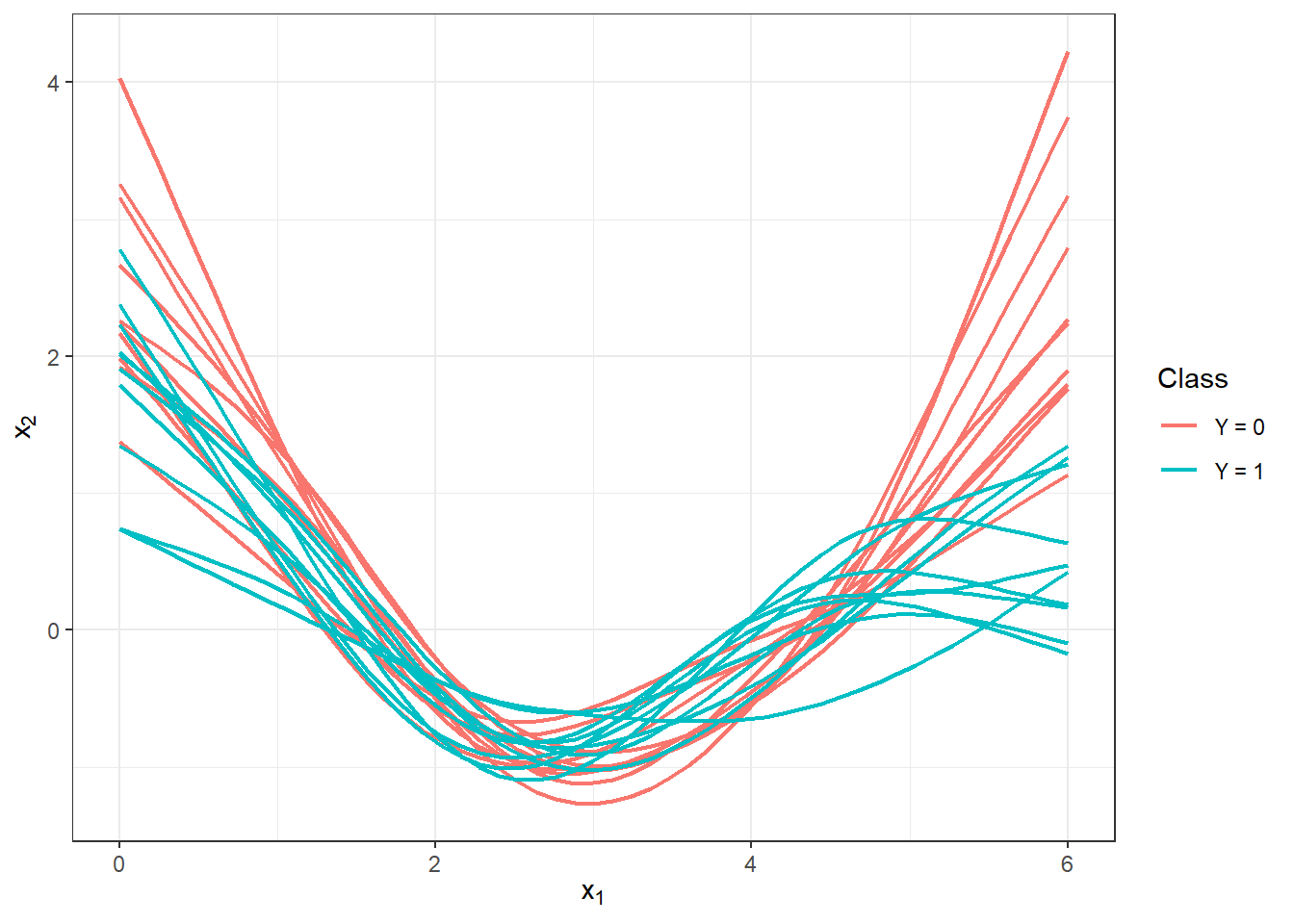

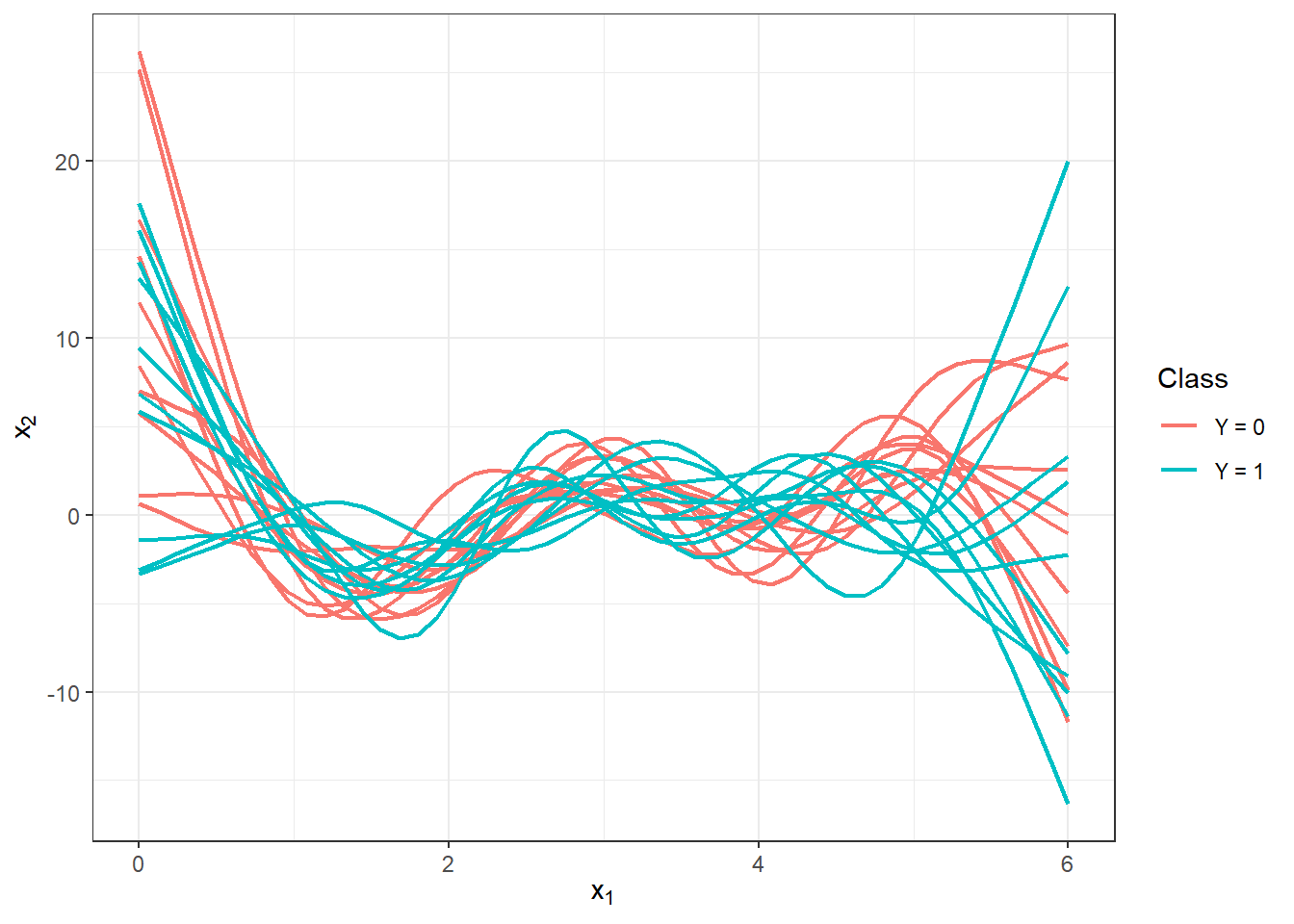

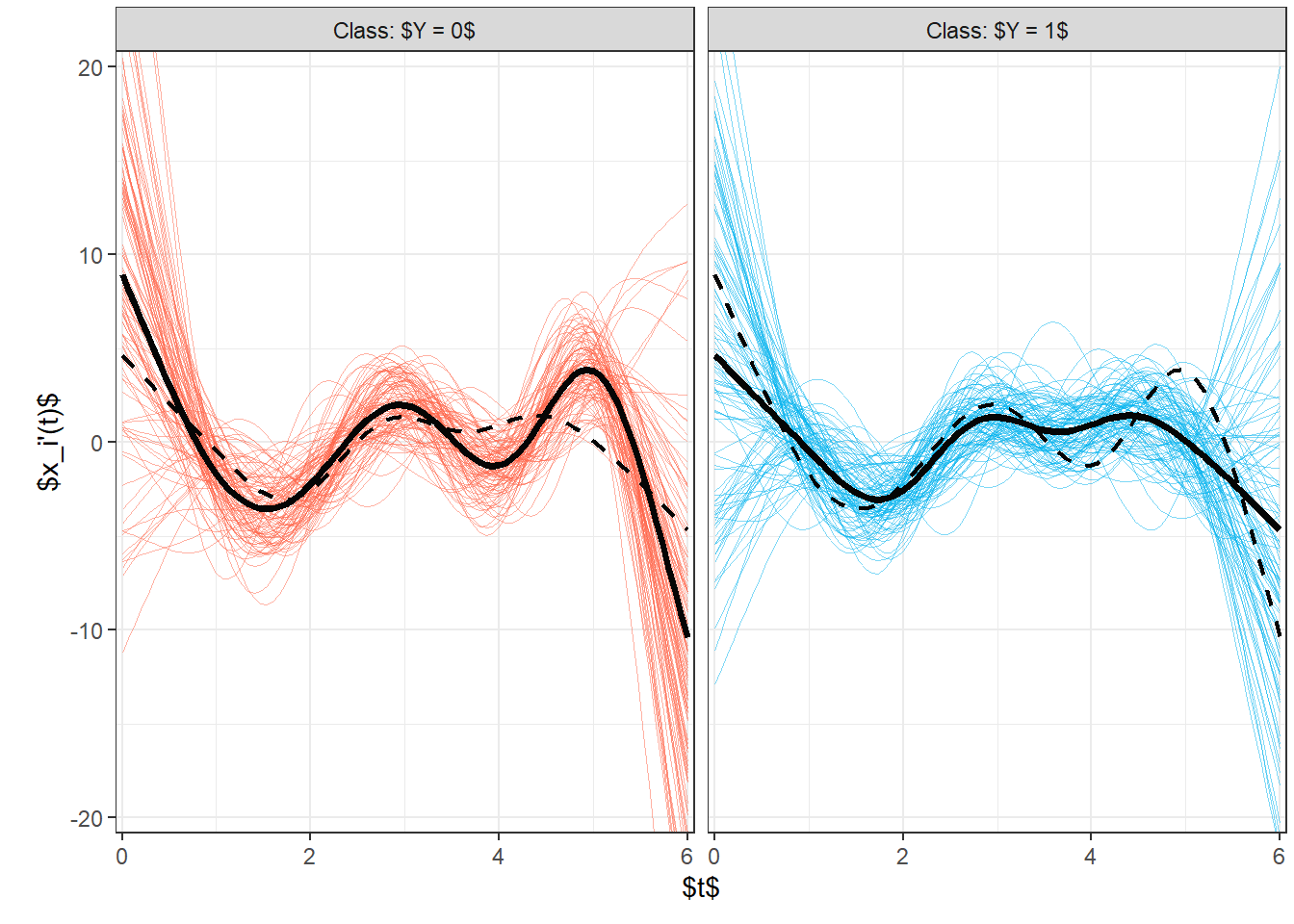

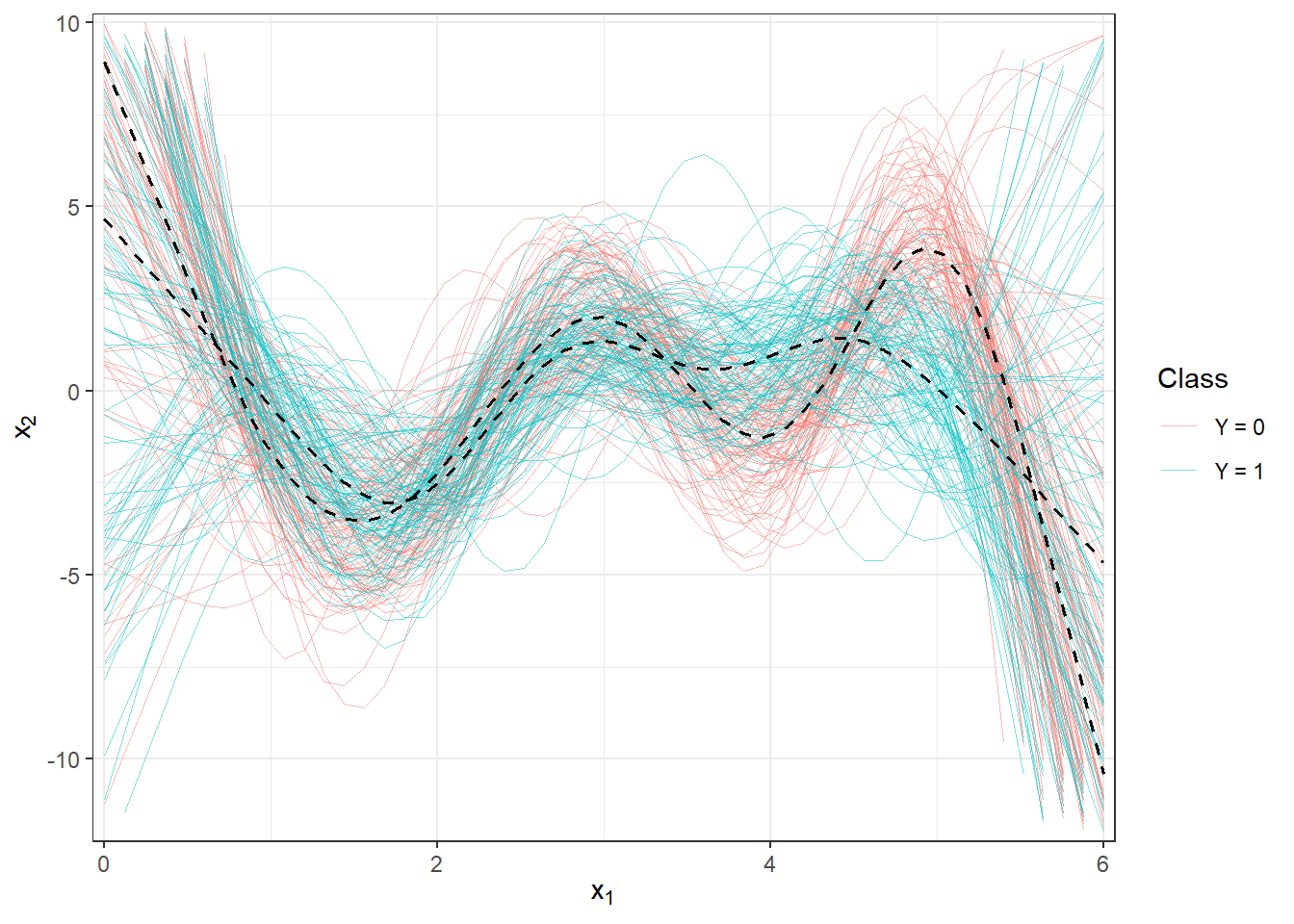

Now let’s plot the first few first derivatives for both classification classes. Notice from the figure below that differentiation has indeed removed the vertical shift. However, we have lost some of the separation between the classes because, as the figure suggests, the derivative curves of the two classes differ primarily towards the end of the interval, approximately for arguments in \([5, 6]\).

Code

fdobjSmootheval <- eval.fd(fdobj = XXder, evalarg = t)

DF$Vsmooth <- c(fdobjSmootheval[, c(1 : n_curves_plot,

(n + 1) : (n + n_curves_plot))])

DF |> ggplot(aes(x = t, y = Vsmooth, group = interaction(time, group),

colour = group)) +

geom_line(linewidth = 0.75) +

theme_bw() +

labs(x = expression(x[1]),

y = expression(x[2]),

colour = 'Class') +

scale_colour_discrete(labels=c('Y = 0', 'Y = 1'))

Let’s also illustrate all curves including the average separately for each class.

Code

abs.labs <- paste("Classification class:", c("$Y = 0$", "$Y = 1$"))

names(abs.labs) <- c('0', '1')

# fdobjSmootheval <- eval.fd(fdobj = XXfd, evalarg = t)

DFsmooth <- data.frame(

t = rep(t, 2 * n),

time = rep(rep(1:n, each = length(t)), 2),

Smooth = c(fdobjSmootheval),

group = factor(rep(c(0, 1), each = n * length(t)))

)

DFmean <- data.frame(

t = rep(t, 2),

Mean = c(eval.fd(fdobj = mean.fd(XXder[1:n]), evalarg = t),

eval.fd(fdobj = mean.fd(XXder[(n + 1):(2 * n)]), evalarg = t)),

group = factor(rep(c(0, 1), each = length(t)))

)

DFsmooth |> ggplot(aes(x = t, y = Smooth, #group = interaction(time, group),

colour = group)) +

geom_line(aes(group = time), linewidth = 0.05, alpha = 0.5) +

theme_bw() +

labs(x = "$t$",

# y = "$\\frac{\\text d}{\\text d t} x_i(t)$",

y ="$x_i'(t)$",

colour = 'Class') +

# geom_line(data = DFsmooth |>

# mutate(group = factor(ifelse(group == '0', '1', '0'))) |>

# filter(group == '1'),

# aes(x = t, y = Mean, colour = group),

# colour = 'tomato', linewidth = 0.8, linetype = 'solid') +

# geom_line(data = DFsmooth |>

# mutate(group = factor(ifelse(group == '0', '1', '0'))) |>

# filter(group == '0'),

# aes(x = t, y = Mean, colour = group),

# colour = 'deepskyblue2', linewidth = 0.8, linetype = 'solid') +

geom_line(data = DFmean |>

mutate(group = factor(ifelse(group == '0', '1', '0'))),

aes(x = t, y = Mean, colour = group),

colour = 'grey2', linewidth = 0.8, linetype = 'dashed') +

geom_line(data = DFmean, aes(x = t, y = Mean, colour = group),

colour = 'grey2', linewidth = 1.25, linetype = 'solid') +

scale_x_continuous(expand = c(0.01, 0.01)) +

facet_wrap(~group, labeller = labeller(group = abs.labs)) +

scale_y_continuous(expand = c(0.02, 0.02)) +

theme(legend.position = 'none',

plot.margin = unit(c(0.1, 0.1, 0.3, 0.5), "cm")) +

coord_cartesian(ylim = c(-1.4, 3.5)) +

scale_colour_manual(values = c('tomato', 'deepskyblue2'))

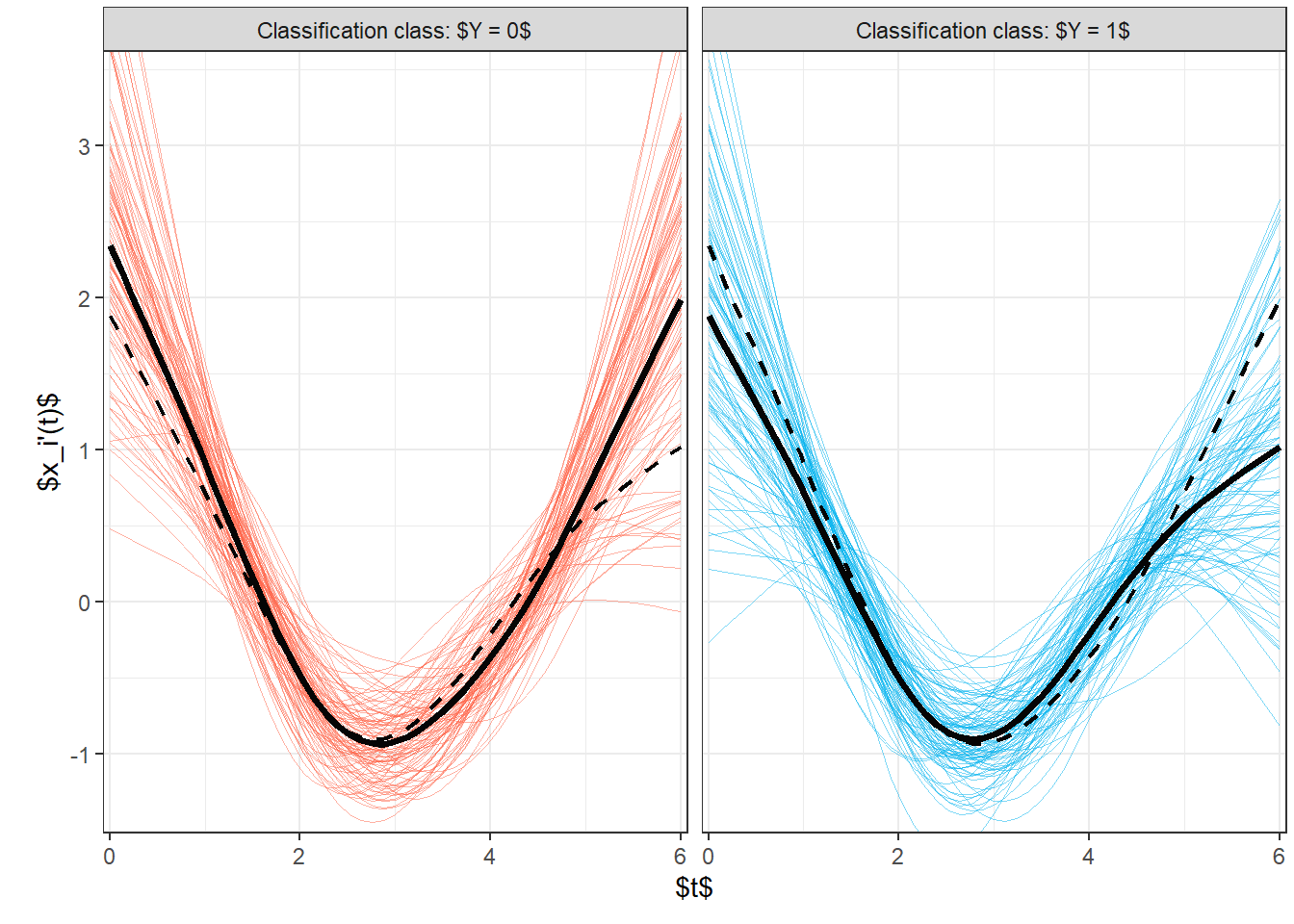

Figure 5.1: Plot of the first derivatives of all smoothed curves, colored according to classification class membership. The average for each class is plotted as a solid black line; the dashed line shows the average of the other class.

Code

DFsmooth <- data.frame(

t = rep(t, 2 * n),

time = rep(rep(1:n, each = length(t)), 2),

Smooth = c(fdobjSmootheval),

Mean = c(rep(apply(fdobjSmootheval[ , 1 : n], 1, mean), n),

rep(apply(fdobjSmootheval[ , (n + 1) : (2 * n)], 1, mean), n)),

group = factor(rep(c(0, 1), each = n * length(t)))

)

DFmean <- data.frame(

t = rep(t, 2),

Mean = c(apply(fdobjSmootheval[ , 1 : n], 1, mean),

apply(fdobjSmootheval[ , (n + 1) : (2 * n)], 1, mean)),

group = factor(rep(c(0, 1), each = length(t)))

)

DFsmooth |> ggplot(aes(x = t, y = Smooth, group = interaction(time, group),

colour = group)) +

geom_line(linewidth = 0.25, alpha = 0.5) +

theme_bw() +

labs(x = expression(x[1]),

y = expression(x[2]),

colour = 'Class') +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

geom_line(aes(x = t, y = Mean), colour = 'grey3',

linewidth = 0.7, linetype = 'dashed') +

scale_x_continuous(expand = c(0.01, 0.01)) +

#ylim(c(-1, 2)) +

scale_y_continuous(expand = c(0.01, 0.01), limits = c(-1.5, 2))

Figure 5.2: Plot of the first derivatives of all smoothed curves, colored according to classification class membership. The average for each class is plotted as a dashed black line. Zoomed view.

5.1.3 Classification of Curves

First, we will load the necessary libraries for classification.

Code

library(caTools) # for splitting into test and training sets

library(caret) # for k-fold CV

library(fda.usc) # for KNN, fLR

library(MASS) # for LDA

library(fdapace)

library(pracma)

library(refund) # for logistic regression on scores

library(nnet) # for logistic regression on scores


library(rpart) # decision trees

library(rattle) # visualization

library(e1071)

library(randomForest) # random forest

To compare individual classifiers, we will split the generated observations into two parts in a 70:30 ratio for training and testing (validation) sets. The training set will be used to construct the classifier, while the test set will be used to calculate the classification error and potentially other characteristics of our model. The resulting classifiers can then be compared based on these computed characteristics in terms of their classification success.
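The splitting code is not shown here. The following base R sketch illustrates one way to obtain a 70:30 split of the indices; it is a stand-in, not necessarily the call used in the chapter (which loads caTools for this purpose), and the seed and the names `idx_train`, `idx_test`, `Y.train.sketch`, and `Y.test.sketch` are assumptions.

```r
set.seed(42)                          # assumed seed
n <- 100                              # curves per class, as in the text
Y <- rep(c(0, 1), each = n)           # class labels for the 2 * n curves

# Randomly assign 70 % of the indices to the training set
idx_train <- sample(seq_along(Y), size = round(0.7 * length(Y)))
idx_test  <- setdiff(seq_along(Y), idx_train)

Y.train.sketch <- Y[idx_train]
Y.test.sketch  <- Y[idx_test]

# Absolute and relative class frequencies in both parts
table(Y.train.sketch)
prop.table(table(Y.test.sketch))
```

A purely random split like this one does not force the classes to be exactly balanced in each part, which is why the class counts should be checked after splitting.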


Next, we will examine the representation of individual groups in the test and training portions of the data.

## Y.train

## 0 1

## 71 69

## Y.test

## 0 1

## 29 31

## Y.train

## 0 1

## 0.5071429 0.4928571

## Y.test

## 0 1

## 0.4833333 0.5166667

5.1.3.1 \(K\) Nearest Neighbors

Let’s start with a non-parametric classification method, specifically the \(K\) nearest neighbors method. First, we will create the necessary objects so that we can work with them using the classif.knn() function from the fda.usc library.

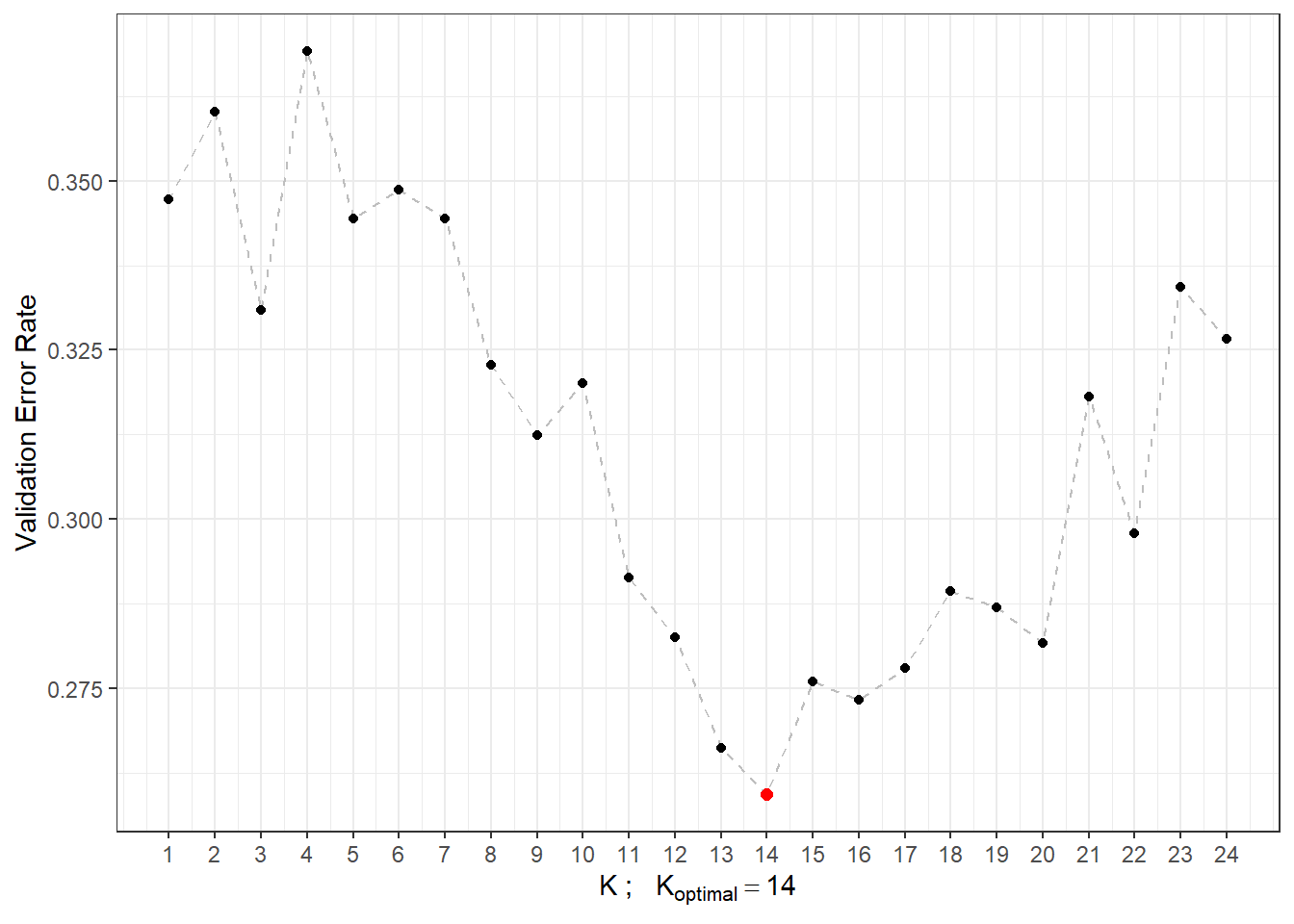

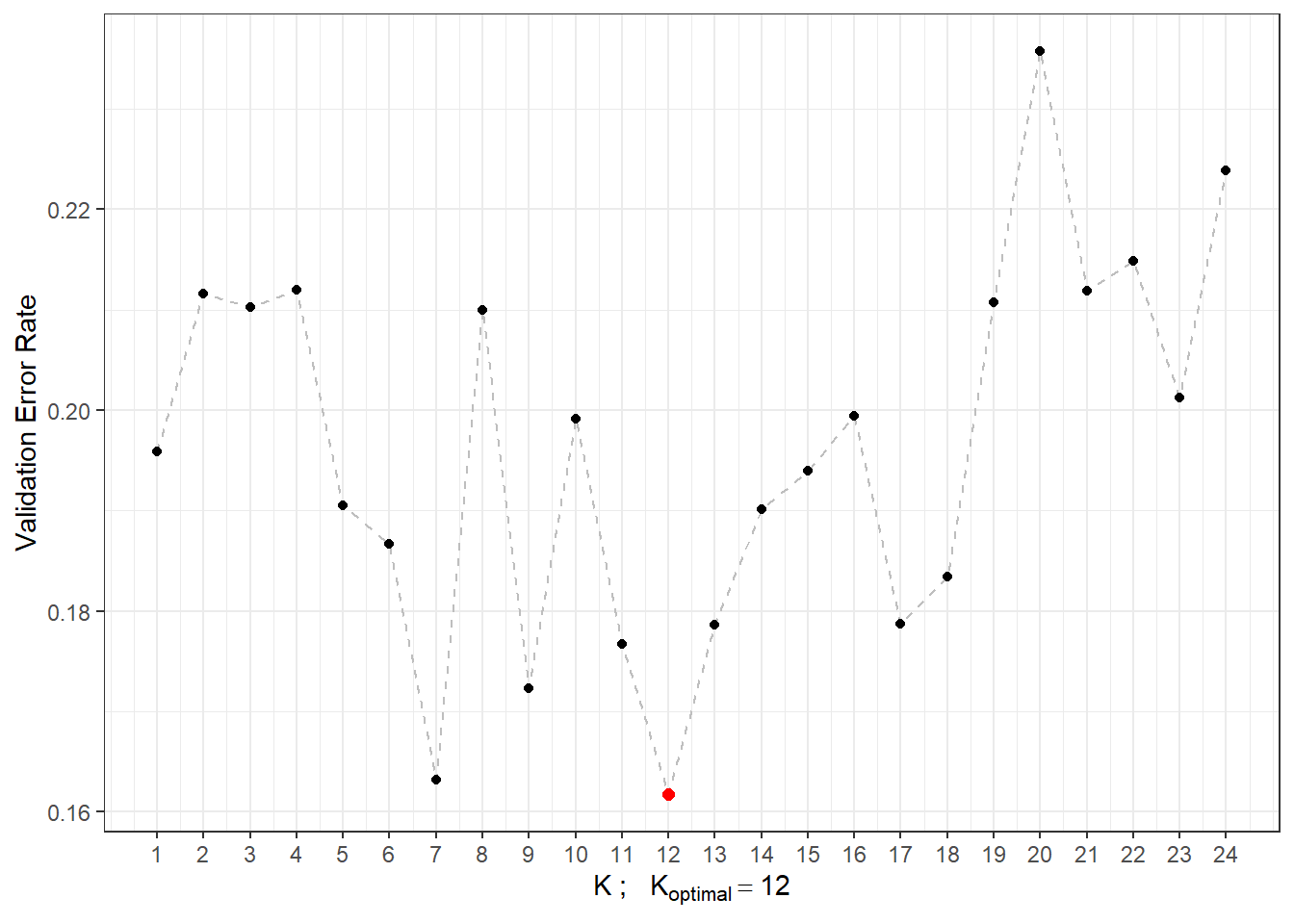

Now we can define the model and look at its classification success. The remaining question is how to choose the optimal number of neighbors \(K\). We could take the value of \(K\) that minimizes the error rate on the training data, but this could lead to overfitting, so we will use cross-validation instead. Given the computational complexity and the size of the dataset, we opt for \(k\)-fold CV with \(k = 10\).

Code

# model for all training data for K = 1, 2, ..., sqrt(n_train)

neighb.model <- classif.knn(group = y.train,

fdataobj = x.train,

knn = c(1:round(sqrt(length(y.train)))))

# summary(neighb.model) # summary of the model

# plot(neighb.model$gcv, pch = 16) # plot GCV dependence on the number of neighbors K

# neighb.model$max.prob # maximum accuracy

(K.opt <- neighb.model$h.opt) # optimal value of K

## [1] 12

Let’s now repeat the previous procedure with \(k\)-fold cross-validation: we split the training data into \(k\) parts and run the code once for each part.

Code

k_cv <- 10 # k-fold CV

neighbours <- c(1:(2 * ceiling(sqrt(length(y.train))))) # number of neighbors

# split training data into k parts

folds <- createMultiFolds(X.train$fdnames$reps, k = k_cv, times = 1)

# empty matrix to store the results

# columns will contain accuracy values for the corresponding part of the training set

# rows will contain values for the given number of neighbors K

CV.results <- matrix(NA, nrow = length(neighbours), ncol = k_cv)

for (index in 1:k_cv) {

# define the current index set

fold <- folds[[index]]

x.train.cv <- subset(X.train, c(1:length(X.train$fdnames$reps)) %in% fold) |>

fdata()

y.train.cv <- subset(Y.train, c(1:length(X.train$fdnames$reps)) %in% fold) |>

factor() |> as.numeric()

x.test.cv <- subset(X.train, !c(1:length(X.train$fdnames$reps)) %in% fold) |>

fdata()

y.test.cv <- subset(Y.train, !c(1:length(X.train$fdnames$reps)) %in% fold) |>

factor() |> as.numeric()

# iterate over each part ... repeat k times

for(neighbour in neighbours) {

# model for specific choice of K

neighb.model <- classif.knn(group = y.train.cv,

fdataobj = x.train.cv,

knn = neighbour)

# predictions on validation set

model.neighb.predict <- predict(neighb.model,

new.fdataobj = x.test.cv)

# accuracy on validation set

accuracy <- table(y.test.cv, model.neighb.predict) |>

prop.table() |> diag() |> sum()

# store accuracy in the position for given K and fold

CV.results[neighbour, index] <- accuracy

}

}

# compute average accuracies for individual K across folds

CV.results <- apply(CV.results, 1, mean)

K.opt <- which.max(CV.results)

presnost.opt.cv <- max(CV.results)

# CV.results

We can see that the best value for the parameter \(K\) is 14, with an error rate calculated using 10-fold CV of 0.2594.

For clarity, let’s also plot the validation error rate as a function of the number of neighbors \(K\).

Code

CV.results <- data.frame(K = neighbours, CV = CV.results)

CV.results |> ggplot(aes(x = K, y = 1 - CV)) +

geom_line(linetype = 'dashed', colour = 'grey') +

geom_point(size = 1.5) +

annotate('point', x = K.opt, y = 1 - presnost.opt.cv, colour = 'red', size = 2) +

theme_bw() +

labs(x = bquote(paste(K, ' ; ',

K[optimal] == .(K.opt))),

y = 'Validation Error Rate') +

scale_x_continuous(breaks = neighbours)

Figure 5.3: Dependency of validation error rate on the value of \(K\), i.e., on the number of neighbors.

Now that we have determined the optimal value of the parameter \(K\), we can build the final model.

Code

neighb.model <- classif.knn(group = y.train, fdataobj = x.train, knn = K.opt)

# predictions

model.neighb.predict <- predict(neighb.model,

new.fdataobj = fdata(X.test))

# summary(neighb.model)

# accuracy on test data

accuracy <- table(as.numeric(factor(Y.test)), model.neighb.predict) |>

prop.table() |>

diag() |>

sum()

# error rate

# 1 - accuracy

Thus, the error rate of the model constructed using the \(K\)-nearest neighbors method with the optimal choice of \(K_{optimal}\) equal to 14, determined by cross-validation, is 0.3071 on the training data and 0.1833 on the test data.

To compare different models, we can use both types of error rates, which we will store in a table for clarity.
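The code that builds this table is not shown here. The following is a minimal sketch of such a table, assuming a plain data frame. The names `RESULTS`, `Err.train`, `Err.test`, and the helper `add_result()` are hypothetical; the two error rates are the ones reported above for the \(K\)-nearest neighbors method.

```r
# Hypothetical results table; the name RESULTS and its columns are illustrative
RESULTS <- data.frame(
  model     = "KNN",
  Err.train = 0.3071,   # training error rate reported above
  Err.test  = 0.1833    # test error rate reported above
)

# Hypothetical helper for appending one row per classifier
add_result <- function(results, model, err_train, err_test) {
  rbind(results,
        data.frame(model = model, Err.train = err_train, Err.test = err_test))
}

RESULTS
```

Each later classifier can then be appended with one call to `add_result()`, so that all methods end up side by side in one table.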

5.1.3.2 Linear Discriminant Analysis

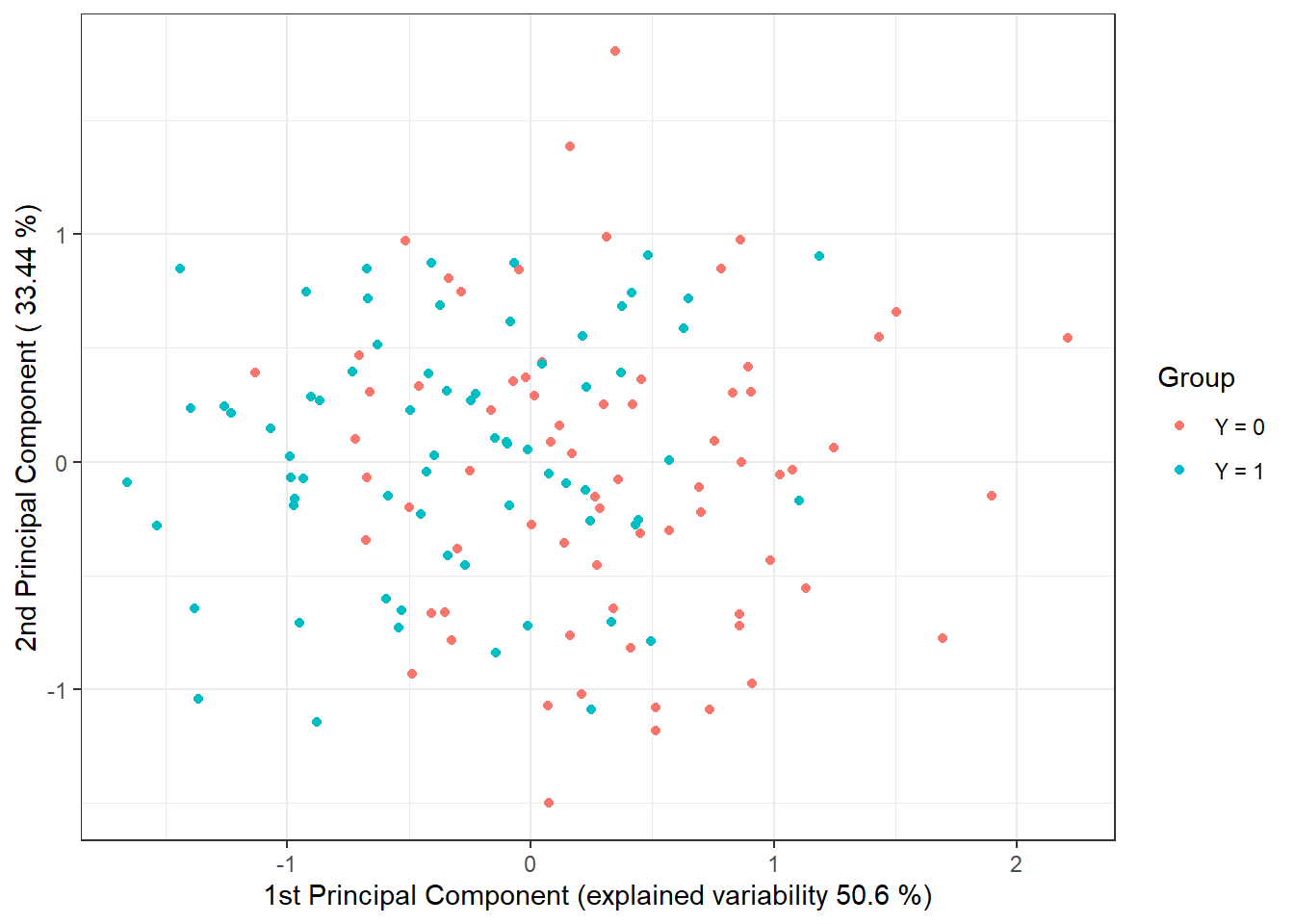

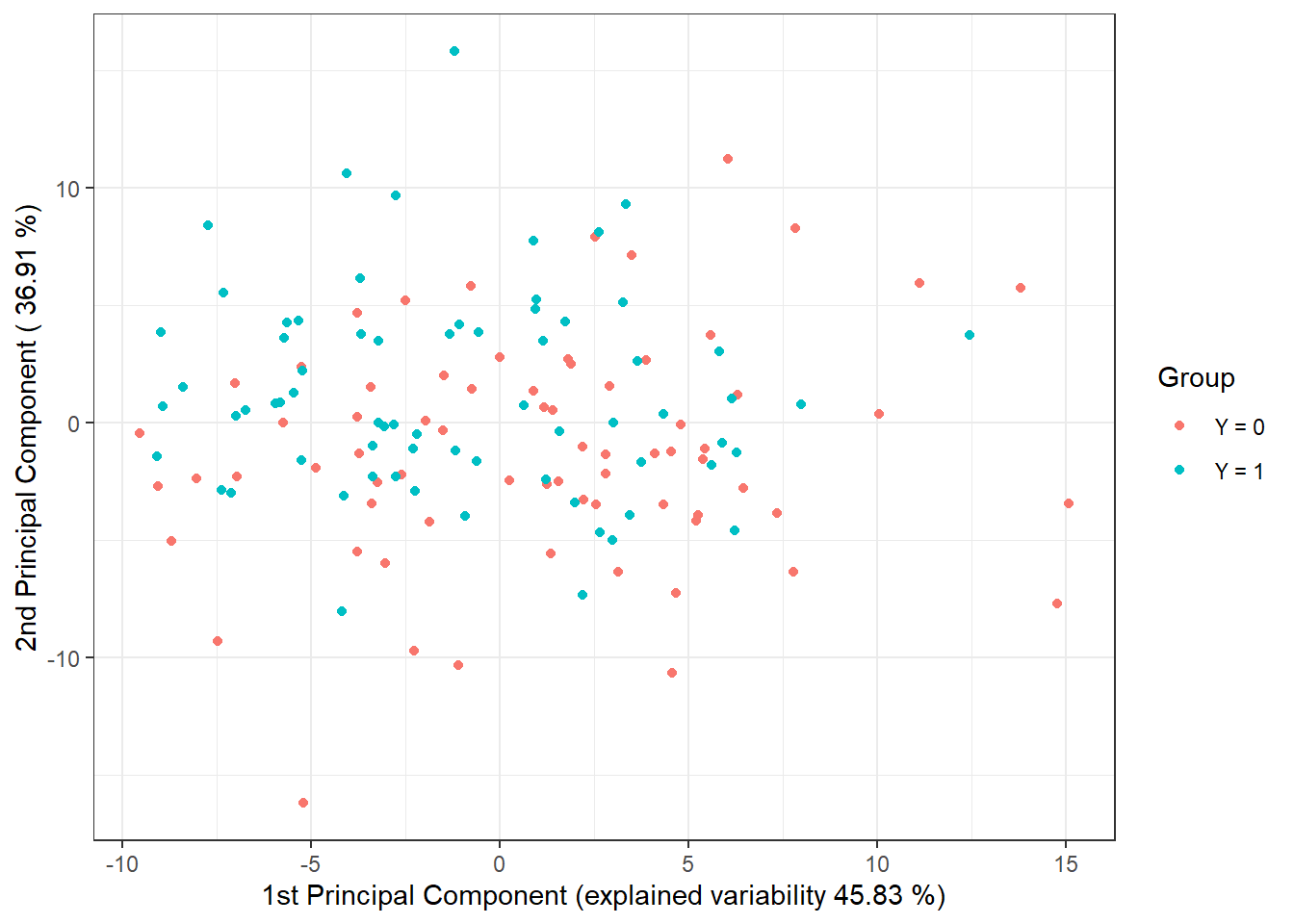

As the second method for constructing a classifier, we will consider Linear Discriminant Analysis (LDA). Since this method cannot be applied directly to functional data, we must first reduce the data to a finite-dimensional representation, which we will do using Functional Principal Component Analysis (FPCA). We will then run the classification algorithm on the scores of the first \(p\) principal components. We will choose the number of components \(p\) such that the first \(p\) principal components together explain at least 90% of the variability in the data.

First, let’s perform the functional principal component analysis and determine the number \(p\).

Code

# principal component analysis

data.PCA <- pca.fd(X.train, nharm = 10) # nharm - maximum number of PCs

nharm <- which(cumsum(data.PCA$varprop) >= 0.9)[1] # determine p

if(nharm == 1) nharm <- 2

data.PCA <- pca.fd(X.train, nharm = nharm)

data.PCA.train <- as.data.frame(data.PCA$scores) # scores of the first p PCs

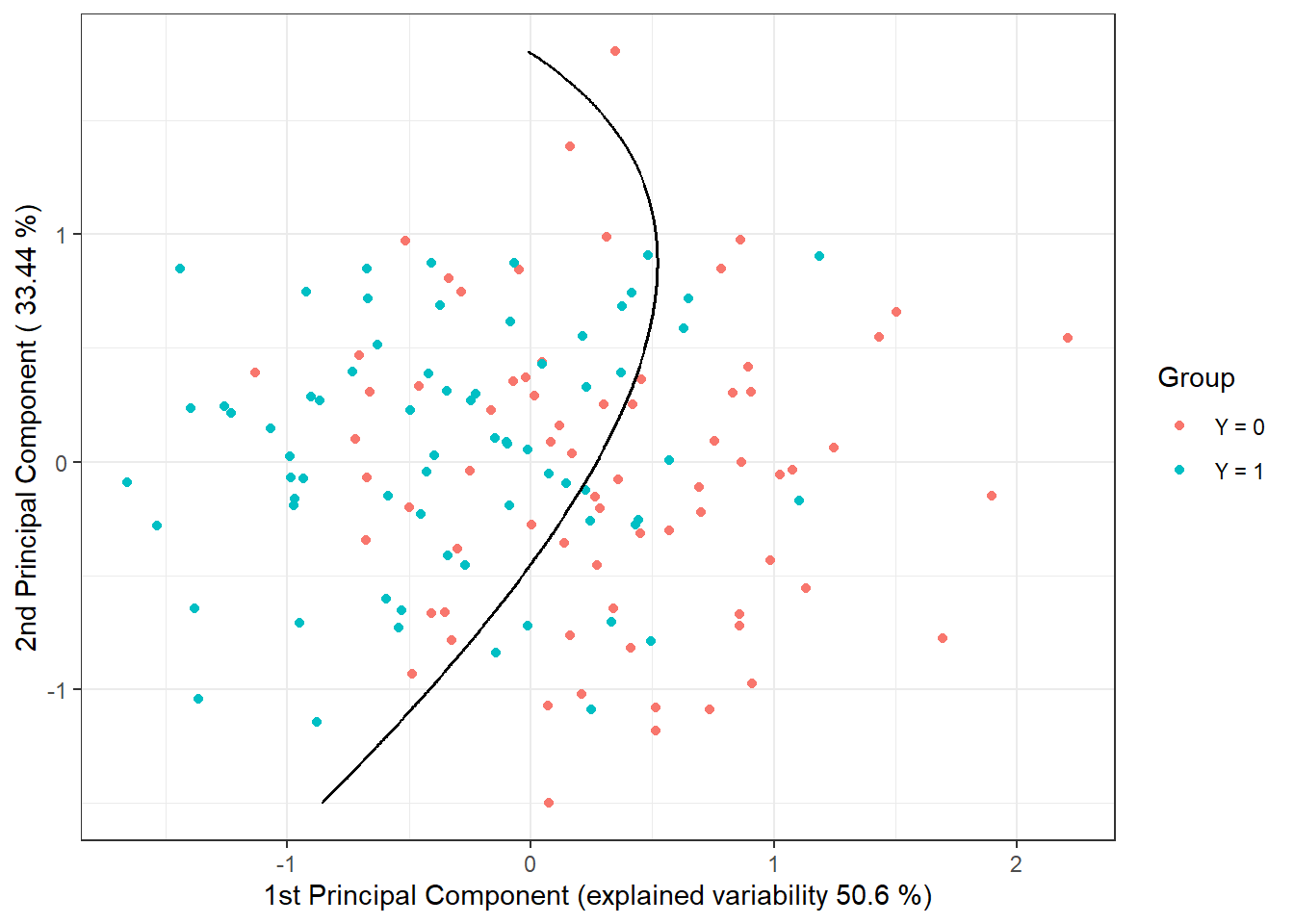

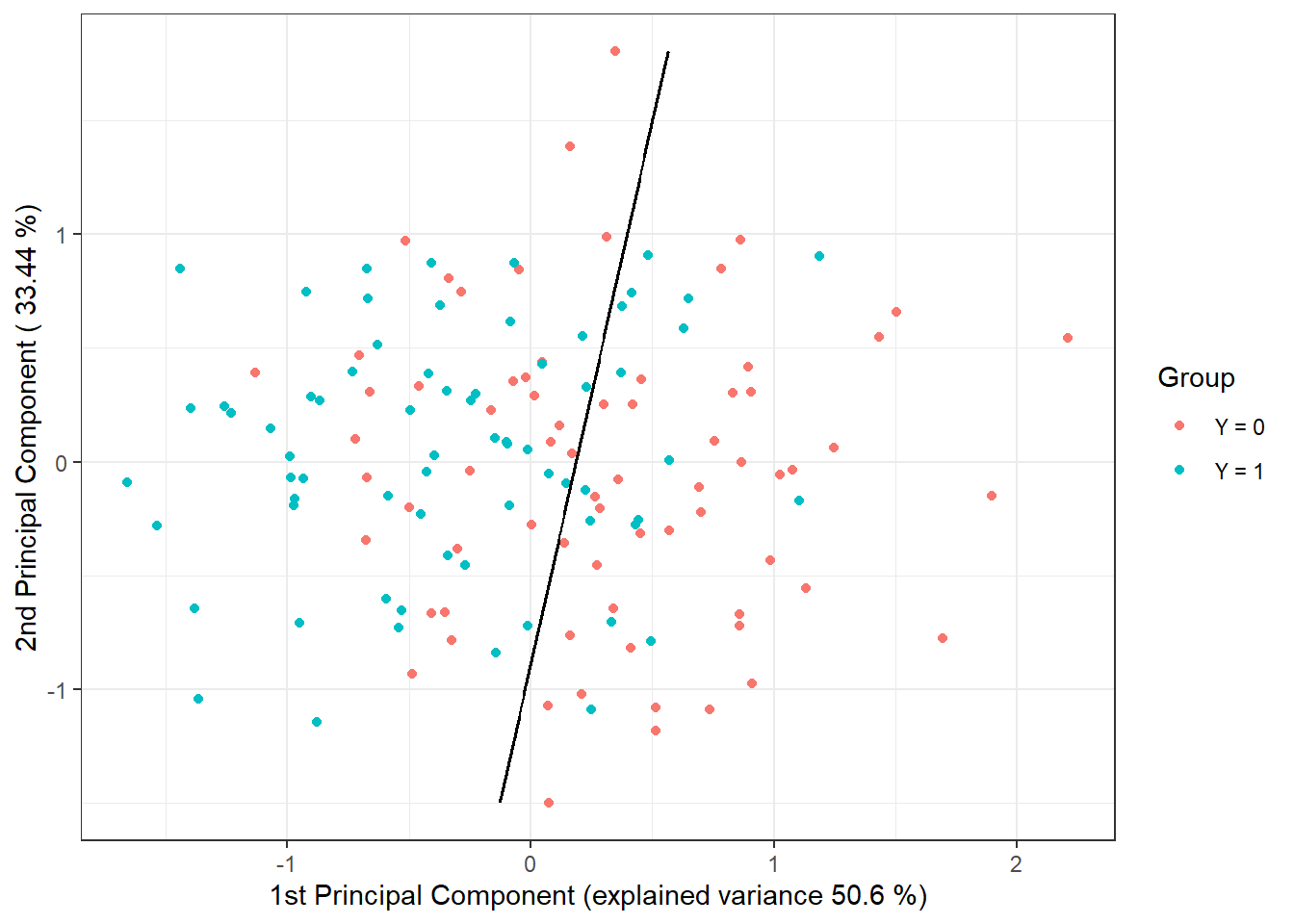

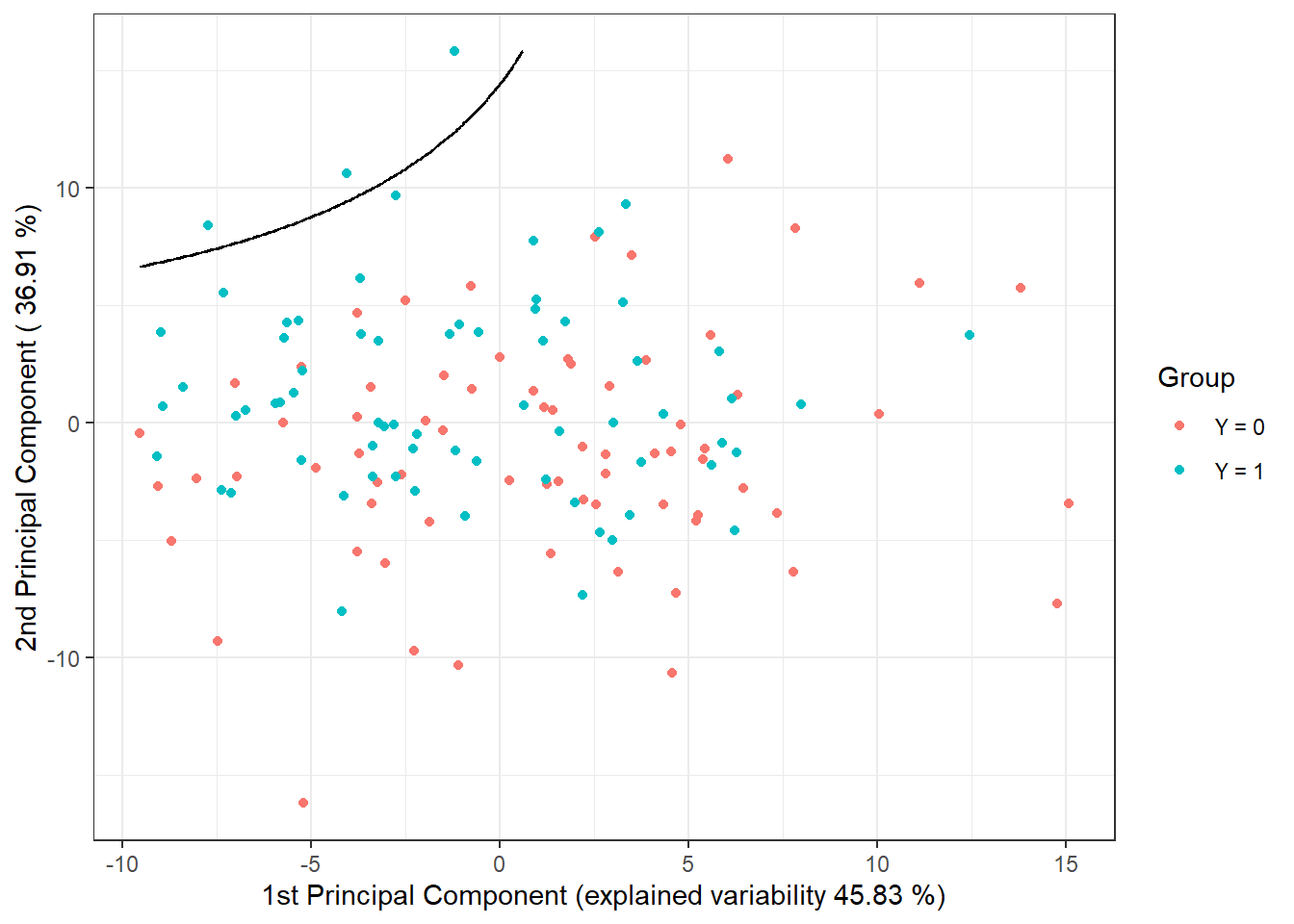

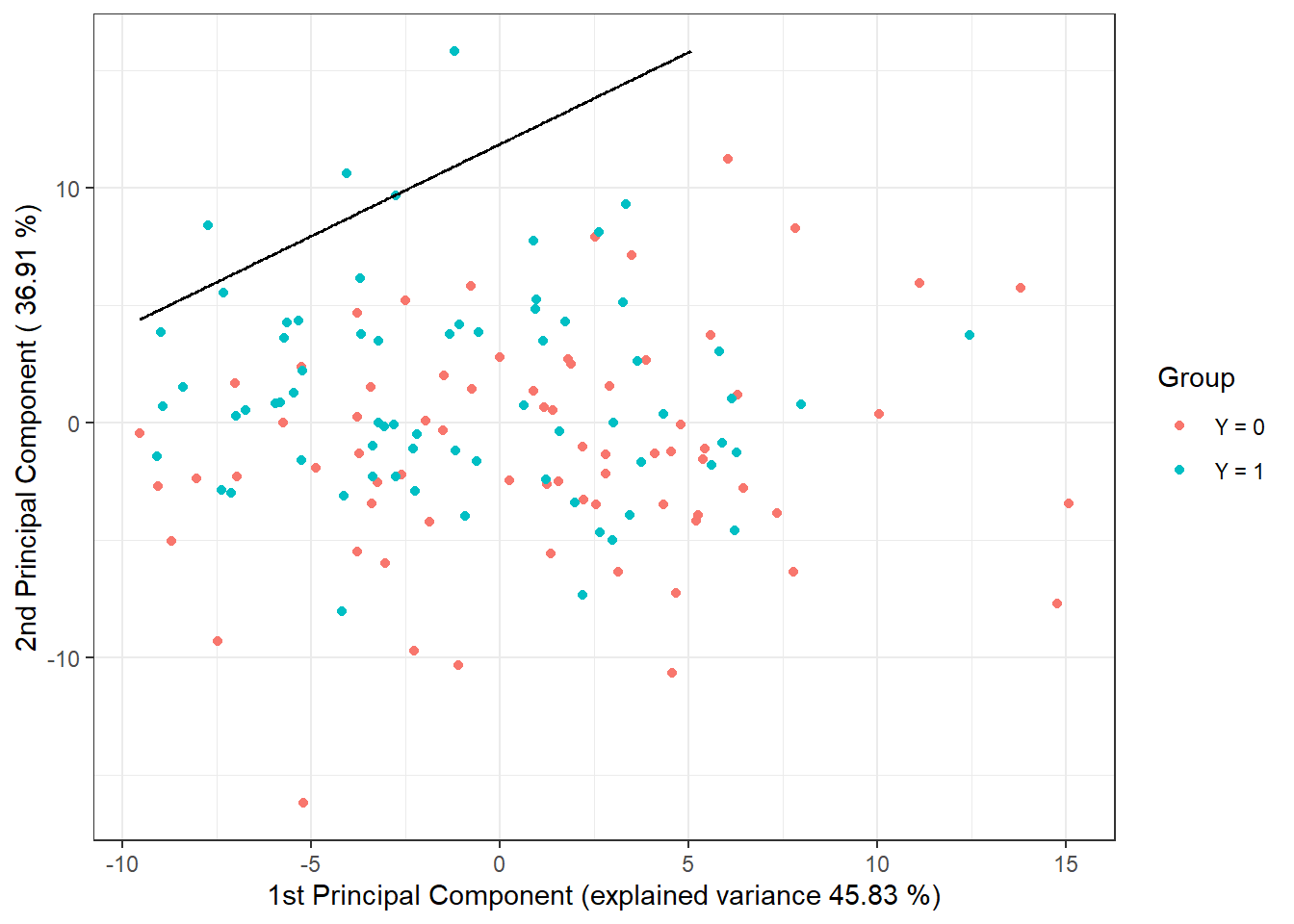

data.PCA.train$Y <- factor(Y.train) # class membershipIn this particular case, we took the number of principal components as \(p\) = 3, which together explain 93.96 % of the variability in the data. The first principal component explains 50.6 % and the second 33.44 % of the variability. We can graphically display the scores of the first two principal components, color-coded according to class membership.

Code

data.PCA.train |> ggplot(aes(x = V1, y = V2, colour = Y)) +

geom_point(size = 1.5) +

labs(x = paste('1st Principal Component (explained variability',

round(100 * data.PCA$varprop[1], 2), '%)'),

y = paste('2nd Principal Component (',

round(100 * data.PCA$varprop[2], 2), '%)'),

colour = 'Group') +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

theme_bw()

Figure 5.4: Scores of the first two principal components for the training data. Points are color-coded according to class membership.

To determine the classification accuracy on the test data, we need to calculate the scores for the first 3 principal components for the test data. These scores are determined using the formula:

\[ \xi_{i, j} = \int \left( X_i(t) - \mu(t)\right) \cdot \rho_j(t) \,\text d t, \]

where \(\mu(t)\) is the mean function and \(\rho_j(t)\) is the eigenfunction (functional principal component).

Code

# compute scores for test functions

scores <- matrix(NA, ncol = nharm, nrow = length(Y.test)) # empty matrix

for(k in 1:dim(scores)[1]) {

xfd = X.test[k] - data.PCA$meanfd[1] # k-th observation - mean function

scores[k, ] = inprod(xfd, data.PCA$harmonics)

# scalar product of residuals and eigenfunctions (functional principal components)

}

data.PCA.test <- as.data.frame(scores)

data.PCA.test$Y <- factor(Y.test)

colnames(data.PCA.test) <- colnames(data.PCA.train)

Now we can construct the classifier on the training portion of the data.

Code

# model

clf.LDA <- lda(Y ~ ., data = data.PCA.train)

# accuracy on training data

predictions.train <- predict(clf.LDA, newdata = data.PCA.train)

accuracy.train <- table(data.PCA.train$Y, predictions.train$class) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.LDA, newdata = data.PCA.test)

accuracy.test <- table(data.PCA.test$Y, predictions.test$class) |>

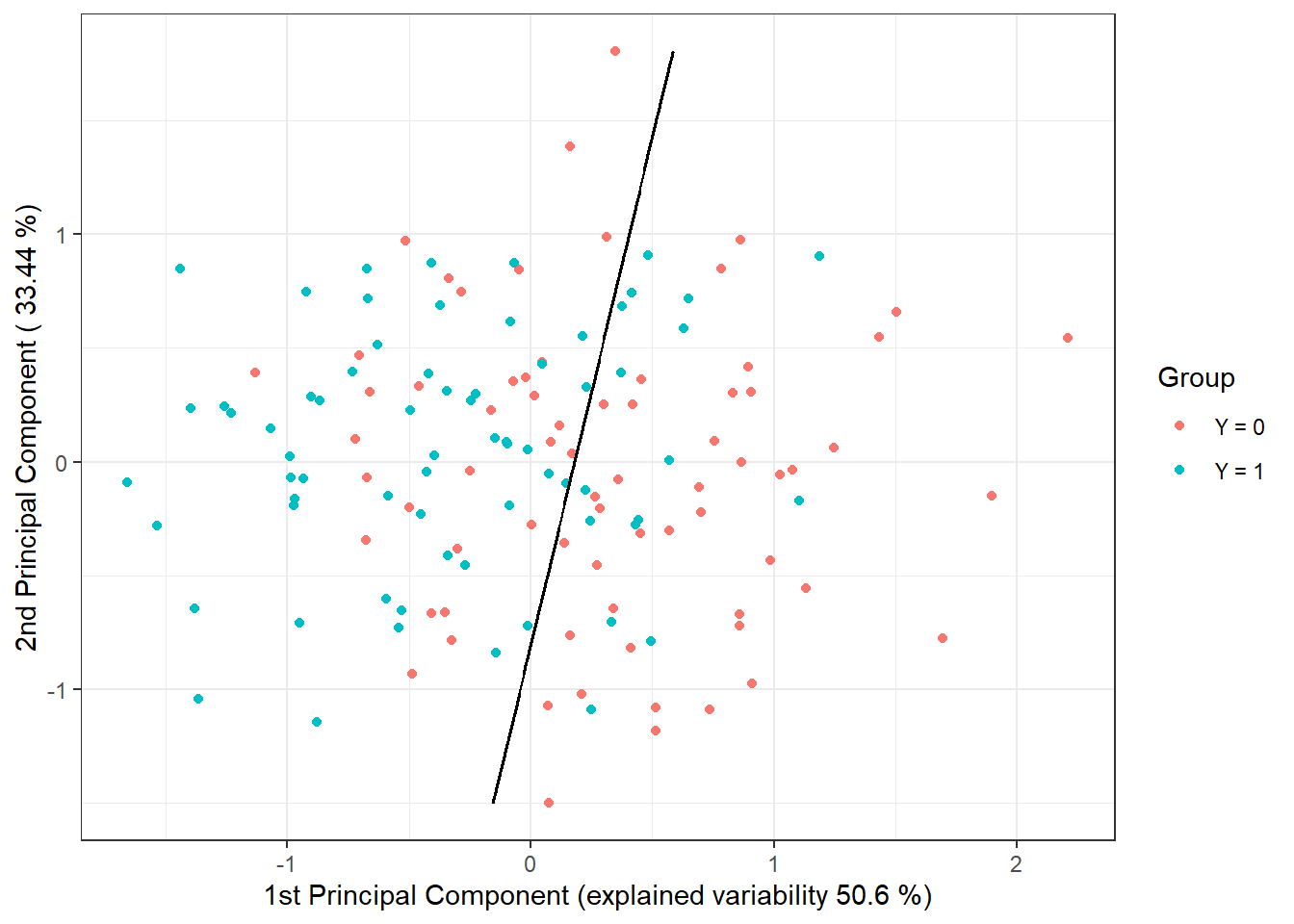

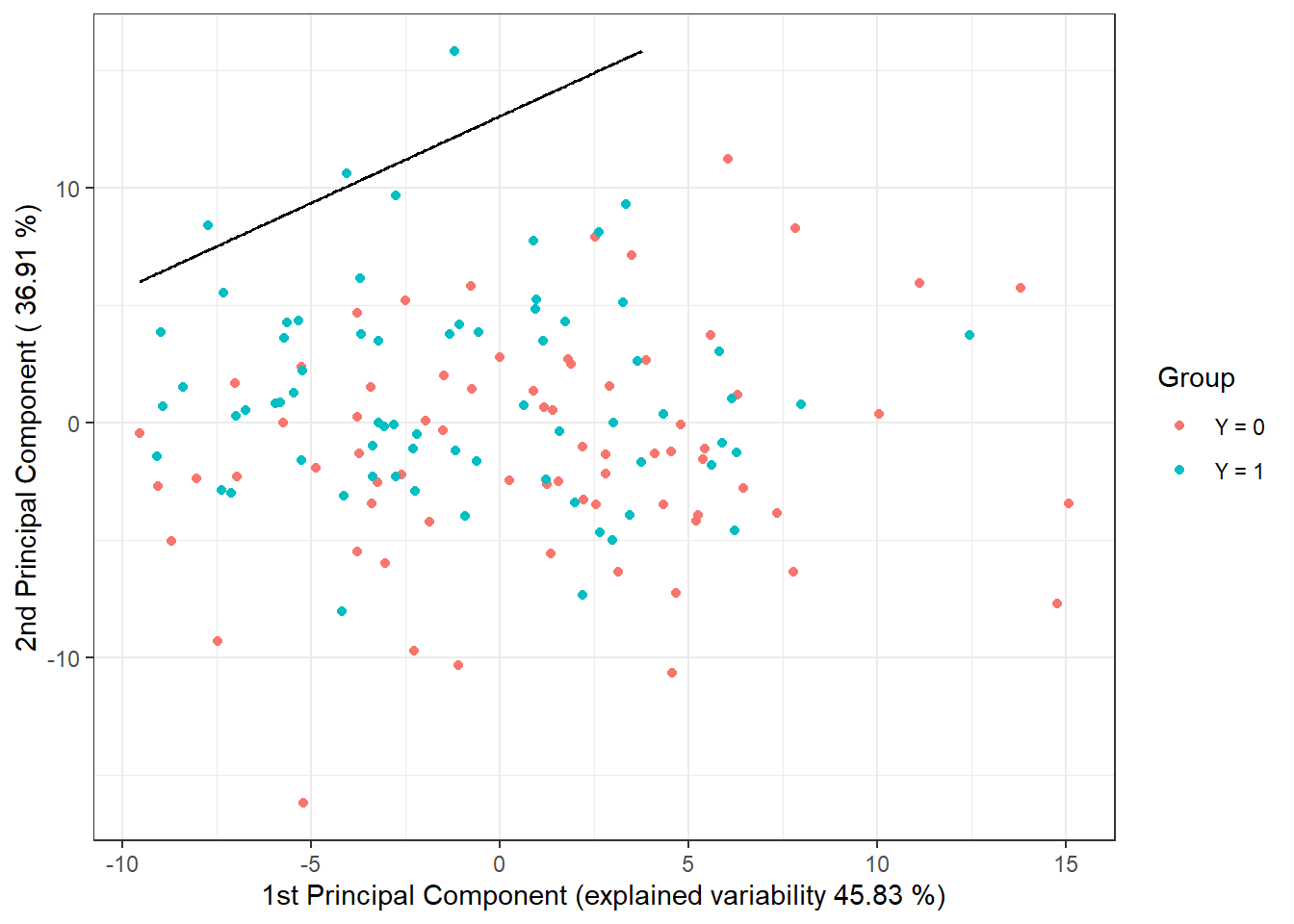

prop.table() |> diag() |> sum()We have calculated the error rate of the classifier on the training (31.43 %) and on the test data (23.33 %).

To visually represent the method, we can indicate the decision boundary in the plot of the scores of the first two principal components. We will compute this boundary on a dense grid of points and display it using the geom_contour() function.

Code

# add decision boundary

np <- 1001 # number of grid points

# x-axis ... 1st PC

nd.x <- seq(from = min(data.PCA.train$V1),

to = max(data.PCA.train$V1), length.out = np)

# y-axis ... 2nd PC

nd.y <- seq(from = min(data.PCA.train$V2),

to = max(data.PCA.train$V2), length.out = np)

# case for 2 PCs ... p = 2

nd <- expand.grid(V1 = nd.x, V2 = nd.y)

# if p = 3

if(dim(data.PCA.train)[2] == 4) {

nd <- expand.grid(V1 = nd.x, V2 = nd.y, V3 = data.PCA.train$V3[1])}

# if p = 4

if(dim(data.PCA.train)[2] == 5) {

nd <- expand.grid(V1 = nd.x, V2 = nd.y, V3 = data.PCA.train$V3[1],

V4 = data.PCA.train$V4[1])}

# if p = 5

if(dim(data.PCA.train)[2] == 6) {

nd <- expand.grid(V1 = nd.x, V2 = nd.y, V3 = data.PCA.train$V3[1],

V4 = data.PCA.train$V4[1], V5 = data.PCA.train$V5[1])}

# add Y = 0, 1

nd <- nd |> mutate(prd = as.numeric(predict(clf.LDA, newdata = nd)$class))

data.PCA.train |> ggplot(aes(x = V1, y = V2, colour = Y)) +

geom_point(size = 1.5) +

labs(x = paste('1st Principal Component (explained variability',

round(100 * data.PCA$varprop[1], 2), '%)'),

y = paste('2nd Principal Component (',

round(100 * data.PCA$varprop[2], 2), '%)'),

colour = 'Group') +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

theme_bw() +

geom_contour(data = nd, aes(x = V1, y = V2, z = prd), colour = 'black')

Figure 1.11: Scores of the first two principal components, color-coded according to class membership. The decision boundary (line in the plane of the first two principal components) between the classes constructed using LDA is marked in black.

We see that the decision boundary is a line, a linear function in the 2D space, which is indeed what we expected from LDA. Finally, we will add the error rates to the summary table.

5.1.3.3 Quadratic Discriminant Analysis

Next, we will construct a classifier using Quadratic Discriminant Analysis (QDA). This is analogous to LDA, with the difference that we now allow a different covariance matrix for each of the classes from which the corresponding scores are drawn. Relaxing the assumption of equal covariance matrices leads to a quadratic boundary between the classes.
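To see where the quadratic boundary comes from, recall the Gaussian discriminant function of class \(k\). The notation is introduced here for illustration: \(\boldsymbol\xi\) is the vector of scores, \(\boldsymbol\mu_k\) and \(\boldsymbol\Sigma_k\) are the class mean and covariance matrix, and \(p_k\) is the prior probability of class \(k\):

\[ \delta_k(\boldsymbol\xi) = -\frac{1}{2} \ln |\boldsymbol\Sigma_k| - \frac{1}{2} (\boldsymbol\xi - \boldsymbol\mu_k)^\top \boldsymbol\Sigma_k^{-1} (\boldsymbol\xi - \boldsymbol\mu_k) + \ln p_k. \]

The decision boundary is the set where \(\delta_0(\boldsymbol\xi) = \delta_1(\boldsymbol\xi)\). If \(\boldsymbol\Sigma_0 = \boldsymbol\Sigma_1\), the terms quadratic in \(\boldsymbol\xi\) cancel and the boundary is linear, as in LDA. If the covariance matrices differ, the quadratic terms remain and the boundary is a quadratic surface.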

In R, we perform QDA similarly to how we did LDA in the previous section. We will compute the scores for the training and test functions using the results from the functional Principal Component Analysis (PCA) obtained earlier.

Thus, we can proceed directly to constructing the classifier using the qda() function. We will then calculate the accuracy of the classifier on both test and training data.

Code

# model

clf.QDA <- qda(Y ~ ., data = data.PCA.train)

# accuracy on training data

predictions.train <- predict(clf.QDA, newdata = data.PCA.train)

accuracy.train <- table(data.PCA.train$Y, predictions.train$class) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.QDA, newdata = data.PCA.test)

accuracy.test <- table(data.PCA.test$Y, predictions.test$class) |>

prop.table() |> diag() |> sum()

We have calculated the error rate of the classifier on the training data (35 %) and on the test data (20 %).

To visually represent the method, we can indicate the decision boundary in the plot of the scores of the first two principal components. We will compute this boundary on a dense grid of points and display it using the geom_contour() function, just like in the case of LDA.

Code

nd <- nd |> mutate(prd = as.numeric(predict(clf.QDA, newdata = nd)$class))

data.PCA.train |> ggplot(aes(x = V1, y = V2, colour = Y)) +

geom_point(size = 1.5) +

labs(x = paste('1st Principal Component (explained variability',

round(100 * data.PCA$varprop[1], 2), '%)'),

y = paste('2nd Principal Component (',

round(100 * data.PCA$varprop[2], 2), '%)'),

colour = 'Group') +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

theme_bw() +

geom_contour(data = nd, aes(x = V1, y = V2, z = prd), colour = 'black')

Figure 4.1: Scores of the first two principal components, color-coded according to class membership. The decision boundary (a quadratic curve in the plane of the first two principal components) between the classes constructed using QDA is marked in black.

Notice that the decision boundary between the classification classes is now a quadratic curve, which in this plot resembles a parabola.

Finally, we will add the error rates to the summary table.

5.1.3.4 Logistic Regression

We can perform logistic regression in two ways. First, we can use the functional analogue of classical logistic regression, and second, we can apply classical multivariate logistic regression on the scores of the first \(p\) principal components.

5.1.3.4.1 Functional Logistic Regression

Analogous to the case with finite-dimensional input data, we consider the logistic model in the form:

\[ g\left(\mathbb E [Y|X = x]\right) = \eta (x) = g(\pi(x)) = \alpha + \int \beta(t)\cdot x(t) \text d t, \]

where \(\eta(x)\) is a linear predictor taking values in the interval \((-\infty, \infty)\), \(g(\cdot)\) is the link function (in the case of logistic regression, this is the logit function \(g: (0,1) \rightarrow \mathbb R,\ g(p) = \ln\frac{p}{1-p}\)), and \(\pi(x)\) is the conditional probability:

\[ \pi(x) = \text{Pr}(Y = 1 | X = x) = g^{-1}(\eta(x)) = \frac{\text e^{\alpha + \int \beta(t)\cdot x(t) \text d t}}{1 + \text e^{\alpha + \int \beta(t)\cdot x(t) \text d t}}, \]

where \(\alpha\) is a constant and \(\beta(t) \in L^2[a, b]\) is a parametric function. Our goal is to estimate this parametric function.
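To make the model more concrete, here is a minimal base-R sketch of how the integral term can be reduced to an ordinary logistic regression once \(\beta(t)\) is expanded in a basis. It is not the fda.usc implementation. The toy curves, the sample size, the choice of 5 basis functions and all object names (`Psi`, `Z`, `beta_hat`) are assumptions made for illustration. Writing \(\beta(t) = \sum_k b_k \psi_k(t)\) gives \(\int \beta(t) x(t) \text d t = \sum_k b_k \int \psi_k(t) x(t) \text d t\), and the integrals are approximated by the trapezoidal rule.

```r
library(splines)  # B-spline basis; ships with the standard R distribution

set.seed(42)
tt <- seq(0, 6, length.out = 101)             # evaluation grid on I = [0, 6]
n  <- 200                                      # hypothetical sample size
Y  <- rep(c(0, 1), each = n / 2)
# hypothetical toy curves: class 1 has, on average, a steeper slope
slope <- rnorm(n, mean = 0.2 + 0.1 * Y, sd = 0.1)
X <- outer(slope, tt) + matrix(rnorm(n * length(tt), sd = 0.5), nrow = n)

# basis psi_1, ..., psi_5 for beta(t), evaluated on the grid (101 x 5)
Psi <- bs(tt, df = 5, intercept = TRUE)

# trapezoidal quadrature weights on the equidistant grid
h <- tt[2] - tt[1]
w <- c(h / 2, rep(h, length(tt) - 2), h / 2)

# Z[i, k] approximates the integral of x_i(t) * psi_k(t) over [0, 6]
Z <- X %*% (Psi * w)

# ordinary logistic regression on the integrated scores
fit <- glm(Y ~ Z, family = binomial())

# estimated beta(t) on the grid and training accuracy with threshold 1/2
beta_hat  <- as.numeric(Psi %*% coef(fit)[-1])
acc_train <- mean((fitted(fit) >= 0.5) == (Y == 1))
```

The number of columns of `Z` equals the number of basis functions chosen for \(\beta(t)\), so this choice directly controls the flexibility of the fitted model.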

For functional logistic regression, we will use the fregre.glm() function from the fda.usc package. First, we will create suitable objects for the classifier construction.

Code

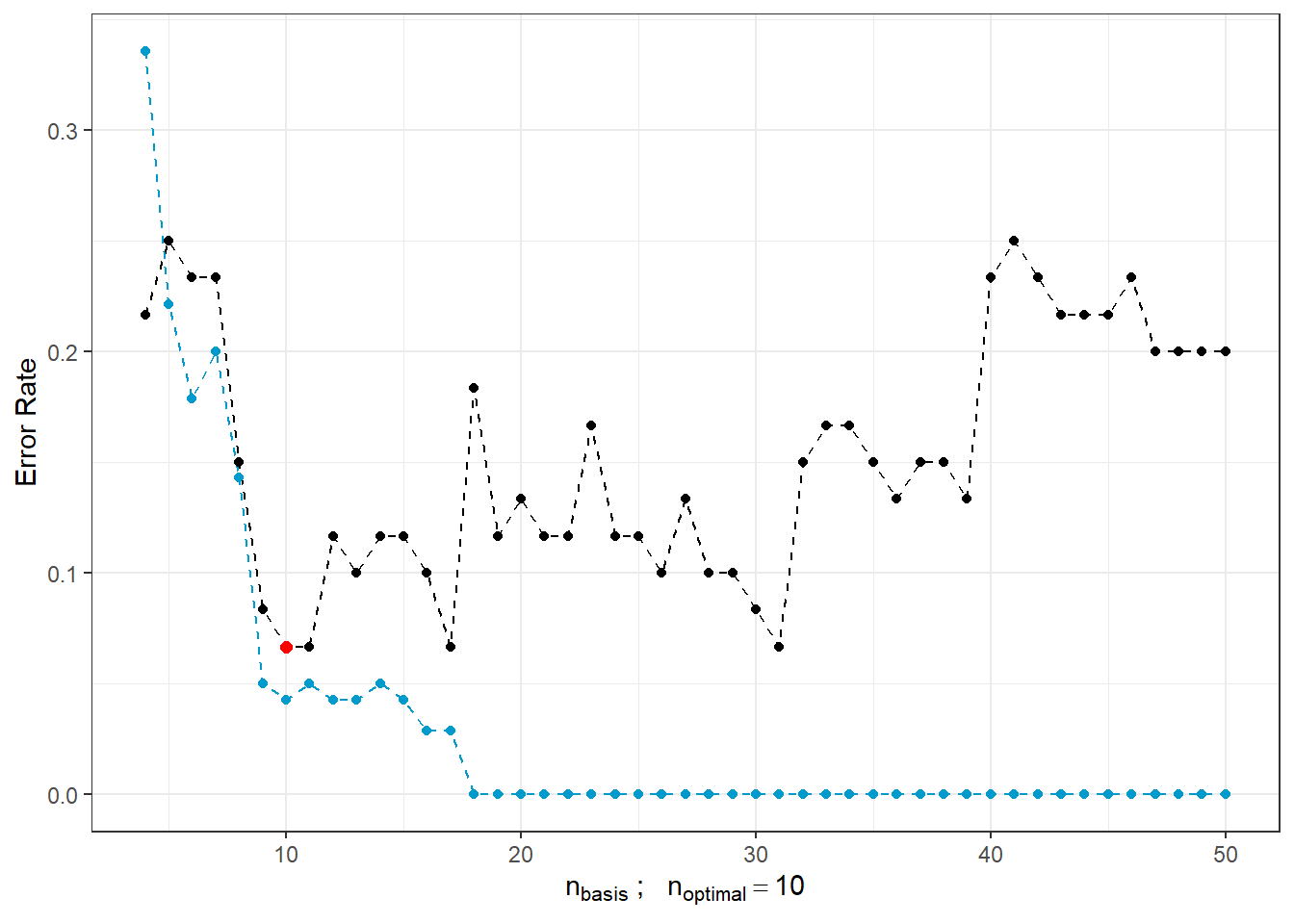

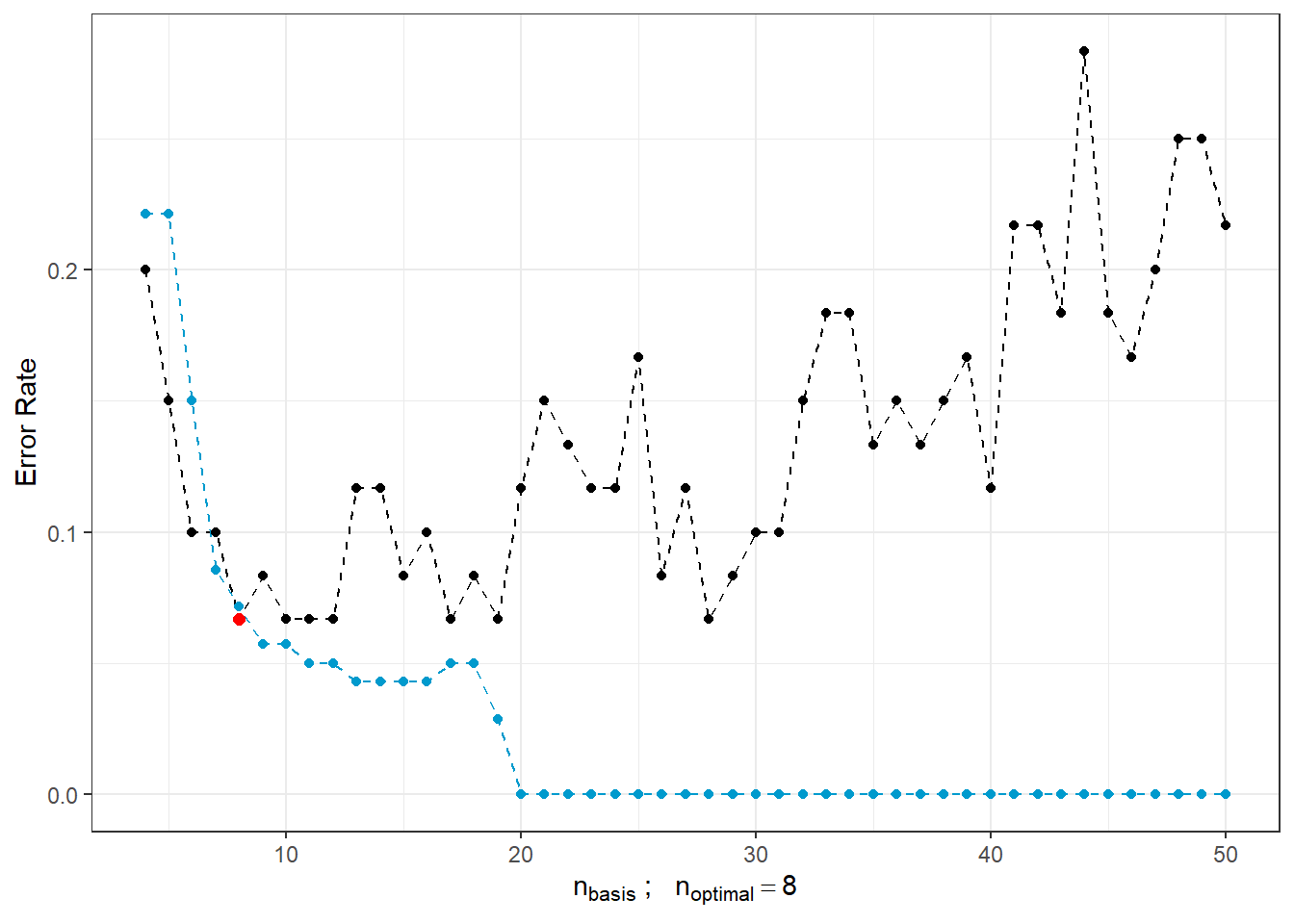

To estimate the parametric function \(\beta(t)\), we need to express it in some basis representation, in our case, a B-spline basis. However, we need to determine a suitable number of basis functions. We could determine this based on the error rate on the training data, but this would lead to a preference for selecting a large number of bases, resulting in overfitting.

Let us illustrate this with the following case. For each number of bases \(n_{basis} \in \{4, 5, \dots, 50\}\), we will train the model on the training data, determine the error rate on the training data, and also calculate the error rate on the test data. Keep in mind that the same data must not be used both to select the number of basis functions and to estimate the test error rate, because the resulting estimate would be optimistically biased.

Code

n.basis.max <- 50

n.basis <- 4:n.basis.max

pred.baz <- matrix(NA, nrow = length(n.basis), ncol = 2,

dimnames = list(n.basis, c('Err.train', 'Err.test')))

for (i in n.basis) {

# basis for betas

basis2 <- create.bspline.basis(rangeval = range(tt), nbasis = i)

# formula

f <- Y ~ x

# basis for x and betas

basis.x <- list("x" = basis1) # smoothed data

basis.b <- list("x" = basis2)

# input data for the model

ldata <- list("df" = dataf, "x" = x.train)

# binomial model ... logistic regression model

model.glm <- fregre.glm(f, family = binomial(), data = ldata,

basis.x = basis.x, basis.b = basis.b)

# accuracy on training data

predictions.train <- predict(model.glm, newx = ldata)

predictions.train <- data.frame(Y.pred = ifelse(predictions.train < 1/2, 0, 1))

accuracy.train <- table(Y.train, predictions.train$Y.pred) |>

prop.table() |> diag() |> sum()

# accuracy on test data

newldata = list("df" = as.data.frame(Y.test), "x" = fdata(X.test))

predictions.test <- predict(model.glm, newx = newldata)

predictions.test <- data.frame(Y.pred = ifelse(predictions.test < 1/2, 0, 1))

accuracy.test <- table(Y.test, predictions.test$Y.pred) |>

prop.table() |> diag() |> sum()

# insert into the matrix

pred.baz[as.character(i), ] <- 1 - c(accuracy.train, accuracy.test)

}

pred.baz <- as.data.frame(pred.baz)

pred.baz$n.basis <- n.basis

Let’s visualize the trends of both training and test error rates in a graph based on the number of basis functions.

Code

n.basis.beta.opt <- pred.baz$n.basis[which.min(pred.baz$Err.test)]

pred.baz |> ggplot(aes(x = n.basis, y = Err.test)) +

geom_line(linetype = 'dashed', colour = 'black') +

geom_line(aes(x = n.basis, y = Err.train), colour = 'deepskyblue3',

linetype = 'dashed', linewidth = 0.5) +

geom_point(size = 1.5) +

geom_point(aes(x = n.basis, y = Err.train), colour = 'deepskyblue3',

size = 1.5) +

geom_point(aes(x = n.basis.beta.opt, y = min(pred.baz$Err.test)),

colour = 'red', size = 2) +

theme_bw() +

labs(x = bquote(paste(n[basis], ' ; ',

n[optimal] == .(n.basis.beta.opt))),

y = 'Error Rate')

## Warning: Use of `pred.baz$Err.test` is discouraged.

## ℹ Use `Err.test` instead.

## Warning in geom_point(aes(x = n.basis.beta.opt, y = min(pred.baz$Err.test)), : All aesthetics have length 1, but the data has 47 rows.

## ℹ Please consider using `annotate()` or provide this layer with data containing

## a single row.

Figure 1.13: Dependence of test and training error rates on the number of basis functions for \(\beta\). The red point represents the optimal number \(n_{optimal}\) chosen as the minimum test error rate, the black line depicts the test error, and the blue dashed line illustrates the training error rate.

We see that as the number of bases for \(\beta(t)\) increases, the training error rate (represented by the blue line) tends to decrease, suggesting that we might choose large values for \(n_{basis}\) based solely on it. In contrast, the test error rate is minimized at \(n_{basis} = 10\), which is far below 50. Beyond that point the test error rate rises as \(n_{basis}\) increases, which indicates overfitting of the model.

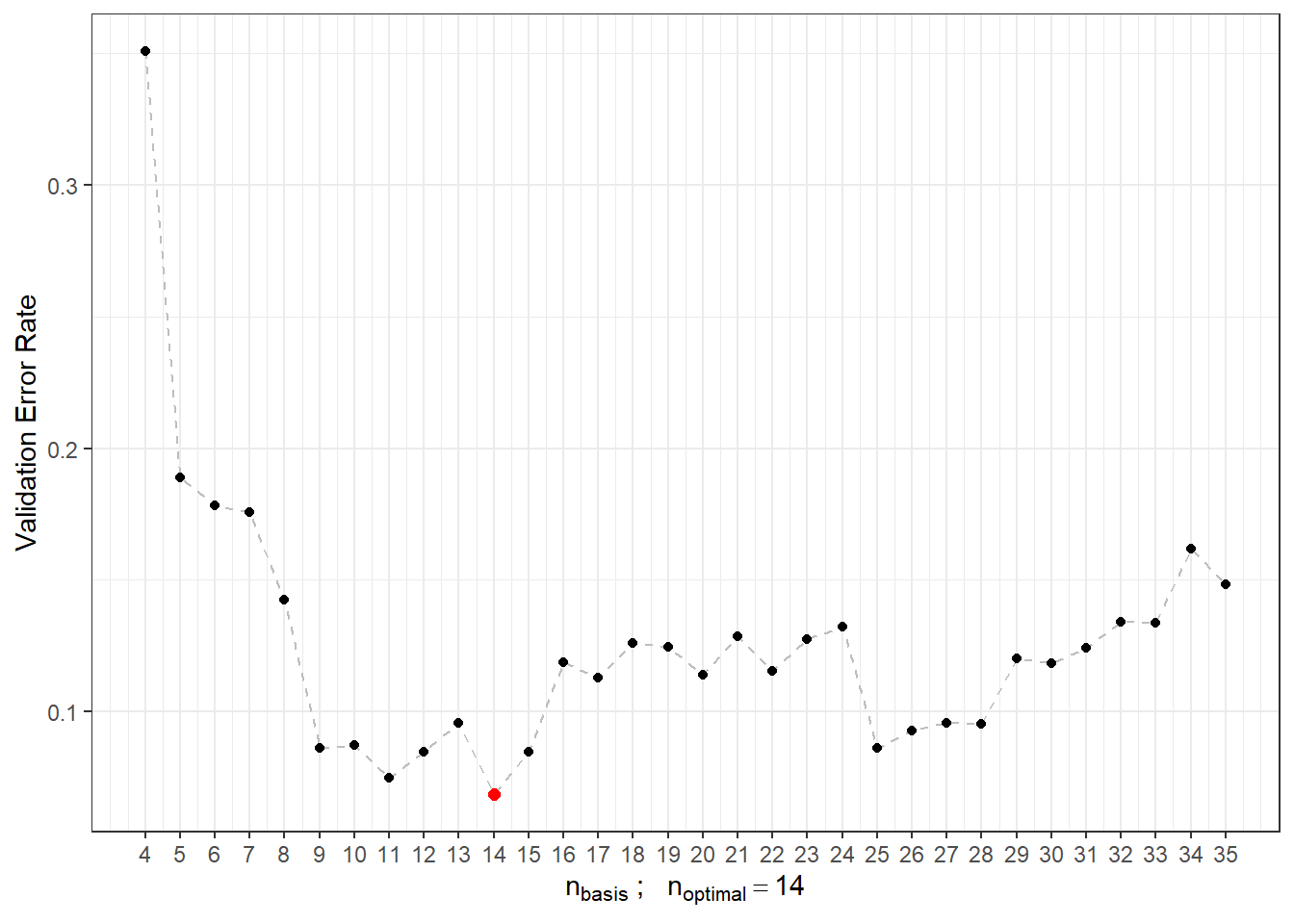

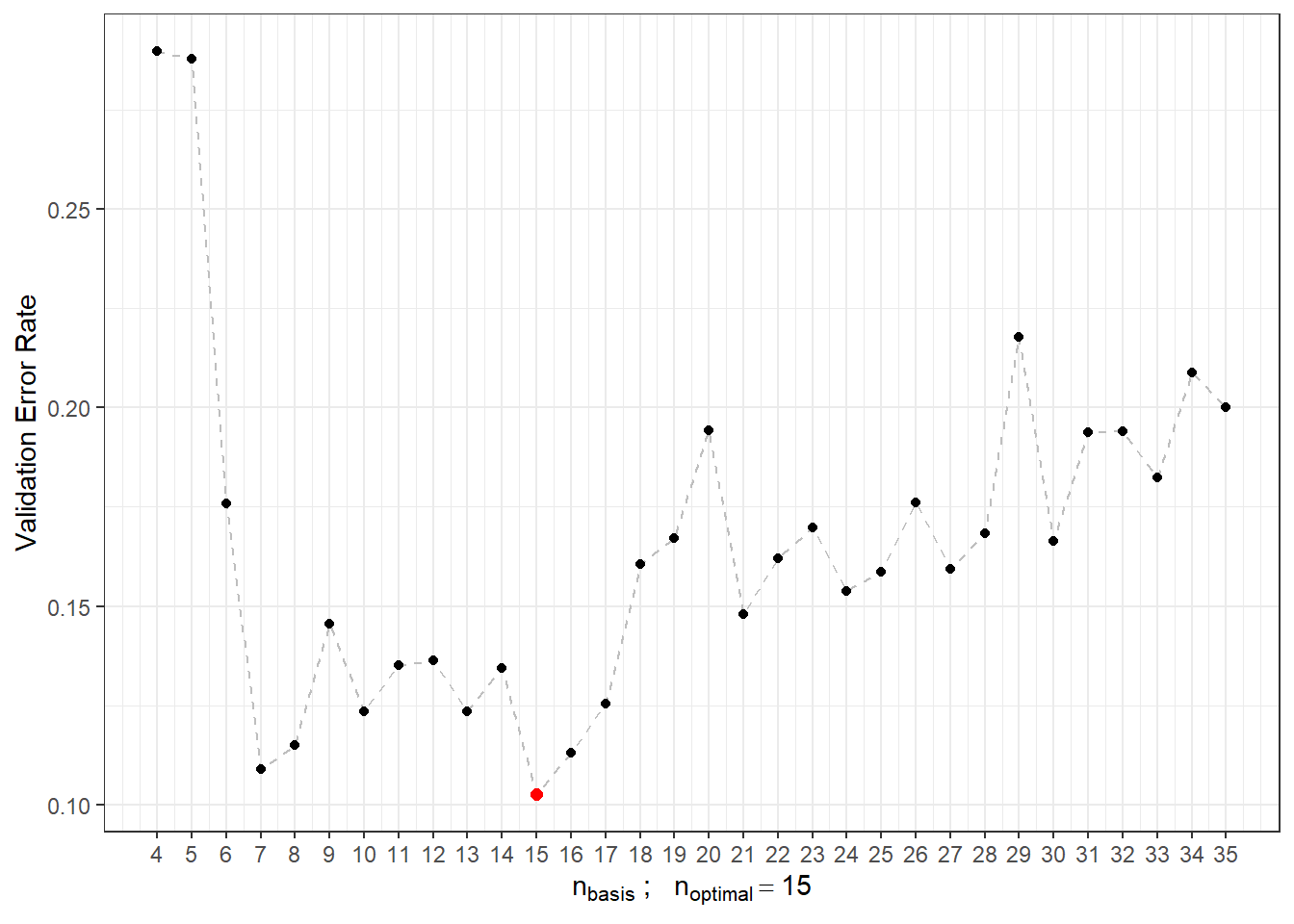

For these reasons, we will use 10-fold cross-validation to determine the optimal number of basis functions for \(\beta(t)\). The maximum number of basis functions considered is 35, as we observed that exceeding this value leads to overfitting.

Code

### 10-fold cross-validation

n.basis.max <- 35

n.basis <- 4:n.basis.max

k_cv <- 10 # k-fold CV

# divide the training data into k parts

folds <- createMultiFolds(X.train$fdnames$reps, k = k_cv, times = 1)

## elements that do not change during the loop

# points at which the functions are evaluated

tt <- x.train[["argvals"]]

rangeval <- range(tt)

# B-spline basis

basis1 <- X.train$basis

# formula

f <- Y ~ x

# basis for x

basis.x <- list("x" = basis1)

# empty matrix to store results

# columns will contain accuracy values for the respective training subset

# rows will contain values for the respective number of bases

CV.results <- matrix(NA, nrow = length(n.basis), ncol = k_cv,

dimnames = list(n.basis, 1:k_cv))

Now that we have everything prepared, we will calculate the error rates for each of the ten subsets of the training set. Subsequently, we will determine the average error and take the argument of the minimum validation error as the optimal \(n\).

Code

for (index in 1:k_cv) {

# define the index set

fold <- folds[[index]]

x.train.cv <- subset(X.train, c(1:length(X.train$fdnames$reps)) %in% fold) |>

fdata()

y.train.cv <- subset(Y.train, c(1:length(X.train$fdnames$reps)) %in% fold) |>

as.numeric()

x.test.cv <- subset(X.train, !c(1:length(X.train$fdnames$reps)) %in% fold) |>

fdata()

y.test.cv <- subset(Y.train, !c(1:length(X.train$fdnames$reps)) %in% fold) |>

as.numeric()

dataf <- as.data.frame(y.train.cv)

colnames(dataf) <- "Y"

for (i in n.basis) {

# basis for betas

basis2 <- create.bspline.basis(rangeval = rangeval, nbasis = i)

basis.b <- list("x" = basis2)

# input data for the model

ldata <- list("df" = dataf, "x" = x.train.cv)

# binomial model ... logistic regression model

model.glm <- fregre.glm(f, family = binomial(), data = ldata,

basis.x = basis.x, basis.b = basis.b)

# accuracy on the validation subset

newldata = list("df" = as.data.frame(y.test.cv), "x" = x.test.cv)

predictions.valid <- predict(model.glm, newx = newldata)

predictions.valid <- data.frame(Y.pred = ifelse(predictions.valid < 1/2, 0, 1))

accuracy.valid <- table(y.test.cv, predictions.valid$Y.pred) |>

prop.table() |> diag() |> sum()

# insert into the matrix

CV.results[as.character(i), as.character(index)] <- accuracy.valid

}

}

# calculate average accuracies for each n across folds

CV.results <- apply(CV.results, 1, mean)

n.basis.opt <- n.basis[which.max(CV.results)]

presnost.opt.cv <- max(CV.results)

# CV.results

Let’s plot the validation error rates, highlighting the optimal value of \(n_{optimal}\), which is 14, with a validation error rate of 0.0684.

Code

CV.results <- data.frame(n.basis = n.basis, CV = CV.results)

CV.results |> ggplot(aes(x = n.basis, y = 1 - CV)) +

geom_line(linetype = 'dashed', colour = 'grey') +

geom_point(size = 1.5) +

geom_point(aes(x = n.basis.opt, y = 1 - presnost.opt.cv), colour = 'red', size = 2) +

theme_bw() +

labs(x = bquote(paste(n[basis], ' ; ',

n[optimal] == .(n.basis.opt))),

y = 'Validation Error Rate') +

scale_x_continuous(breaks = n.basis)

## Warning in geom_point(aes(x = n.basis.opt, y = 1 - presnost.opt.cv), colour = "red", : All aesthetics have length 1, but the data has 32 rows.

## ℹ Please consider using `annotate()` or provide this layer with data containing

## a single row.

Figure 1.15: Dependence of validation error on the value of \(n_{basis}\), i.e., on the number of bases.
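The cross-validation procedure used above can also be sketched without fda.usc or caret. The following base-R example is only an illustration under stated assumptions: the toy curves, the candidate set `nb_set`, the helper `scores()` and the random fold labels are hypothetical, and the functional model is approximated by logistic regression on numerically integrated B-spline scores.

```r
library(splines)  # B-spline basis; ships with the standard R distribution

set.seed(123)
tt <- seq(0, 6, length.out = 101)
n  <- 200
Y  <- rep(c(0, 1), each = n / 2)
# hypothetical toy curves: class 1 has, on average, a steeper slope
slope <- rnorm(n, mean = 0.2 + 0.1 * Y, sd = 0.1)
X <- outer(slope, tt) + matrix(rnorm(n * length(tt), sd = 0.5), nrow = n)

# trapezoidal quadrature weights on the equidistant grid
h <- tt[2] - tt[1]
w <- c(h / 2, rep(h, length(tt) - 2), h / 2)

# integrals of x_i(t) * psi_k(t) for a B-spline basis with nb functions
scores <- function(X, nb) X %*% (bs(tt, df = nb, intercept = TRUE) * w)

k_cv   <- 10
nb_set <- 4:8                                          # candidate basis sizes
fold   <- sample(rep(seq_len(k_cv), length.out = n))   # random fold labels

# average validation error over the k folds for each candidate size
cv_err <- sapply(nb_set, function(nb) {
  d <- data.frame(y = Y, scores(X, nb))
  mean(sapply(seq_len(k_cv), function(k) {
    train <- fold != k
    fit <- glm(y ~ ., data = d[train, ], family = binomial())
    p <- predict(fit, newdata = d[!train, ], type = "response")
    mean((p >= 0.5) != (d$y[!train] == 1))
  }))
})
names(cv_err) <- nb_set

# the basis size with the smallest average validation error
nb_opt <- nb_set[which.min(cv_err)]
```

Every observation is used for validation exactly once, so the test set is never touched while the number of basis functions is being chosen.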

We can now define the final model using functional logistic regression, choosing the B-spline basis for \(\beta(t)\) with 14 bases.

Code

# optimal model

basis2 <- create.bspline.basis(rangeval = range(tt), nbasis = n.basis.opt)

f <- Y ~ x

# bases for x and betas

basis.x <- list("x" = basis1)

basis.b <- list("x" = basis2)

# input data for the model

dataf <- as.data.frame(y.train)

colnames(dataf) <- "Y"

ldata <- list("df" = dataf, "x" = x.train)

# binomial model ... logistic regression model

model.glm <- fregre.glm(f, family = binomial(), data = ldata,

basis.x = basis.x, basis.b = basis.b)

# accuracy on training data

predictions.train <- predict(model.glm, newx = ldata)

predictions.train <- data.frame(Y.pred = ifelse(predictions.train < 1/2, 0, 1))

presnost.train <- table(Y.train, predictions.train$Y.pred) |>

prop.table() |> diag() |> sum()

# accuracy on test data

newldata = list("df" = as.data.frame(Y.test), "x" = fdata(X.test))

predictions.test <- predict(model.glm, newx = newldata)

predictions.test <- data.frame(Y.pred = ifelse(predictions.test < 1/2, 0, 1))

presnost.test <- table(Y.test, predictions.test$Y.pred) |>

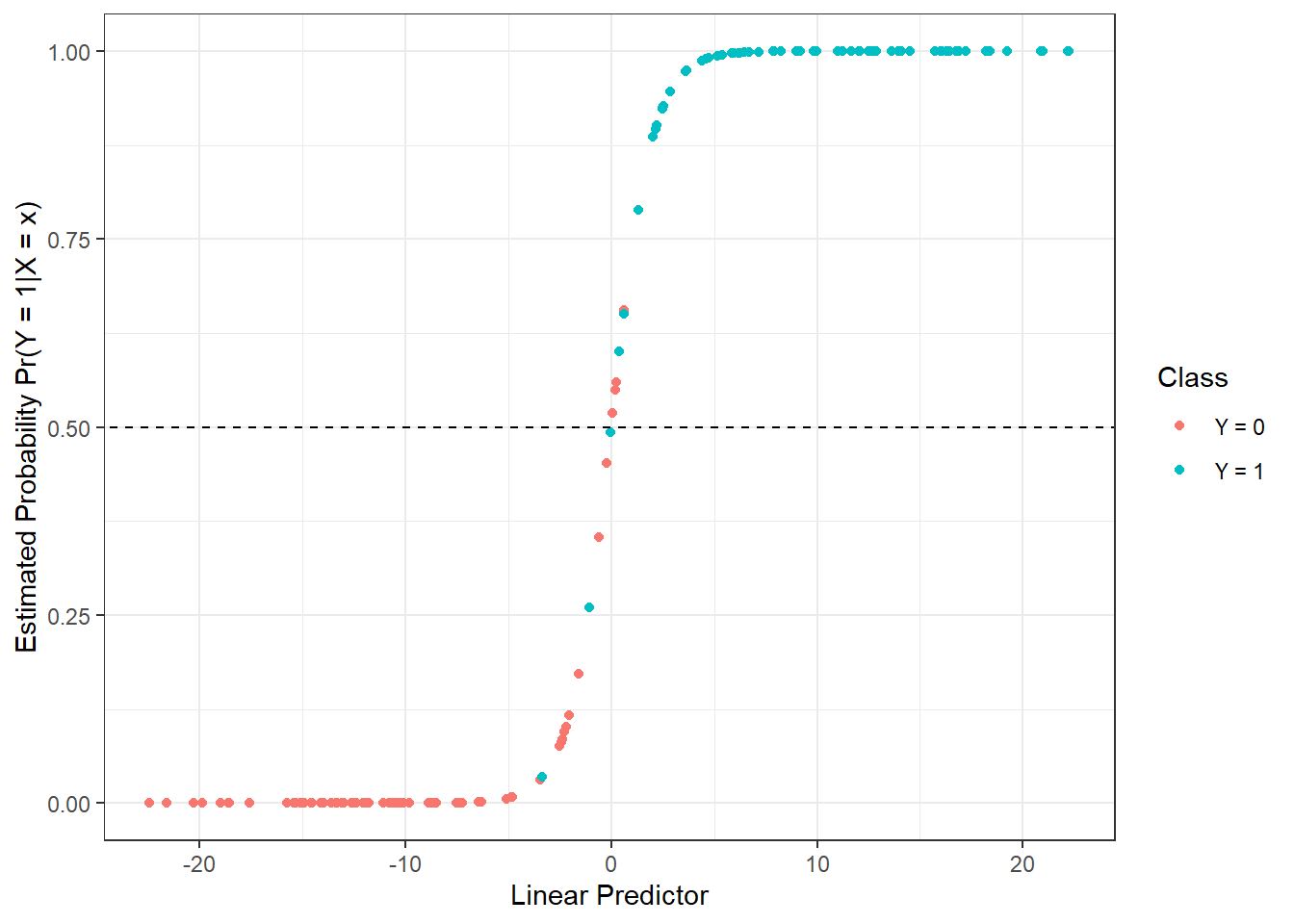

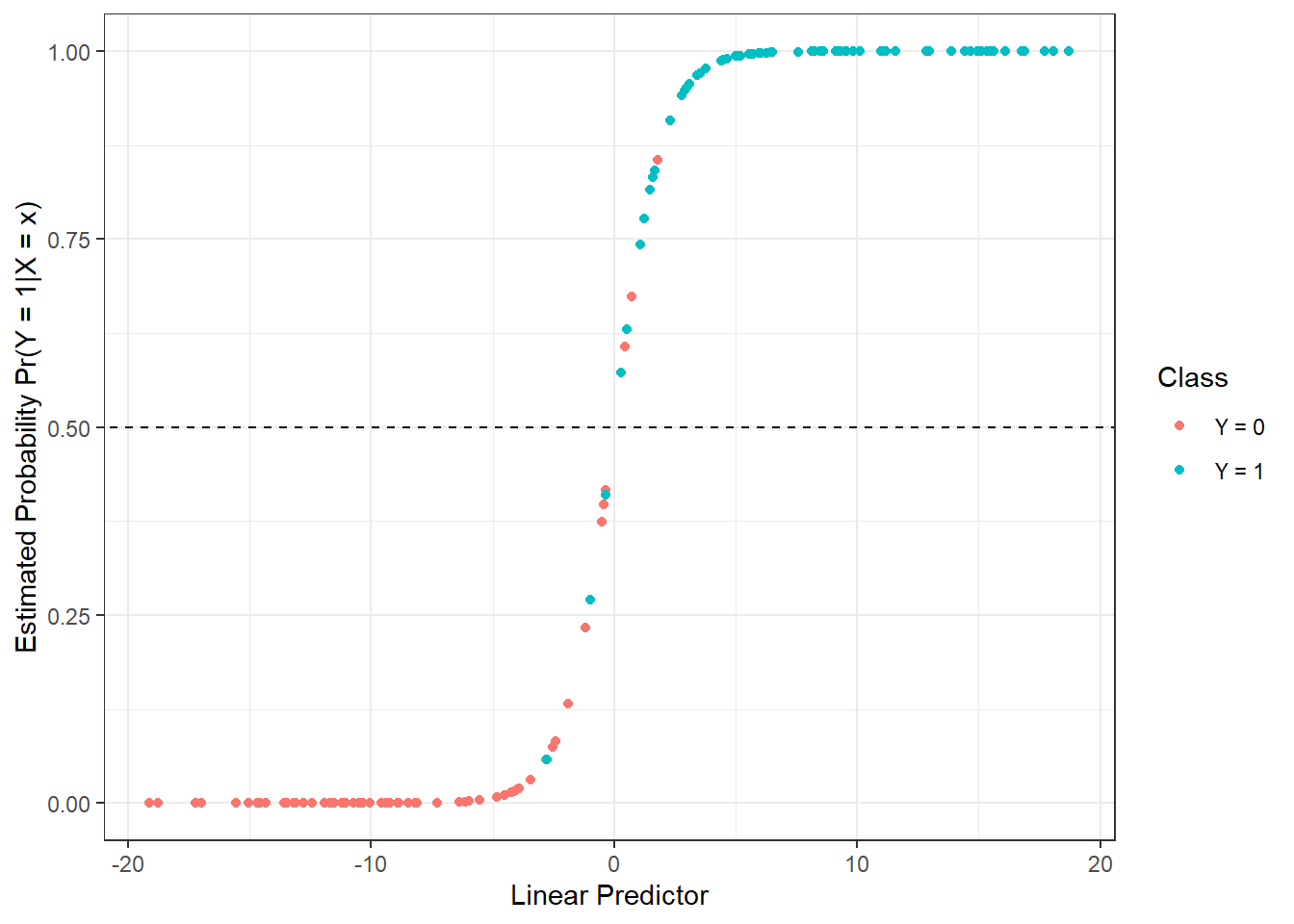

prop.table() |> diag() |> sum()We have calculated the training error rate (which is 5 %) and the test error rate (which is 11.67 %). For better visualization, we can also plot the estimated probabilities of belonging to the classification class \(Y = 1\) on the training data against the values of the linear predictor.

Code

data.frame(

linear.predictor = model.glm$linear.predictors,

response = model.glm$fitted.values,

Y = factor(y.train)

) |> ggplot(aes(x = linear.predictor, y = response, colour = Y)) +

geom_point(size = 1.5) +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

geom_abline(aes(slope = 0, intercept = 0.5), linetype = 'dashed') +

theme_bw() +

labs(x = 'Linear Predictor',

y = 'Estimated Probability Pr(Y = 1|X = x)',

colour = 'Class')

Figure 5.5: Dependence of estimated probabilities on the values of the linear predictor. Points are color-coded according to their classification class.
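The relationship shown in the figure is the inverse logit. As a small illustration (the grid of linear predictor values `eta` is hypothetical), base R's `plogis()` maps the linear predictor to a probability, and thresholding the probability at 1/2 is the same as thresholding the linear predictor at 0.

```r
# hypothetical grid of linear predictor values
eta <- seq(-6, 6, by = 0.5)

# inverse logit: exp(eta) / (1 + exp(eta))
prob <- plogis(eta)

# same classification rule as in the code above
class_hat <- ifelse(prob < 1/2, 0, 1)

# the logit link recovers the linear predictor
eta_back <- qlogis(prob)
```

This is why the dashed horizontal line at 0.5 in the figure corresponds to a vertical cut at a linear predictor value of 0.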

For informational purposes, we can also display the progression of the estimated parametric function \(\beta(t)\).

Code

t.seq <- seq(0, 6, length = 1001)

beta.seq <- eval.fd(evalarg = t.seq, fdobj = model.glm$beta.l$x)

data.frame(t = t.seq, beta = beta.seq) |>

ggplot(aes(t, beta)) +

geom_line() +

theme_bw() +

labs(x = 'Time',

y = expression(widehat(beta)(t))) +

theme(panel.grid.major = element_blank(), panel.grid.minor = element_blank()) +

geom_abline(aes(slope = 0, intercept = 0), linetype = 'dashed',

linewidth = 0.5, colour = 'grey')

![Plot of the estimated parametric function $\beta(t), t \in [0, 6]$.](05-Simulace_deriv2_files/figure-html/unnamed-chunk-49-1.png)

Figure 5.6: Plot of the estimated parametric function \(\beta(t), t \in [0, 6]\).

Finally, we will add the results to the summary table.

5.1.3.4.2 Logistic Regression with Principal Component Analysis

To construct this classifier, we need to perform functional principal component analysis, determine the appropriate number of components, and calculate the score values for the test data. We have already completed this in the linear discriminant analysis section, so we will use these results in the following section.

We can directly construct the logistic regression model using the glm(..., family = binomial) function.

Code

# model

clf.LR <- glm(Y ~ ., data = data.PCA.train, family = binomial)

# accuracy on training data

predictions.train <- predict(clf.LR, newdata = data.PCA.train, type = 'response')

predictions.train <- ifelse(predictions.train > 0.5, 1, 0)

presnost.train <- table(data.PCA.train$Y, predictions.train) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.LR, newdata = data.PCA.test, type = 'response')

predictions.test <- ifelse(predictions.test > 0.5, 1, 0)

presnost.test <- table(data.PCA.test$Y, predictions.test) |>

prop.table() |> diag() |> sum()

We have calculated the error rate of the classifier on the training data (31.43 %) and on the test data (23.33 %).

For graphical representation of the method, we can plot the decision boundary in the scores of the first two principal components. We will compute this boundary on a dense grid of points and display it using the geom_contour() function, just as we did in the LDA and QDA cases.

Code

nd <- nd |> mutate(prd = as.numeric(predict(clf.LR, newdata = nd,

type = 'response')))

nd$prd <- ifelse(nd$prd > 0.5, 1, 0)

data.PCA.train |> ggplot(aes(x = V1, y = V2, colour = Y)) +

geom_point(size = 1.5) +

labs(x = paste('1st Principal Component (explained variance',

round(100 * data.PCA$varprop[1], 2), '%)'),

y = paste('2nd Principal Component (',

round(100 * data.PCA$varprop[2], 2), '%)'),

colour = 'Group') +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

theme_bw() +

geom_contour(data = nd, aes(x = V1, y = V2, z = prd), colour = 'black')

Figure 5.7: Scores of the first two principal components, color-coded according to classification class. The decision boundary (a line in the plane of the first two principal components) between classes is indicated in black, constructed using logistic regression.

Note that the decision boundary between the classification classes is now a line, similar to the case with LDA.
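The linearity of the boundary can be checked directly from the fitted coefficients. The sketch below is a simplified illustration with two simulated score variables; the data and the true coefficients are hypothetical, whereas the model above may use more components. With two predictors, the estimated probability equals 0.5 exactly where \(b_0 + b_1 V_1 + b_2 V_2 = 0\), which is a line in the \((V_1, V_2)\) plane.

```r
set.seed(7)
n  <- 200
V1 <- rnorm(n)                                   # hypothetical 1st score
V2 <- rnorm(n)                                   # hypothetical 2nd score
Y  <- rbinom(n, 1, plogis(0.5 + 1.5 * V1 - 1.0 * V2))

fit <- glm(Y ~ V1 + V2, family = binomial())
b   <- unname(coef(fit))                         # b[1] intercept, b[2], b[3] slopes

# boundary: b0 + b1 * V1 + b2 * V2 = 0  =>  V2 = -(b0 + b1 * V1) / b2
v1 <- seq(-2, 2, length.out = 5)
v2 <- -(b[1] + b[2] * v1) / b[3]

# predicted probabilities at points on this line
p_on_line <- predict(fit, newdata = data.frame(V1 = v1, V2 = v2),
                     type = "response")
```

All values in `p_on_line` equal 0.5 up to rounding error, which confirms that the contour drawn by geom_contour() is a straight line.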

Finally, we will add the error rates to the summary table.

5.1.3.5 Decision Trees

In this section, we will look at a very different approach to constructing a classifier compared to methods such as LDA or logistic regression. Decision trees are a very popular tool for classification; however, like some of the previous methods, they are not directly designed for functional data. There are, however, procedures to convert functional objects into multidimensional ones, allowing us to apply decision tree algorithms. We can consider the following approaches:

- An algorithm built on basis coefficients,

- Utilizing principal component scores,

- Discretizing the interval and evaluating the function only on a finite grid of points.

We will first focus on discretizing the interval and then compare the results with the other two approaches to constructing decision trees.

5.1.3.5.1 Interval Discretization

First, we need to define points from the interval \(I = [0, 6]\), where we will evaluate the functions. Next, we will create an object where the rows represent the individual (discretized) functions and the columns represent time. Finally, we will add a column \(Y\) containing information about the classification class and repeat the same for the test data.

Code

# sequence of points at which we will evaluate the functions

t.seq <- seq(0, 6, length = 101)

grid.data <- eval.fd(fdobj = X.train, evalarg = t.seq)

grid.data <- as.data.frame(t(grid.data)) # transpose for functions in rows

grid.data$Y <- Y.train |> factor()

grid.data.test <- eval.fd(fdobj = X.test, evalarg = t.seq)

grid.data.test <- as.data.frame(t(grid.data.test))

grid.data.test$Y <- Y.test |> factor()

Now we can construct a decision tree where all times from the vector t.seq will serve as predictors. This classification method is not susceptible to multicollinearity, so we do not need to worry about it. We will choose accuracy as the metric.

Code

# model construction

clf.tree <- train(Y ~ ., data = grid.data,

method = "rpart",

trControl = trainControl(method = "CV", number = 10),

metric = "Accuracy")

# accuracy on training data

predictions.train <- predict(clf.tree, newdata = grid.data)

presnost.train <- table(Y.train, predictions.train) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.tree, newdata = grid.data.test)

presnost.test <- table(Y.test, predictions.test) |>

prop.table() |> diag() |> sum()

The error rate of the classifier on the test data is thus 18.33 %, and on the training data 26.43 %.

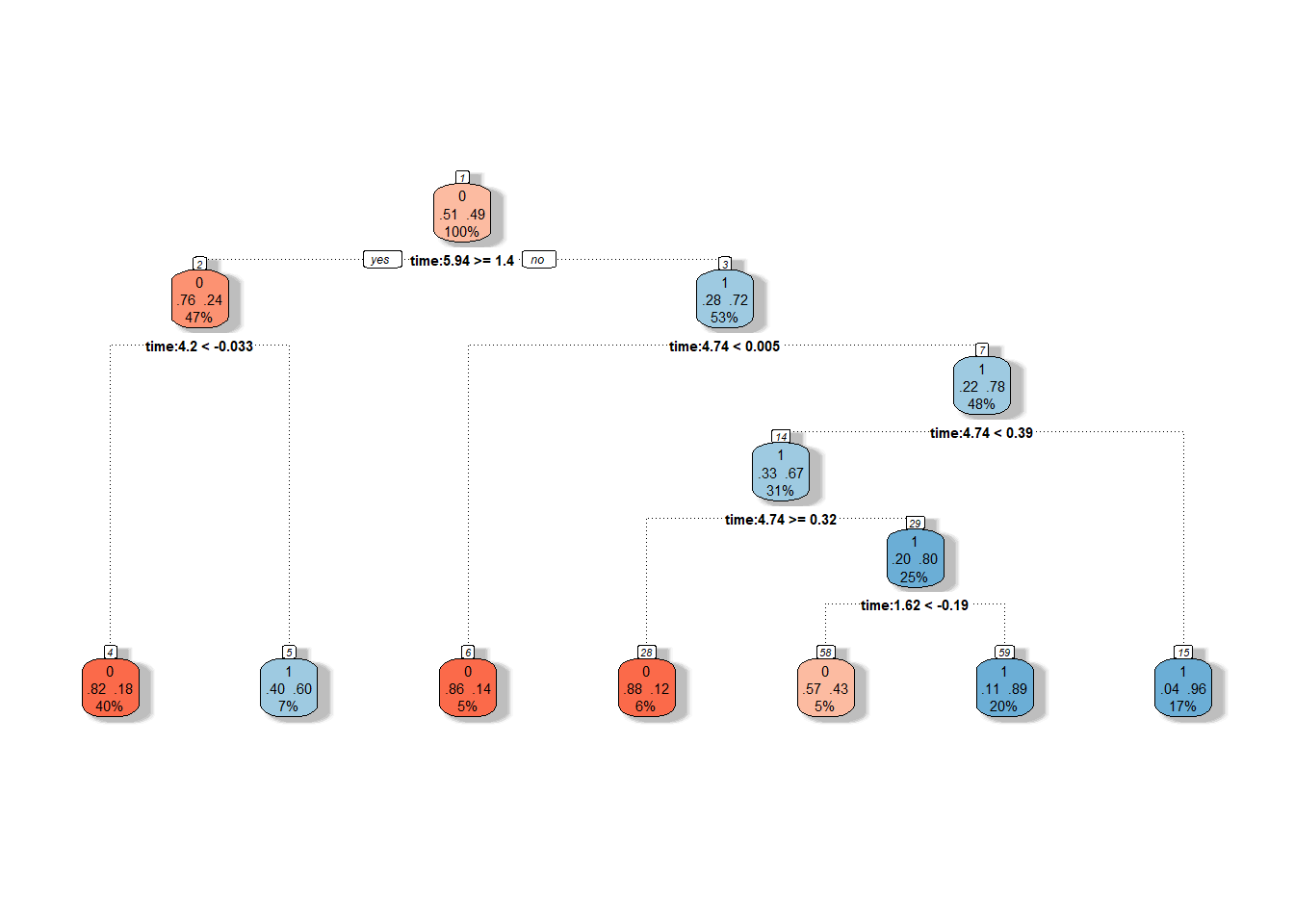

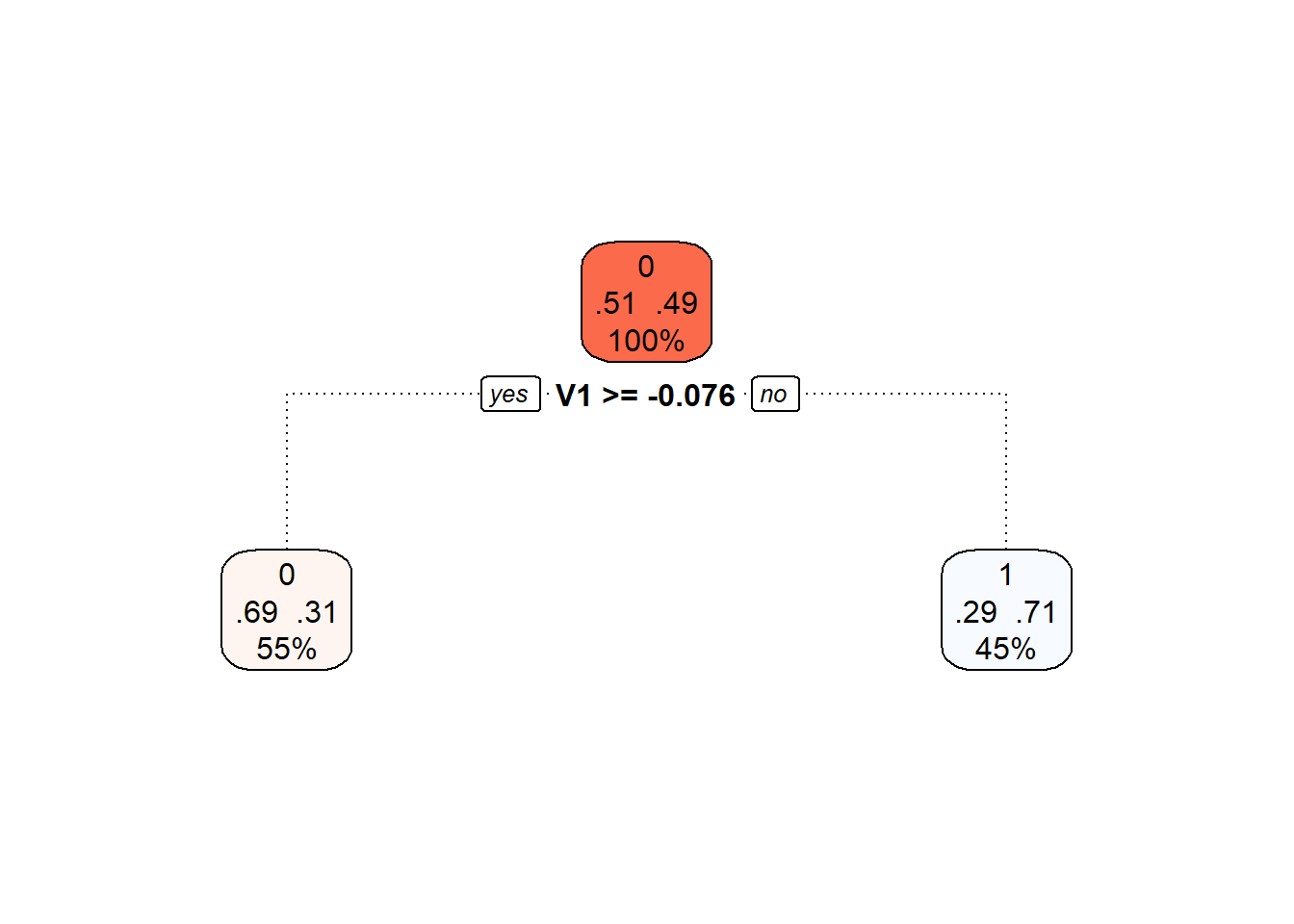

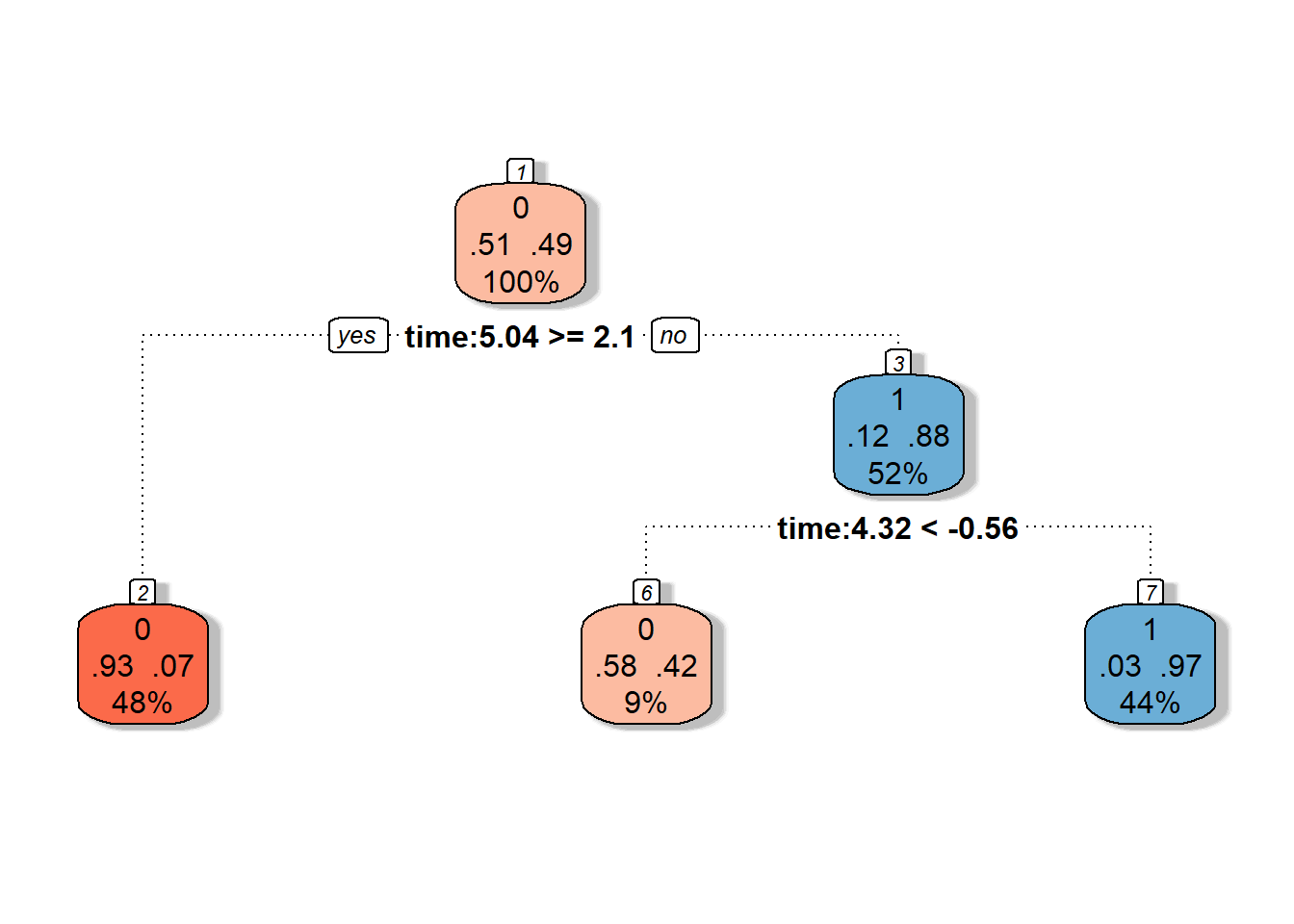

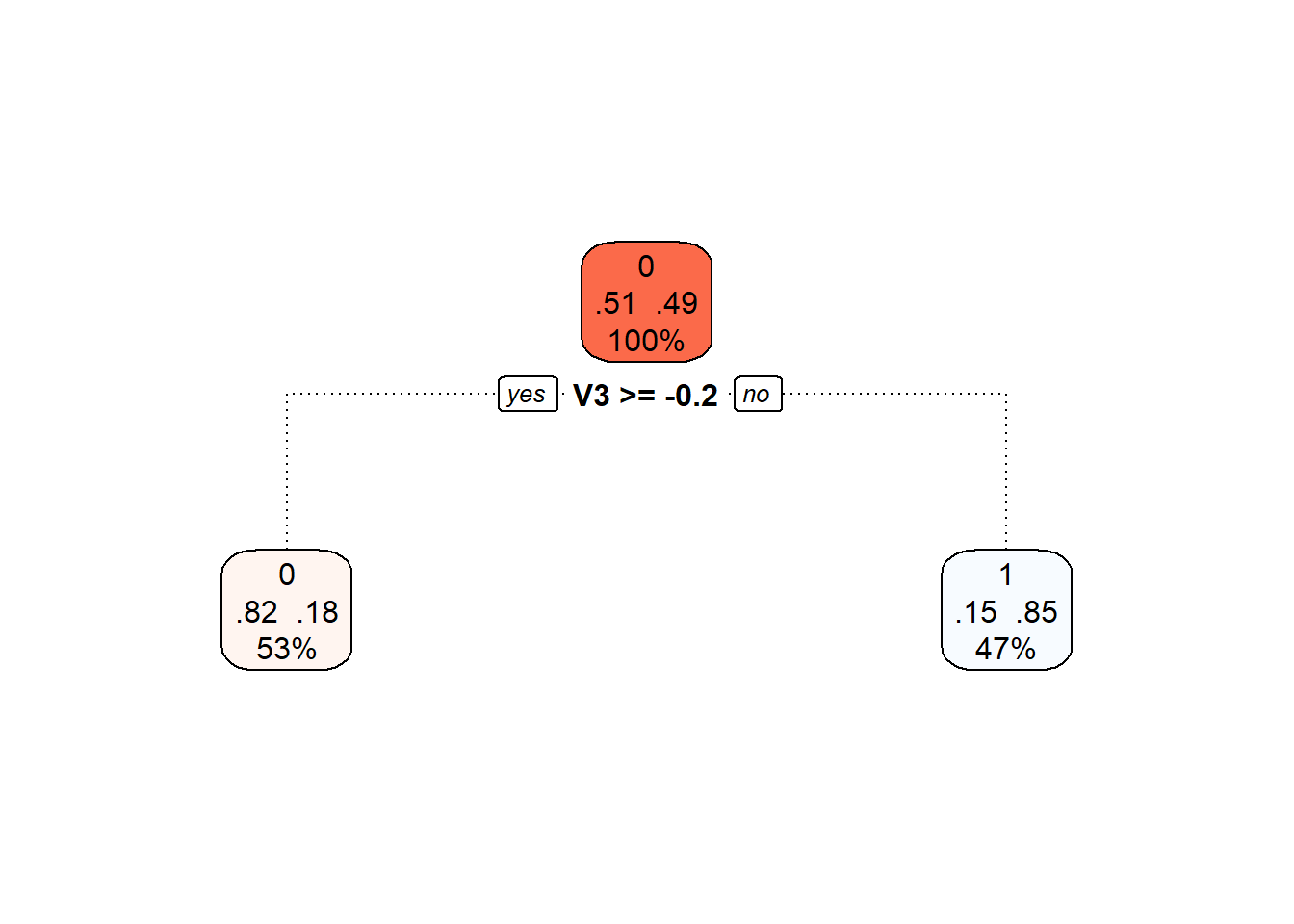

We can visualize the decision tree graphically using the fancyRpartPlot() function. We will set the colors of the nodes to reflect the previous color differentiation. This is an unpruned tree.

Code

Figure 1.18: Graphical representation of the unpruned decision tree. Blue shades represent nodes belonging to classification class 1, and red shades represent class 0.

We can also plot the final pruned decision tree.

Code

Figure 5.8: Final pruned decision tree.

Finally, we will again add the training and test error rates to the summary table.

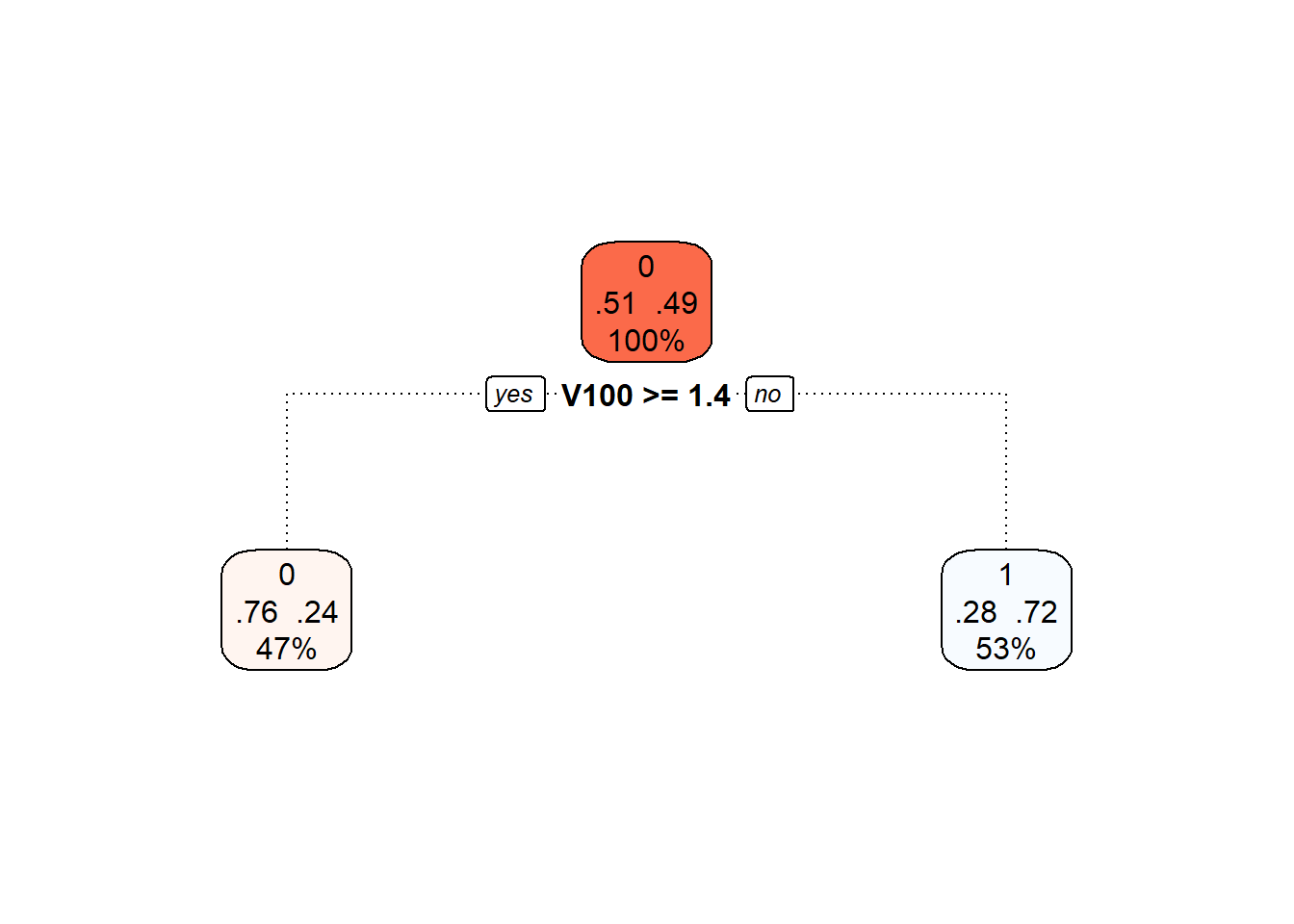

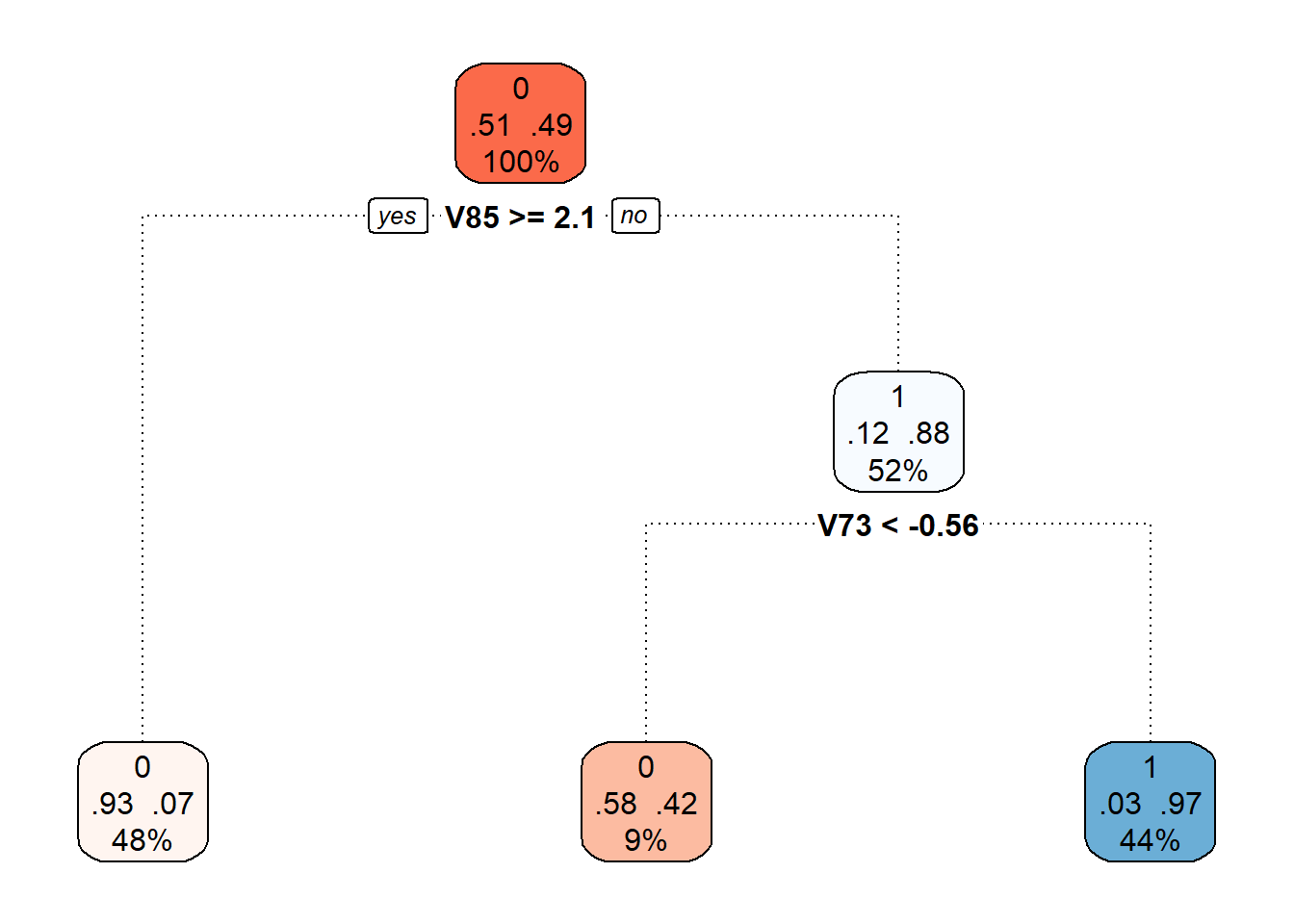

5.1.3.5.2 Principal Component Scores

Another option for constructing a decision tree is to use principal component scores. Since we have already calculated the scores for the previous classification methods, we will utilize this knowledge and construct a decision tree based on the scores of the first 3 principal components.

Code

# model construction

clf.tree.PCA <- train(Y ~ ., data = data.PCA.train,

method = "rpart",

trControl = trainControl(method = "CV", number = 10),

metric = "Accuracy")

# accuracy on training data

predictions.train <- predict(clf.tree.PCA, newdata = data.PCA.train)

presnost.train <- table(Y.train, predictions.train) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.tree.PCA, newdata = data.PCA.test)

presnost.test <- table(Y.test, predictions.test) |>

prop.table() |> diag() |> sum()

The error rate of the decision tree on the test data is thus 23.33 %, and on the training data 30 %.

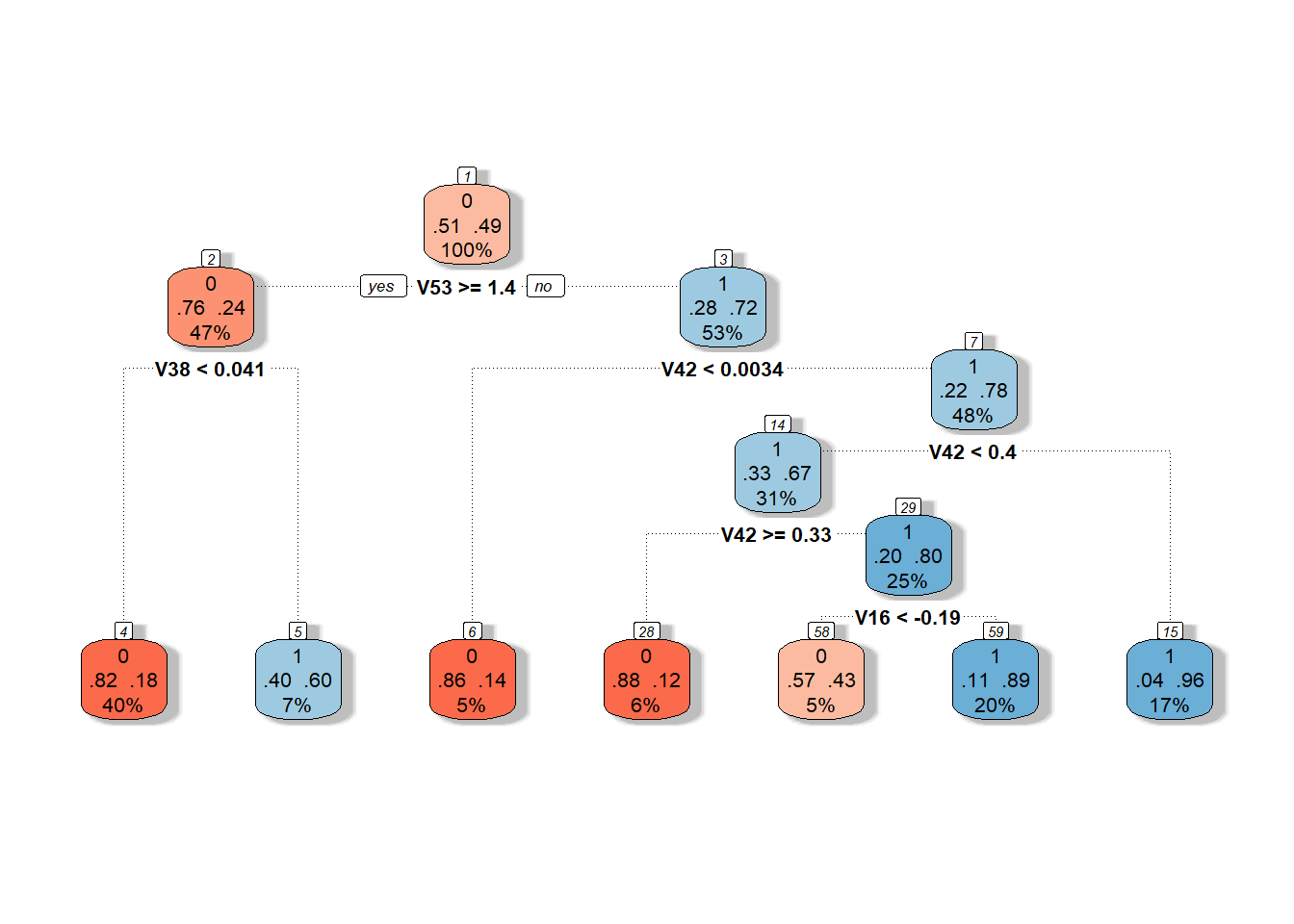

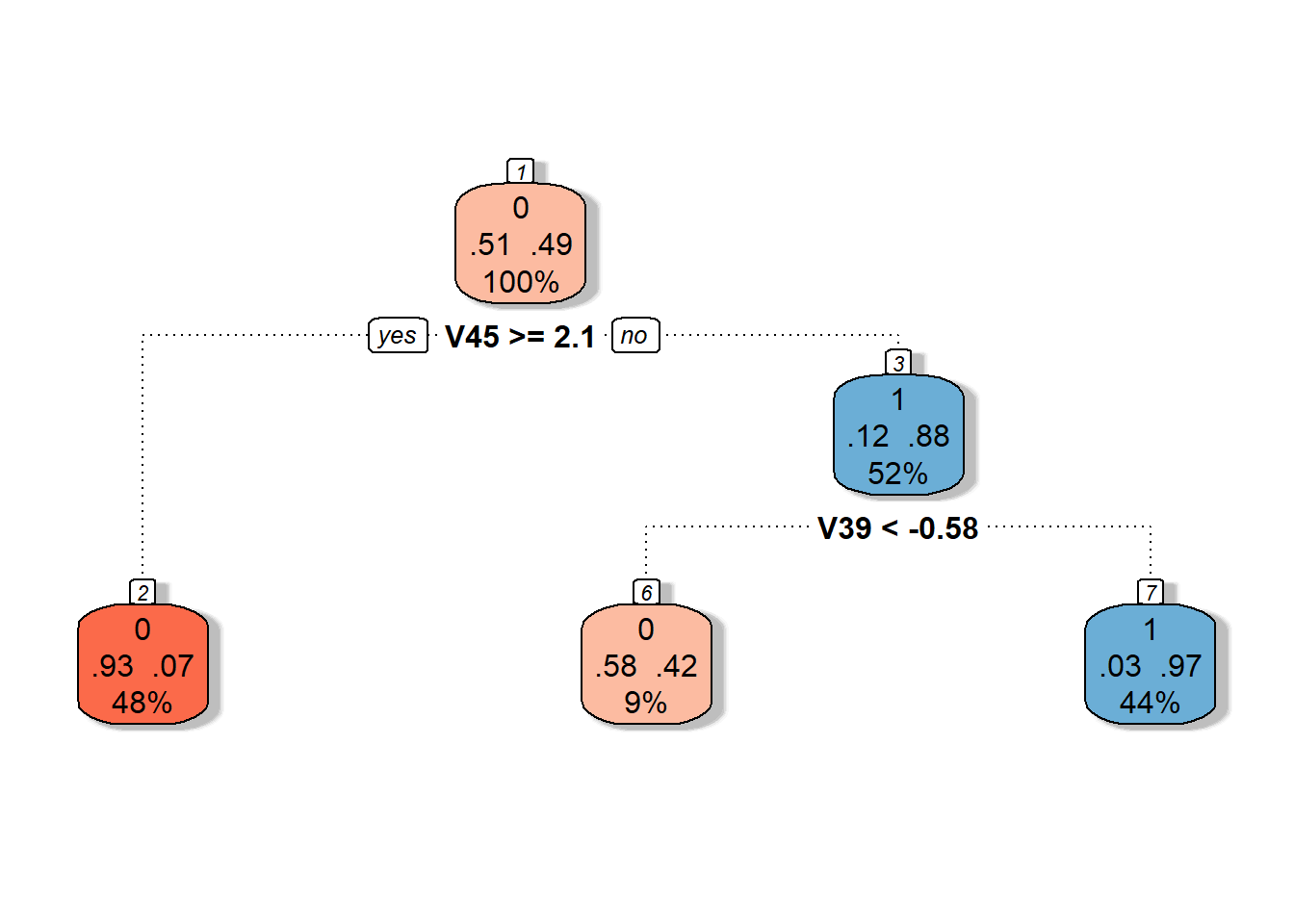

We can visualize the decision tree constructed on the principal component scores using the fancyRpartPlot() function. We will set the colors of the nodes to reflect the previous color differentiation. This is an unpruned tree.

Code

Figure 1.20: Graphical representation of the unpruned decision tree constructed on principal component scores. Blue shades represent nodes belonging to classification class 1, and red shades represent class 0.

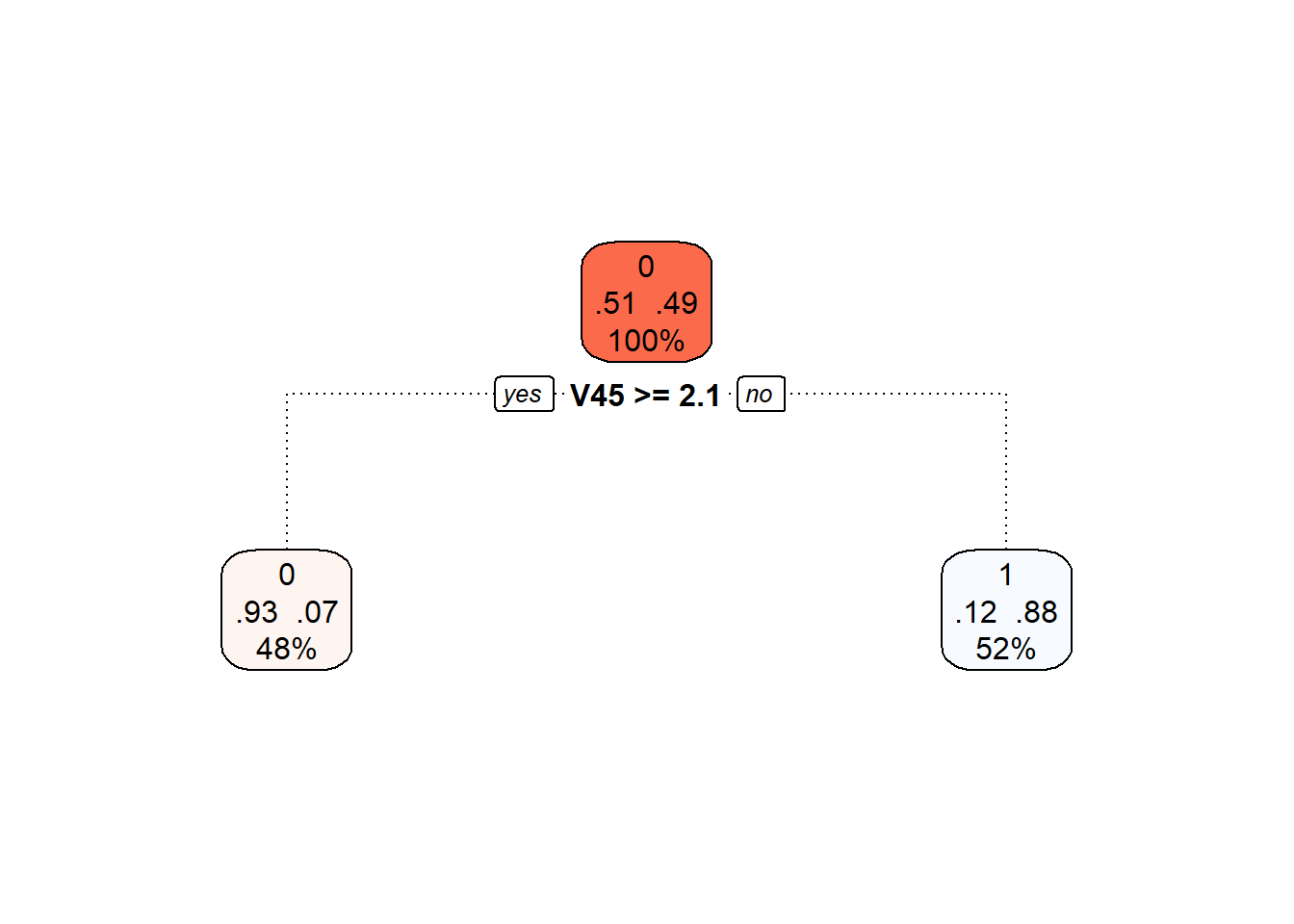

We can also plot the final pruned decision tree.

Code

Figure 3.1: Final pruned decision tree.

Finally, we will again add the training and test error rates to the summary table.

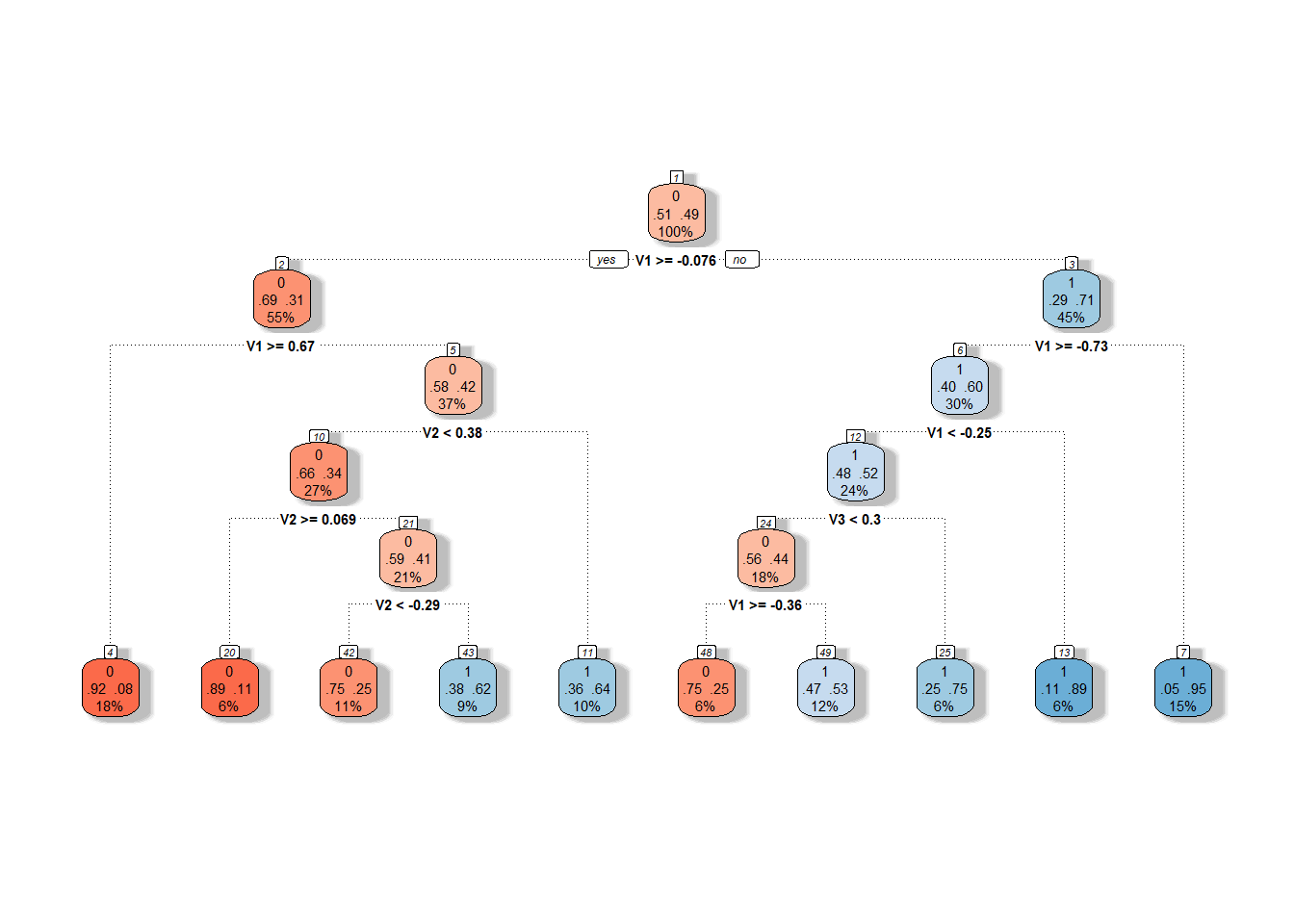

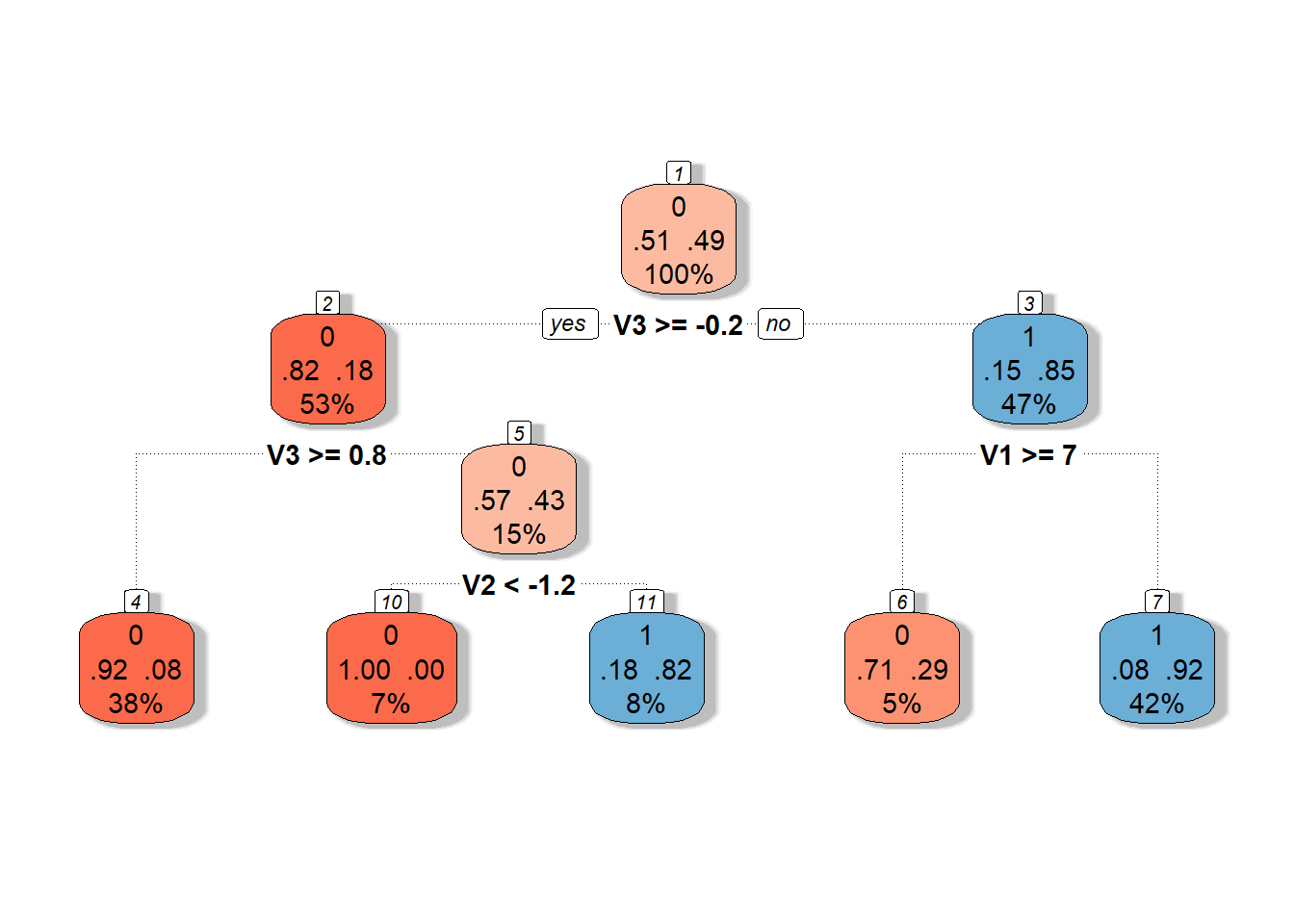

5.1.3.5.3 Basis Coefficients

The final option we will utilize for constructing a decision tree is to use coefficients in the representation of functions in the B-spline basis.

First, let’s define the necessary datasets with the coefficients.

Code

Now we can construct the classifier.

Code

# model construction

clf.tree.Bbasis <- train(Y ~ ., data = data.Bbasis.train,

method = "rpart",

trControl = trainControl(method = "CV", number = 10),

metric = "Accuracy")

# accuracy on training data

predictions.train <- predict(clf.tree.Bbasis, newdata = data.Bbasis.train)

presnost.train <- table(Y.train, predictions.train) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.tree.Bbasis, newdata = data.Bbasis.test)

presnost.test <- table(Y.test, predictions.test) |>

prop.table() |> diag() |> sum()

The error rate of the decision tree on the training data is thus 26.43 %, and on the test data 18.33 %.

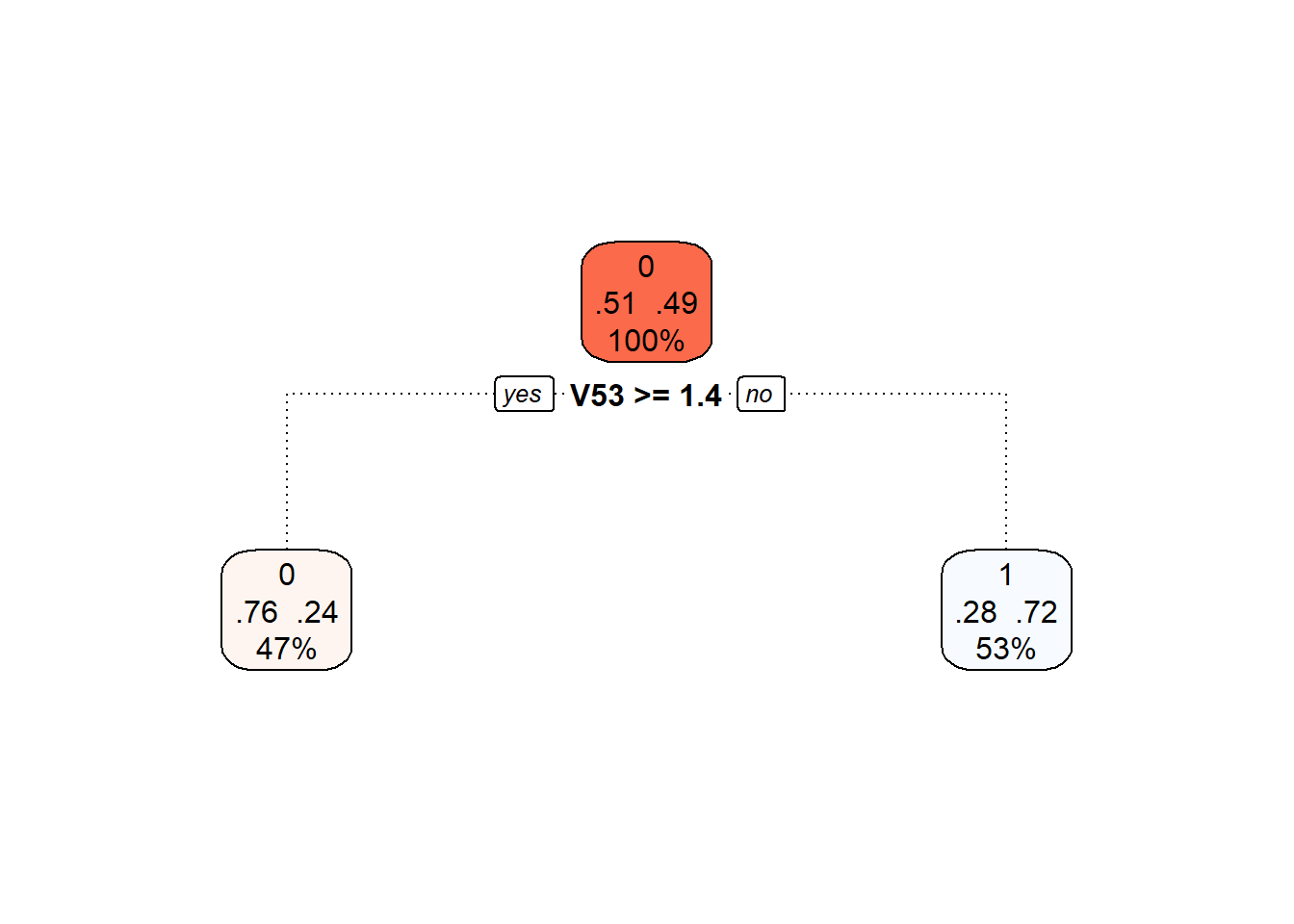

We can visualize the decision tree constructed on the B-spline coefficient representation using the fancyRpartPlot() function. We will set the colors of the nodes to reflect the previous color differentiation. This is an unpruned tree.

Code

Figure 1.22: Graphical representation of the unpruned decision tree constructed on basis coefficients. Blue shades represent nodes belonging to classification class 1, and red shades represent class 0.

We can also plot the final pruned decision tree.

Code

Figure 5.9: Final pruned decision tree.

Finally, we will again add the training and test error rates to the summary table.

5.1.3.6 Random Forests

The classifier constructed using the random forests method consists of building several individual decision trees, which are then combined to create a common classifier (via “voting”).
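The voting idea can be illustrated with a deliberately simplified base-R sketch. It is not the randomForest algorithm: each "tree" here is a single-split stump, the data are simulated, and the names `fit_stump`, `predict_stump` and `mtry` are hypothetical helpers. The two ingredients shared with random forests are that each stump is fitted on a bootstrap sample using a random subset of predictors, and that the final class is decided by majority vote.

```r
set.seed(1)
n <- 200
X <- matrix(rnorm(n * 5), ncol = 5)                  # hypothetical predictors
Y <- as.integer(X[, 1] + X[, 2] + rnorm(n, sd = 0.5) > 0)

# fit a one-split tree (stump) using the best of mtry randomly chosen predictors
fit_stump <- function(X, Y, mtry = 2) {
  best <- list(err = Inf)
  for (j in sample(ncol(X), mtry)) {
    for (cut in quantile(X[, j], probs = seq(0.1, 0.9, by = 0.1))) {
      for (dir in c(1, -1)) {
        err <- mean(as.integer(dir * (X[, j] - cut) > 0) != Y)
        if (err < best$err) best <- list(j = j, cut = cut, dir = dir, err = err)
      }
    }
  }
  best
}

# class predictions (0/1) of a fitted stump
predict_stump <- function(s, X) as.integer(s$dir * (X[, s$j] - s$cut) > 0)

# build B stumps, each on its own bootstrap sample
B <- 101
stumps <- lapply(seq_len(B), function(b) {
  idx <- sample(n, replace = TRUE)
  fit_stump(X[idx, , drop = FALSE], Y[idx])
})

# n x B matrix of votes; the majority decides the final class
votes <- sapply(stumps, predict_stump, X = X)
Y_hat <- as.integer(rowMeans(votes) > 0.5)
acc   <- mean(Y_hat == Y)
```

An odd number of stumps avoids ties in the vote. In an actual random forest the individual trees are grown much deeper, which is why the training error rate can drop to 0 %.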

As with decision trees, we have several options regarding which data (finite-dimensional) we will use to construct the model. We will again consider the three approaches discussed above. The datasets with the corresponding variables for all three approaches have already been prepared from the previous section, so we can directly construct the models, calculate the characteristics of the classifiers, and add the results to the summary table.

5.1.3.6.1 Interval Discretization

In the first case, we utilize the evaluation of functions on a given grid of points over the interval \(I = [0, 6]\).

Code

# model construction

clf.RF <- randomForest(Y ~ ., data = grid.data,

ntree = 500, # number of trees

importance = TRUE,

nodesize = 5)

# accuracy on training data

predictions.train <- predict(clf.RF, newdata = grid.data)

presnost.train <- table(Y.train, predictions.train) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.RF, newdata = grid.data.test)

presnost.test <- table(Y.test, predictions.test) |>

prop.table() |> diag() |> sum()

The error rate of the random forest on the training data is thus 0 %, and on the test data 20 %.

5.1.3.6.2 Principal Component Scores

In this case, we will use the scores of the first \(p = 3\) principal components.

Code

# model construction

clf.RF.PCA <- randomForest(Y ~ ., data = data.PCA.train,

ntree = 500, # number of trees

importance = TRUE,

nodesize = 5)

# accuracy on training data

predictions.train <- predict(clf.RF.PCA, newdata = data.PCA.train)

presnost.train <- table(Y.train, predictions.train) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.RF.PCA, newdata = data.PCA.test)

presnost.test <- table(Y.test, predictions.test) |>

prop.table() |> diag() |> sum()

The error rate on the training data is thus 1.43 %, and on the test data 30 %.

5.1.3.6.3 Basis Coefficients

Finally, we will use the representation of functions through the B-spline basis.

Code

# model construction

clf.RF.Bbasis <- randomForest(Y ~ ., data = data.Bbasis.train,

ntree = 500, # number of trees

importance = TRUE,

nodesize = 5)

# accuracy on training data

predictions.train <- predict(clf.RF.Bbasis, newdata = data.Bbasis.train)

presnost.train <- table(Y.train, predictions.train) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test <- predict(clf.RF.Bbasis, newdata = data.Bbasis.test)

presnost.test <- table(Y.test, predictions.test) |>

prop.table() |> diag() |> sum()

The error rate of this classifier on the training data is 0 %, and on the test data 18.33 %.

5.1.3.7 Support Vector Machines

Now let’s look at classifying our simulated curves using the Support Vector Machines (SVM) method. The advantage of this classification method is its computational efficiency, as it defines the boundary curve between classes using only a few (often very few) observations.
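The reason only a few observations matter can be read from the standard form of the SVM decision rule. The notation is introduced here for illustration: the labels are recoded as \(y_i \in \{-1, 1\}\), \(K\) is the kernel function, and \(\alpha_i \geq 0\) and \(b\) are the fitted coefficients.

\[ \hat y(x) = \text{sign}\left( \sum_{i = 1}^{n} \alpha_i y_i K(x_i, x) + b \right). \]

The coefficients \(\alpha_i\) are nonzero only for the support vectors, i.e., the training observations lying on or inside the margin, so all other observations drop out of the sum.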

In the case of functional data, we have several options for applying the SVM method. The simplest variant is to use this classification method directly on the discretized function (section 5.1.3.7.2). Another option is to utilize the principal component scores and classify curves based on this representation (section 5.1.3.7.3). A further straightforward variant is to use the representation of curves through the B-spline basis and classify curves based on the coefficients of their representation in this basis (section 5.1.3.7.4).

A more complex consideration can lead us to several additional options that leverage the functional nature of the data. We can utilize projections of functions onto a subspace generated, for example, by B-spline functions (section 5.1.3.7.5). The final method we will use for classifying functional data involves combining projection onto a certain subspace generated by functions (Reproducing Kernel Hilbert Space, RKHS) and classifying the corresponding representation. This method utilizes not only the classical SVM but also SVM for regression, as discussed in section RKHS + SVM 5.1.3.7.6.

5.1.3.7.1 SVM for Functional Data

In the fda.usc library, we will use the function classif.svm() to apply the SVM method directly to functional data. First, we will create suitable objects for constructing the classifier.

Code

# set norm equal to one

norms <- c()

for (i in 1:dim(XXder$coefs)[2]) {

norms <- c(norms, as.numeric(1 / norm.fd(XXder[i])))

}

XXfd_norm <- XXder

XXfd_norm$coefs <- XXfd_norm$coefs * matrix(norms,

ncol = dim(XXder$coefs)[2],

nrow = dim(XXder$coefs)[1],

byrow = TRUE)

# split into test and training sets

X.train_norm <- subset(XXfd_norm, split == TRUE)

X.test_norm <- subset(XXfd_norm, split == FALSE)

Y.train_norm <- subset(Y, split == TRUE)

Y.test_norm <- subset(Y, split == FALSE)

grid.data <- eval.fd(fdobj = X.train_norm, evalarg = t.seq)

grid.data <- as.data.frame(t(grid.data))

grid.data$Y <- Y.train_norm |> factor()

grid.data.test <- eval.fd(fdobj = X.test_norm, evalarg = t.seq)

grid.data.test <- as.data.frame(t(grid.data.test))

grid.data.test$Y <- Y.test_norm |> factor()

Code

Finally, we can construct the classifiers on the entire training data with the hyperparameter values (determined previously by CV). We will also determine the errors on the test and training data.

Code

# Create suitable objects

x.train <- fdata(X.train_norm)

y.train <- as.factor(Y.train_norm)

# Points at which the functions are evaluated

tt <- x.train[["argvals"]]

dataf <- as.data.frame(y.train)

colnames(dataf) <- "Y"

# B-spline basis

basis1 <- X.train_norm$basis

# Formula

f <- Y ~ x

# Basis for x

basis.x <- list("x" = basis1)

# Input data for the model

ldata <- list("df" = dataf, "x" = x.train)

Code

## Warning in Minverse(t(B) %*% B): System is computationally singular (rank 54)

##

## The matrix inverse is computed by svd (effective rank 52)

Code

## Warning in Minverse(t(B) %*% B): System is computationally singular (rank 54)

##

## The matrix inverse is computed by svd (effective rank 52)

Code

## Warning in Minverse(t(B) %*% B): System is computationally singular (rank 54)

##

## The matrix inverse is computed by svd (effective rank 52)

Code

# Accuracy on training data

newdat <- list("x" = x.train)

predictions.train.l <- predict(model.svm.f_l, newdat, type = 'class')

accuracy.train.l <- mean(factor(Y.train_norm) == predictions.train.l)

predictions.train.p <- predict(model.svm.f_p, newdat, type = 'class')

accuracy.train.p <- mean(factor(Y.train_norm) == predictions.train.p)

predictions.train.r <- predict(model.svm.f_r, newdat, type = 'class')

accuracy.train.r <- mean(factor(Y.train_norm) == predictions.train.r)

# Accuracy on test data

newdat <- list("x" = fdata(X.test_norm))

predictions.test.l <- predict(model.svm.f_l, newdat, type = 'class')

accuracy.test.l <- mean(factor(Y.test_norm) == predictions.test.l)

predictions.test.p <- predict(model.svm.f_p, newdat, type = 'class')

accuracy.test.p <- mean(factor(Y.test_norm) == predictions.test.p)

predictions.test.r <- predict(model.svm.f_r, newdat, type = 'class')

accuracy.test.r <- mean(factor(Y.test_norm) == predictions.test.r)

The error rate of the SVM method on the training data is thus 15.7143 % for the linear kernel, 12.1429 % for the polynomial kernel, and 15.7143 % for the radial (Gaussian) kernel. On the test data, the error rate of the method is 16.6667 % for the linear kernel, 25 % for the polynomial kernel, and 23.3333 % for the radial kernel.
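The normalization performed at the start of this subsection rescales every curve to unit \(L^2\) norm. As a hedged illustration on discretized curves, the following base-R sketch approximates the norm with the trapezoidal rule; the three toy curves and the names `t.grid`, `l2_norm` and `curves_norm` are hypothetical, and the code above works on the basis coefficients instead.

```r
# hypothetical grid and three toy curves stored in columns
t.grid <- seq(0, 6, length.out = 101)
curves <- cbind(sin(t.grid), 2 + t.grid, exp(-t.grid))

# trapezoidal quadrature weights on the equidistant grid
h <- t.grid[2] - t.grid[1]
w <- c(h / 2, rep(h, length(t.grid) - 2), h / 2)

# approximate L2 norm: square root of the integral of x(t)^2
l2_norm <- function(x) sqrt(sum(w * x^2))

# divide each curve (column) by its own norm
norms       <- apply(curves, 2, l2_norm)
curves_norm <- sweep(curves, 2, norms, "/")

# after rescaling, every curve has norm one
norms_after <- apply(curves_norm, 2, l2_norm)
```

Because the rescaling is a multiplication by a constant for each curve, it changes the amplitude but not the shape of the curves.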

5.1.3.7.2 Interval Discretization

Let’s continue by applying the Support Vector Machines method directly to the discretized data (evaluation of the function on a grid of points over the interval \(I = [0, 6]\)), considering all three aforementioned kernel functions.
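For reference, the three kernels can be written out explicitly. The sketch below uses the parametrization documented for the svm() function in e1071 (linear \(u^\top v\), polynomial \((\gamma u^\top v + c_0)^d\), radial \(\exp(-\gamma \|u - v\|^2)\)). The helper names, the default parameter values and the toy data are assumptions for illustration only.

```r
# kernel functions for two discretized curves u and v
k_linear <- function(u, v) sum(u * v)
k_poly   <- function(u, v, gamma = 1, coef0 = 1, degree = 3) {
  (gamma * sum(u * v) + coef0)^degree
}
k_radial <- function(u, v, gamma = 0.0005) exp(-gamma * sum((u - v)^2))

# Gram matrix K[i, j] = k(x_i, x_j) for curves stored in the rows of X
gram <- function(X, k, ...) {
  n <- nrow(X)
  K <- matrix(0, n, n)
  for (i in seq_len(n)) {
    for (j in seq_len(n)) K[i, j] <- k(X[i, ], X[j, ], ...)
  }
  K
}

set.seed(3)
X <- matrix(rnorm(4 * 101), nrow = 4)   # 4 hypothetical discretized curves

K_l <- gram(X, k_linear)
K_p <- gram(X, k_poly)
K_r <- gram(X, k_radial)
```

The classifier sees the data only through such Gram matrices, which is why changing the kernel changes the shape of the decision boundary without changing the fitting procedure.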

We will now classify the normalized data using the classic SVM method, selecting parameters as follows. For one generated dataset, we will determine parameters using cross-validation (CV), and these parameters will then be applied to other simulated data.

Code

# model construction

clf.SVM.l <- svm(Y ~ ., data = grid.data,

type = 'C-classification',

scale = TRUE,

cost = 25,

kernel = 'linear')

clf.SVM.p <- svm(Y ~ ., data = grid.data,

type = 'C-classification',

scale = TRUE,

coef0 = 1,

cost = 0.7,

kernel = 'polynomial')

clf.SVM.r <- svm(Y ~ ., data = grid.data,

type = 'C-classification',

scale = TRUE,

cost = 55,

gamma = 0.0005,

kernel = 'radial')

# accuracy on training data

predictions.train.l <- predict(clf.SVM.l, newdata = grid.data)

presnost.train.l <- table(Y.train, predictions.train.l) |>

prop.table() |> diag() |> sum()

predictions.train.p <- predict(clf.SVM.p, newdata = grid.data)

presnost.train.p <- table(Y.train, predictions.train.p) |>

prop.table() |> diag() |> sum()

predictions.train.r <- predict(clf.SVM.r, newdata = grid.data)

presnost.train.r <- table(Y.train, predictions.train.r) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test.l <- predict(clf.SVM.l, newdata = grid.data.test)

presnost.test.l <- table(Y.test, predictions.test.l) |>

prop.table() |> diag() |> sum()

predictions.test.p <- predict(clf.SVM.p, newdata = grid.data.test)

presnost.test.p <- table(Y.test, predictions.test.p) |>

prop.table() |> diag() |> sum()

predictions.test.r <- predict(clf.SVM.r, newdata = grid.data.test)

presnost.test.r <- table(Y.test, predictions.test.r) |>

prop.table() |> diag() |> sum()

The error rate of the SVM method on the training data is thus 7.86 % for the linear kernel, 12.86 % for the polynomial kernel, and 14.29 % for the radial (Gaussian) kernel. On the test data, the error rates are 10 % for the linear kernel, 23.33 % for the polynomial kernel, and 23.33 % for the radial kernel.

5.1.3.7.3 Principal Component Scores

In this case, we will use the scores of the first \(p = 3\) principal components.

Code

# model construction

clf.SVM.l.PCA <- svm(Y ~ ., data = data.PCA.train,

type = 'C-classification',

scale = TRUE,

cost = 0.1,

kernel = 'linear')

clf.SVM.p.PCA <- svm(Y ~ ., data = data.PCA.train,

type = 'C-classification',

scale = TRUE,

coef0 = 1,

cost = 0.01,

kernel = 'polynomial')

clf.SVM.r.PCA <- svm(Y ~ ., data = data.PCA.train,

type = 'C-classification',

scale = TRUE,

cost = 1,

gamma = 0.01,

kernel = 'radial')

# accuracy on training data

predictions.train.l <- predict(clf.SVM.l.PCA, newdata = data.PCA.train)

accuracy.train.l <- table(data.PCA.train$Y, predictions.train.l) |>

prop.table() |> diag() |> sum()

predictions.train.p <- predict(clf.SVM.p.PCA, newdata = data.PCA.train)

accuracy.train.p <- table(data.PCA.train$Y, predictions.train.p) |>

prop.table() |> diag() |> sum()

predictions.train.r <- predict(clf.SVM.r.PCA, newdata = data.PCA.train)

accuracy.train.r <- table(data.PCA.train$Y, predictions.train.r) |>

prop.table() |> diag() |> sum()

# accuracy on test data

predictions.test.l <- predict(clf.SVM.l.PCA, newdata = data.PCA.test)

accuracy.test.l <- table(data.PCA.test$Y, predictions.test.l) |>

prop.table() |> diag() |> sum()

predictions.test.p <- predict(clf.SVM.p.PCA, newdata = data.PCA.test)

accuracy.test.p <- table(data.PCA.test$Y, predictions.test.p) |>

prop.table() |> diag() |> sum()

predictions.test.r <- predict(clf.SVM.r.PCA, newdata = data.PCA.test)

accuracy.test.r <- table(data.PCA.test$Y, predictions.test.r) |>

prop.table() |> diag() |> sum()

The error rate of the SVM method applied to the principal component scores on the training data is thus 31.43 % for the linear kernel, 31.43 % for the polynomial kernel, and 32.14 % for the radial (Gaussian) kernel. On the test data, the error rate is 23.33 % for all three kernels (linear, polynomial, and radial).

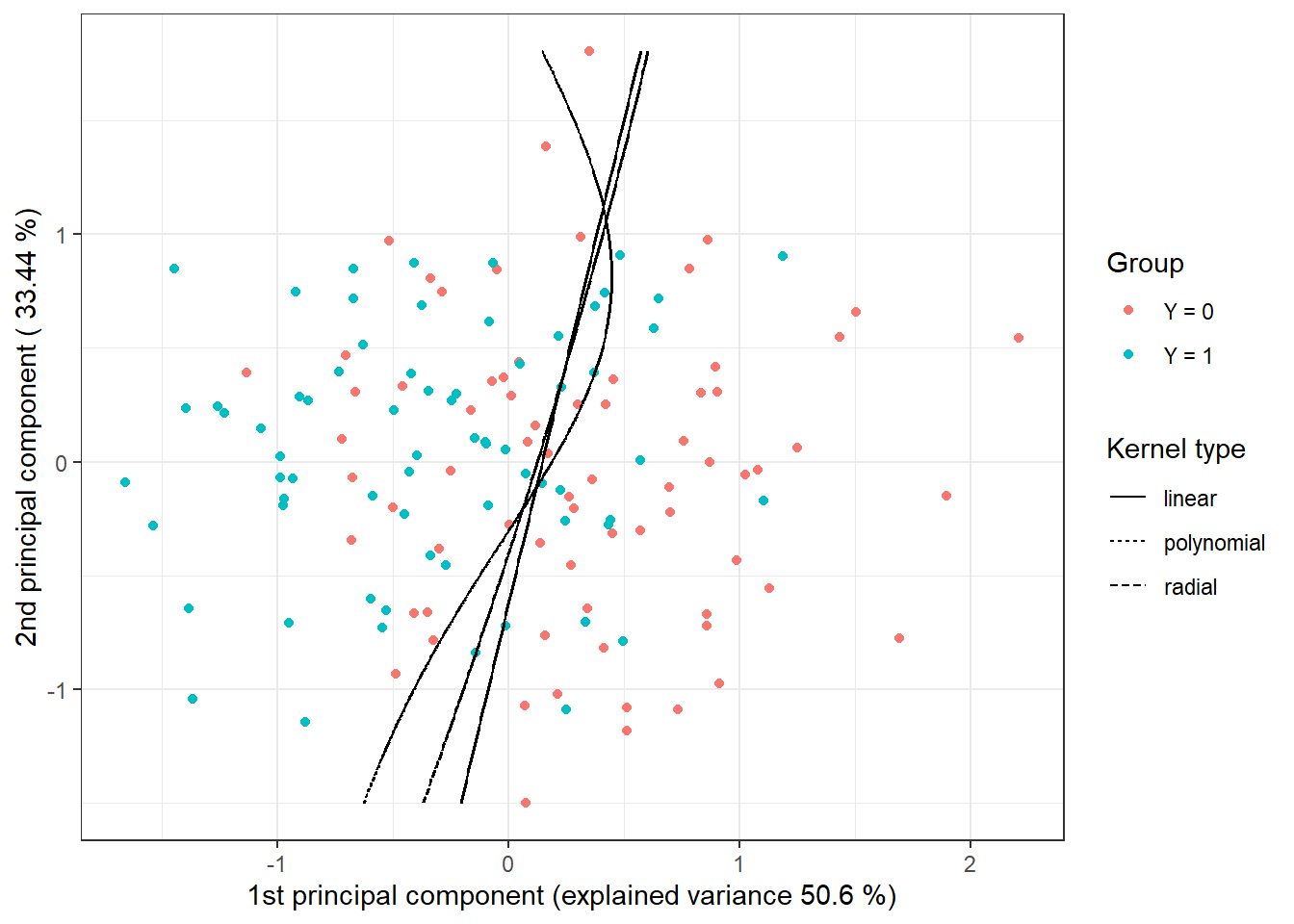

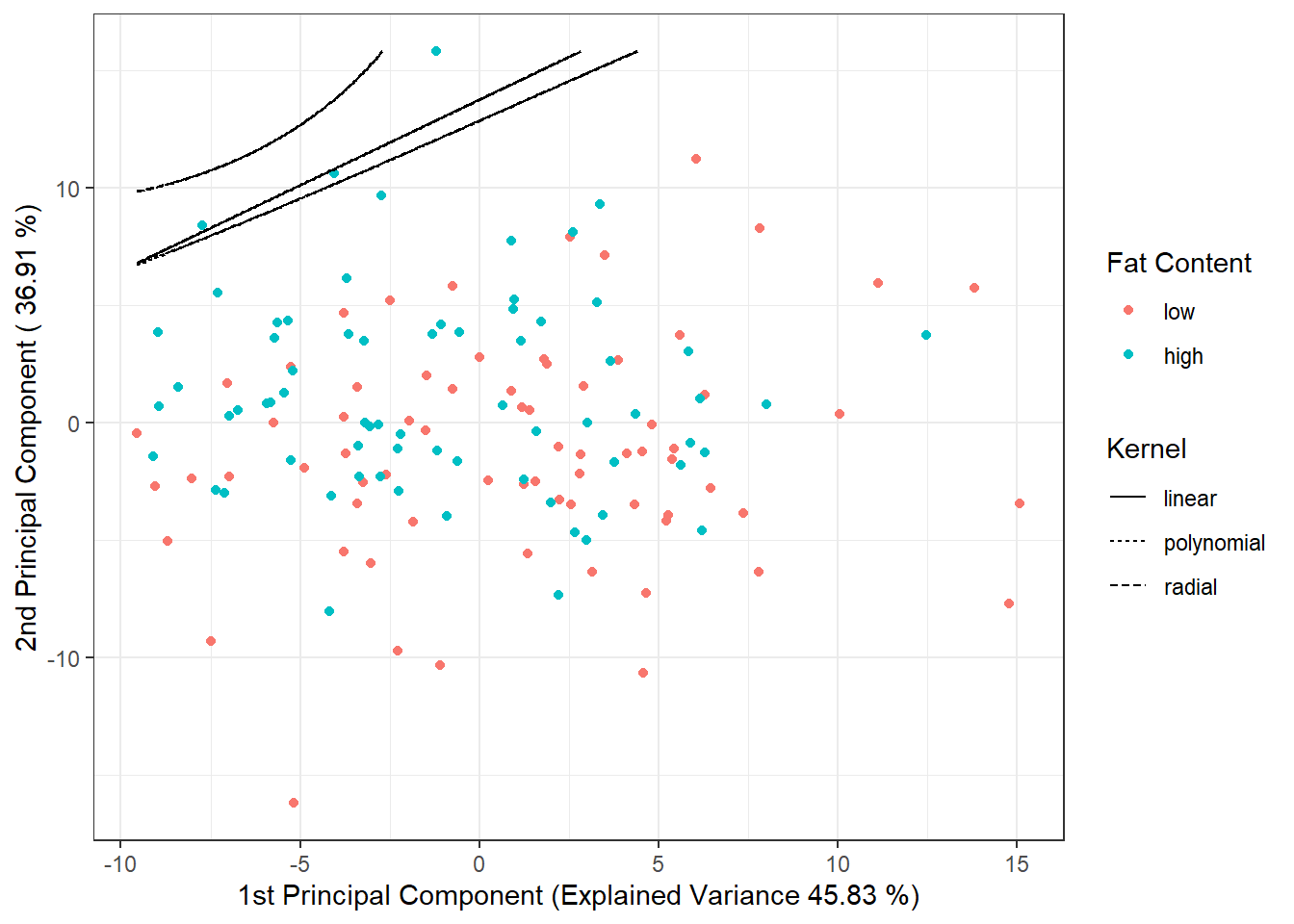

To graphically illustrate the method, we can mark the decision boundary on the graph of the scores of the first two principal components. We will calculate this boundary on a dense grid of points and display it using the geom_contour() function, just as we did in previous cases when plotting the classification boundary.

Code

nd <- rbind(nd, nd, nd) |> mutate(

prd = c(as.numeric(predict(clf.SVM.l.PCA, newdata = nd, type = 'response')),

as.numeric(predict(clf.SVM.p.PCA, newdata = nd, type = 'response')),

as.numeric(predict(clf.SVM.r.PCA, newdata = nd, type = 'response'))),

kernel = rep(c('linear', 'polynomial', 'radial'),

each = length(as.numeric(predict(clf.SVM.l.PCA,

newdata = nd,

type = 'response')))))

data.PCA.train |> ggplot(aes(x = V1, y = V2, colour = Y)) +

geom_point(size = 1.5) +

labs(x = paste('1st principal component (explained variance',

round(100 * data.PCA$varprop[1], 2), '%)'),

y = paste('2nd principal component (',

round(100 * data.PCA$varprop[2], 2), '%)'),

colour = 'Group',

linetype = 'Kernel type') +

scale_colour_discrete(labels = c('Y = 0', 'Y = 1')) +

theme_bw() +

geom_contour(data = nd, aes(x = V1, y = V2, z = prd, linetype = kernel),

colour = 'black')

Figure 5.10: Scores of the first two principal components, color-coded according to classification group membership. The decision boundary (line or curves in the plane of the first two principal components) between classes constructed using the SVM method is highlighted in black.
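The grid `nd` used in the plot is not constructed in this section. One plausible way to build such a grid is sketched below, assuming the `e1071` package and synthetic two-dimensional scores (the data frame `scores`, the grid resolution `np`, and the model `clf` are illustrative names, not objects from this chapter).

```r
library(e1071)

set.seed(42)
# Synthetic stand-in for the first two principal component scores
n <- 40
scores <- data.frame(
  V1 = c(rnorm(n, mean = -1), rnorm(n, mean = 1)),
  V2 = c(rnorm(n, mean = -1), rnorm(n, mean = 1)),
  Y  = factor(rep(c(0, 1), each = n))
)

clf <- svm(Y ~ ., data = scores, type = 'C-classification',
           scale = TRUE, kernel = 'linear', cost = 0.1)

# Dense grid covering the observed range of both scores
np <- 50
nd <- expand.grid(
  V1 = seq(min(scores$V1), max(scores$V1), length.out = np),
  V2 = seq(min(scores$V2), max(scores$V2), length.out = np)
)

# Predicted class coded as 1 or 2; the contour separating the two codes
# is the decision boundary
nd$prd <- as.numeric(predict(clf, newdata = nd))
```

A grid of this form can then be passed to geom_contour() with `z = prd`, as in the plotting code above.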

5.1.3.7.4 B-spline Coefficients

Next, we will use the representation of the functions through their B-spline basis coefficients.

Code

# Building the model

clf.SVM.l.Bbasis <- svm(Y ~ ., data = data.Bbasis.train,

type = 'C-classification',

scale = TRUE,

cost = 50,

kernel = 'linear')

clf.SVM.p.Bbasis <- svm(Y ~ ., data = data.Bbasis.train,

type = 'C-classification',

scale = TRUE,

coef0 = 1,

cost = 0.1,

kernel = 'polynomial')

clf.SVM.r.Bbasis <- svm(Y ~ ., data = data.Bbasis.train,

type = 'C-classification',

scale = TRUE,

cost = 100,

gamma = 0.001,

kernel = 'radial')

# Accuracy on training data

predictions.train.l <- predict(clf.SVM.l.Bbasis, newdata = data.Bbasis.train)

accuracy.train.l <- table(Y.train, predictions.train.l) |>

prop.table() |> diag() |> sum()

predictions.train.p <- predict(clf.SVM.p.Bbasis, newdata = data.Bbasis.train)

accuracy.train.p <- table(Y.train, predictions.train.p) |>

prop.table() |> diag() |> sum()

predictions.train.r <- predict(clf.SVM.r.Bbasis, newdata = data.Bbasis.train)

accuracy.train.r <- table(Y.train, predictions.train.r) |>

prop.table() |> diag() |> sum()

# Accuracy on test data

predictions.test.l <- predict(clf.SVM.l.Bbasis, newdata = data.Bbasis.test)

accuracy.test.l <- table(Y.test, predictions.test.l) |>

prop.table() |> diag() |> sum()

predictions.test.p <- predict(clf.SVM.p.Bbasis, newdata = data.Bbasis.test)

accuracy.test.p <- table(Y.test, predictions.test.p) |>

prop.table() |> diag() |> sum()

predictions.test.r <- predict(clf.SVM.r.Bbasis, newdata = data.Bbasis.test)

accuracy.test.r <- table(Y.test, predictions.test.r) |>

prop.table() |> diag() |> sum()

The error rate of the SVM method applied to the B-spline coefficients on the training data is therefore 7.14 % for the linear kernel, 20 % for the polynomial kernel, and 12.86 % for the radial kernel. On the test data, the error rate of the method is 6.67 % for the linear kernel, 21.67 % for the polynomial kernel, and 20 % for the radial kernel.

5.1.3.7.5 Projection onto B-spline Basis

Another option for using the classical SVM method for functional data is to project the original data onto some \(d\)-dimensional subspace of our Hilbert space \(\mathcal{H}\), denoted as \(V_d\). Assume that this subspace \(V_d\) has an orthonormal basis \(\{\Psi_j\}_{j = 1, \dots, d}\). We define the transformation \(P_{V_d}\) as the orthogonal projection onto the subspace \(V_d\), so we can write:

\[ P_{V_d} (x) = \sum_{j = 1}^d \langle x, \Psi_j \rangle \Psi_j. \]

Now we can use the coefficients from the orthogonal projection for classification; that is, we apply the standard SVM to the vectors \(\left( \langle x, \Psi_1 \rangle, \dots, \langle x, \Psi_d \rangle\right)^\top\). This transformation defines a new, so-called adapted kernel, composed of the orthogonal projection \(P_{V_d}\) and the kernel function of the standard support vector machine. The adapted kernel is thus \(Q(x_i, x_j) = K(P_{V_d}(x_i), P_{V_d}(x_j))\). This is a dimensionality reduction method, which we can call filtering.
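To see why it suffices to work with the coefficient vectors, consider the following short derivation, which relies on the orthonormality of the basis assumed above. The notation \(a_{ik} = \langle x_i, \Psi_k \rangle\) and \(\boldsymbol a_i = (a_{i1}, \dots, a_{id})^\top\) is introduced here only for illustration. For two projected functions we obtain

\[ \langle P_{V_d}(x_i), P_{V_d}(x_j) \rangle = \sum_{k = 1}^d \sum_{l = 1}^d a_{ik} a_{jl} \langle \Psi_k, \Psi_l \rangle = \sum_{k = 1}^d a_{ik} a_{jk} = \boldsymbol a_i^\top \boldsymbol a_j, \]

so for the linear kernel \(K(u, v) = \langle u, v \rangle\) the adapted kernel is \(Q(x_i, x_j) = \boldsymbol a_i^\top \boldsymbol a_j\). In the same way, \(\| P_{V_d}(x_i) - P_{V_d}(x_j) \|^2 = \| \boldsymbol a_i - \boldsymbol a_j \|^2\), so the radial kernel with parameter \(\gamma\) becomes

\[ Q(x_i, x_j) = \exp\left( -\gamma \| \boldsymbol a_i - \boldsymbol a_j \|^2 \right). \]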

For the projection itself, we will use the project.basis() function from the fda library in R. Its inputs are the matrix of the original discrete (non-smoothed) data, the vector of argument values at which the data were observed, and the basis object onto which we want to project the data. We choose projection onto a B-spline basis, since a Fourier basis is not suitable for our non-periodic data.
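The call can be illustrated on a small synthetic example. This is only a sketch under stated assumptions: the curves in `y` are simulated noisy sine curves rather than the chapter's data, and the dimension `d = 8` is chosen arbitrarily. It shows the expected orientation of the input (one row per observation point, one column per curve) and of the returned coefficient matrix.

```r
library(fda)

set.seed(1)
# Hypothetical discretized curves: 51 observation points on [0, 6], 5 curves
t.seq <- seq(0, 6, length.out = 51)
n.curves <- 5
y <- sapply(1:n.curves,
            function(i) sin(t.seq) + rnorm(length(t.seq), sd = 0.1))
# y has one row per observation point and one column per curve

# Cubic B-spline basis (order 4) with d basis functions
d <- 8
bbasis <- create.bspline.basis(rangeval = range(t.seq), nbasis = d, norder = 4)

# Least-squares projection; one column of coefficients per curve
coefs <- project.basis(y = y, argvals = t.seq, basisobj = bbasis)

# Transpose so that each row is one curve, ready to be used as SVM features
XX <- t(coefs)
dim(XX)  # 5 curves x 8 coefficients
```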

We choose the dimension \(d\) either from prior expert knowledge or by cross-validation. In our case, we will determine the optimal dimension of the subspace \(V_d\) using \(k\)-fold cross-validation (we choose \(k \ll n\) because of the computational intensity of the method, often \(k = 5\) or \(k = 10\)). We require B-splines of order 4, for which the number of basis functions satisfies:

\[ n_{basis} = n_{breaks} + n_{order} - 2, \]

where \(n_{breaks}\) is the number of knots and \(n_{order} = 4\). The minimum dimension (for \(n_{breaks} = 1\)) would therefore be \(n_{basis} = 3\), and the maximum (for \(n_{breaks} = 51\), corresponding to the number of original discrete data points) would be \(n_{basis} = 53\). However, in R the value of \(n_{basis}\) must be at least \(n_{order} = 4\), and large values of \(n_{basis}\) already lead to model overfitting; therefore, we restrict the search to \(n_{basis}\) between 4 and a smaller maximum, here 43.
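The arithmetic behind this range can be reproduced in a few lines of base R. The helper `n_basis()` and the variable `n_points` below are names introduced only for this sketch.

```r
norder <- 4
n_points <- 51  # number of original discrete observation points

# Number of basis functions for a given number of knots
n_basis <- function(n_breaks, n_order = norder) n_breaks + n_order - 2

n_basis(1)         # 3, which is below the required minimum of norder = 4
n_basis(n_points)  # 53

# Range of dimensions actually searched
n_basis_min <- norder                  # 4
n_basis_max <- n_basis(n_points) - 10  # 43
dimensions <- n_basis_min:n_basis_max
length(dimensions)                     # 40 candidate dimensions
```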

Code

k_cv <- 10 # k-fold CV

# Values for B-spline basis

rangeval <- range(t)

norder <- 4

n_basis_min <- norder

n_basis_max <- length(t) + norder - 2 - 10

dimensions <- n_basis_min:n_basis_max # all dimensions we want to try

# Split the training data into k parts

folds <- createMultiFolds(1:sum(split), k = k_cv, times = 1)

# List with three components ... matrices for individual kernels -> linear, poly, radial

# An empty matrix where we will insert individual results

# Columns will contain accuracy values for each part of the training set

# Rows will contain values for each dimension value

CV.results <- list(SVM.l = matrix(NA, nrow = length(dimensions), ncol = k_cv),

SVM.p = matrix(NA, nrow = length(dimensions), ncol = k_cv),

SVM.r = matrix(NA, nrow = length(dimensions), ncol = k_cv))

for (d in dimensions) {

# Basis object

bbasis <- create.bspline.basis(rangeval = rangeval,

nbasis = d)

# Projection of discrete data onto the B-spline basis of dimension d

Projection <- project.basis(y = grid.data |> select(!contains('Y')) |> as.matrix() |> t(), # matrix of discrete data

argvals = t.seq, # vector of arguments

basisobj = bbasis) # basis object

# Splitting into training and test data within CV

XX.train <- t(Projection) # subset(t(Projection), split == TRUE)

for (index_cv in 1:k_cv) {

# Definition of test and training parts for CV

fold <- folds[[index_cv]]

cv_sample <- 1:dim(XX.train)[1] %in% fold

data.projection.train.cv <- as.data.frame(XX.train[cv_sample, ])

data.projection.train.cv$Y <- factor(Y.train[cv_sample])

data.projection.test.cv <- as.data.frame(XX.train[!cv_sample, ])

Y.test.cv <- Y.train[!cv_sample]

data.projection.test.cv$Y <- factor(Y.test.cv)

# Building the models

clf.SVM.l.projection <- svm(Y ~ ., data = data.projection.train.cv,

type = 'C-classification',

scale = TRUE,

kernel = 'linear')

clf.SVM.p.projection <- svm(Y ~ ., data = data.projection.train.cv,

type = 'C-classification',

scale = TRUE,

coef0 = 1,

kernel = 'polynomial')

clf.SVM.r.projection <- svm(Y ~ ., data = data.projection.train.cv,

type = 'C-classification',

scale = TRUE,

kernel = 'radial')

# Accuracy on validation data

## linear kernel

predictions.test.l <- predict(clf.SVM.l.projection,

newdata = data.projection.test.cv)

accuracy.test.l <- table(Y.test.cv, predictions.test.l) |>

prop.table() |> diag() |> sum()

## polynomial kernel

predictions.test.p <- predict(clf.SVM.p.projection,

newdata = data.projection.test.cv)

accuracy.test.p <- table(Y.test.cv, predictions.test.p) |>

prop.table() |> diag() |> sum()

## radial kernel

predictions.test.r <- predict(clf.SVM.r.projection,

newdata = data.projection.test.cv)

accuracy.test.r <- table(Y.test.cv, predictions.test.r) |>

prop.table() |> diag() |> sum()

# Insert accuracies into positions for given d and fold

CV.results$SVM.l[d - min(dimensions) + 1, index_cv] <- accuracy.test.l

CV.results$SVM.p[d - min(dimensions) + 1, index_cv] <- accuracy.test.p

CV.results$SVM.r[d - min(dimensions) + 1, index_cv] <- accuracy.test.r

}

}

# Compute average accuracies for individual d across folds

for (n_method in 1:length(CV.results)) {

CV.results[[n_method]] <- apply(CV.results[[n_method]], 1, mean)

}

d.opt <- c(which.max(CV.results$SVM.l) + n_basis_min - 1,

which.max(CV.results$SVM.p) + n_basis_min - 1,

which.max(CV.results$SVM.r) + n_basis_min - 1)

presnost.opt.cv <- c(max(CV.results$SVM.l),

max(CV.results$SVM.p),

max(CV.results$SVM.r))

data.frame(d_opt = d.opt, ERR = 1 - presnost.opt.cv,

row.names = c('linear', 'poly', 'radial'))## d_opt ERR

## linear 11 0.1890476

## poly 13 0.1869963

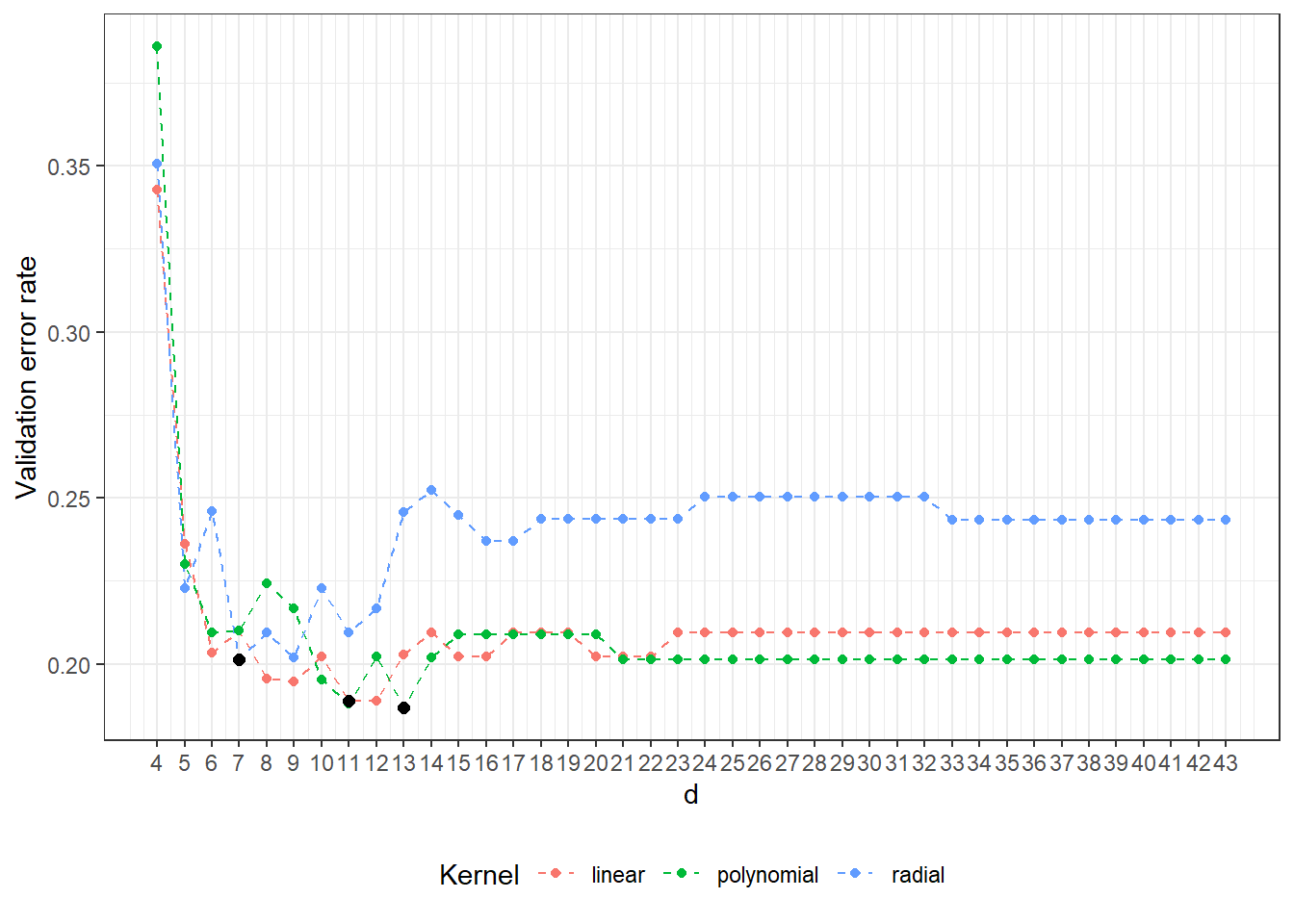

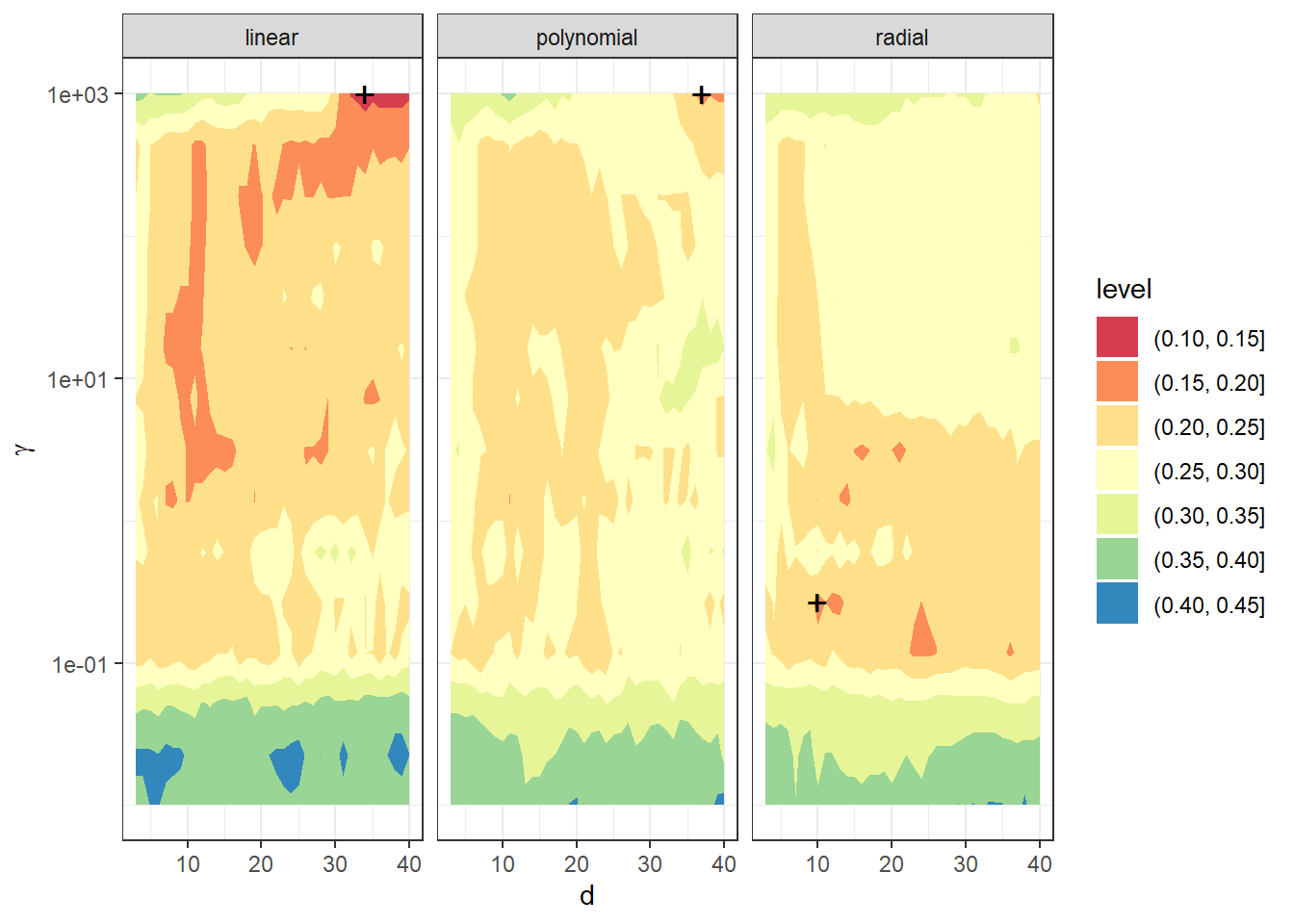

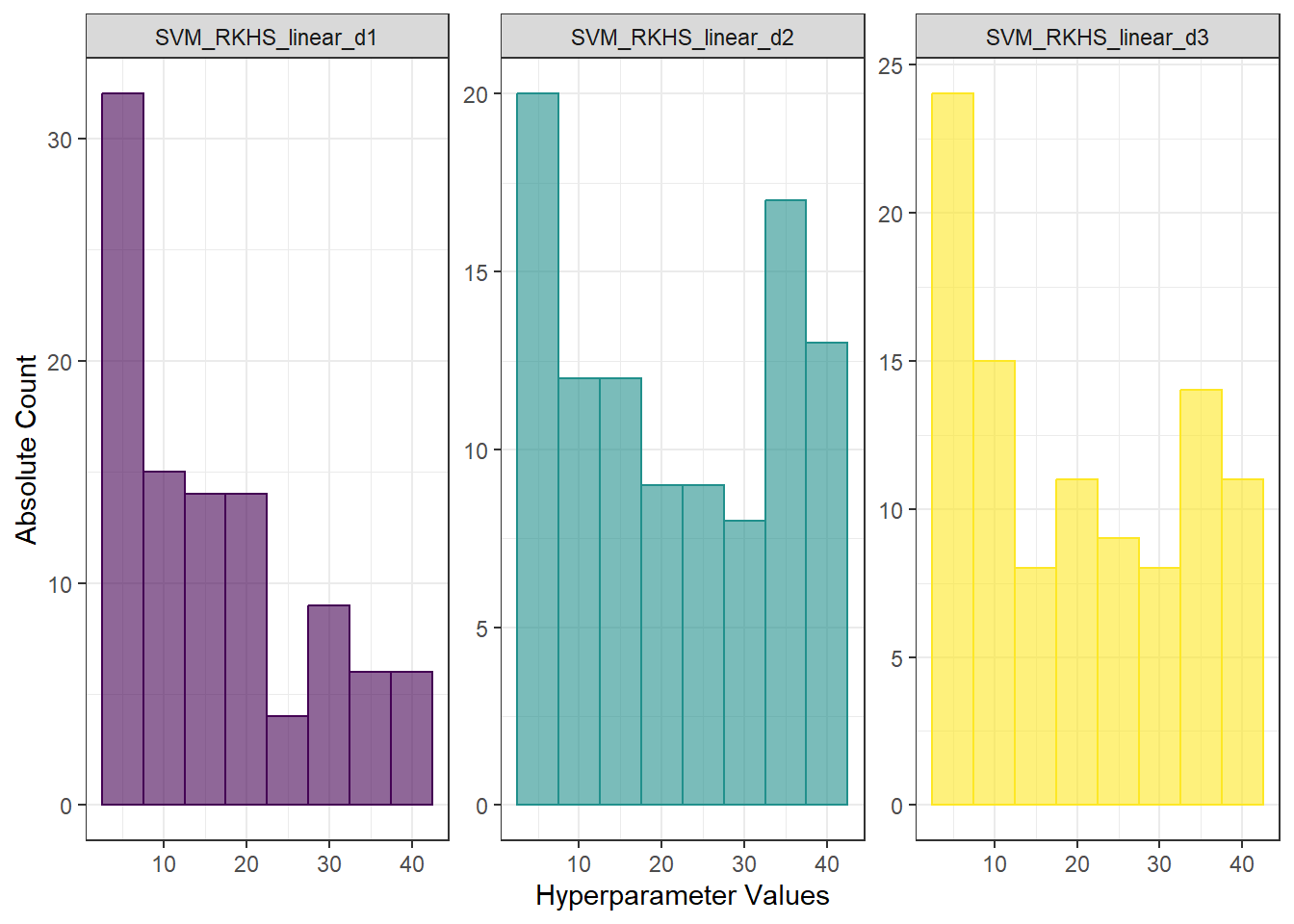

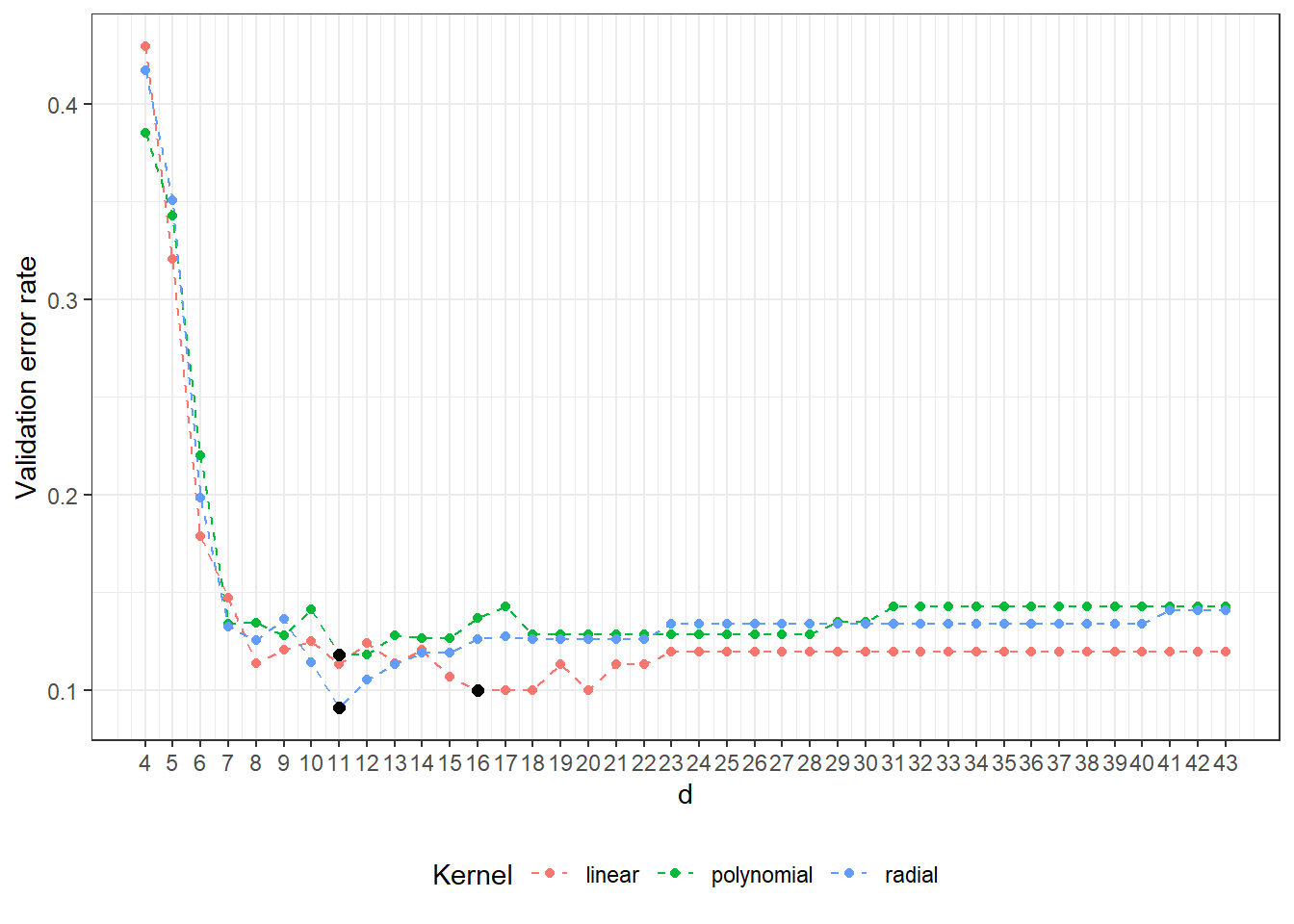

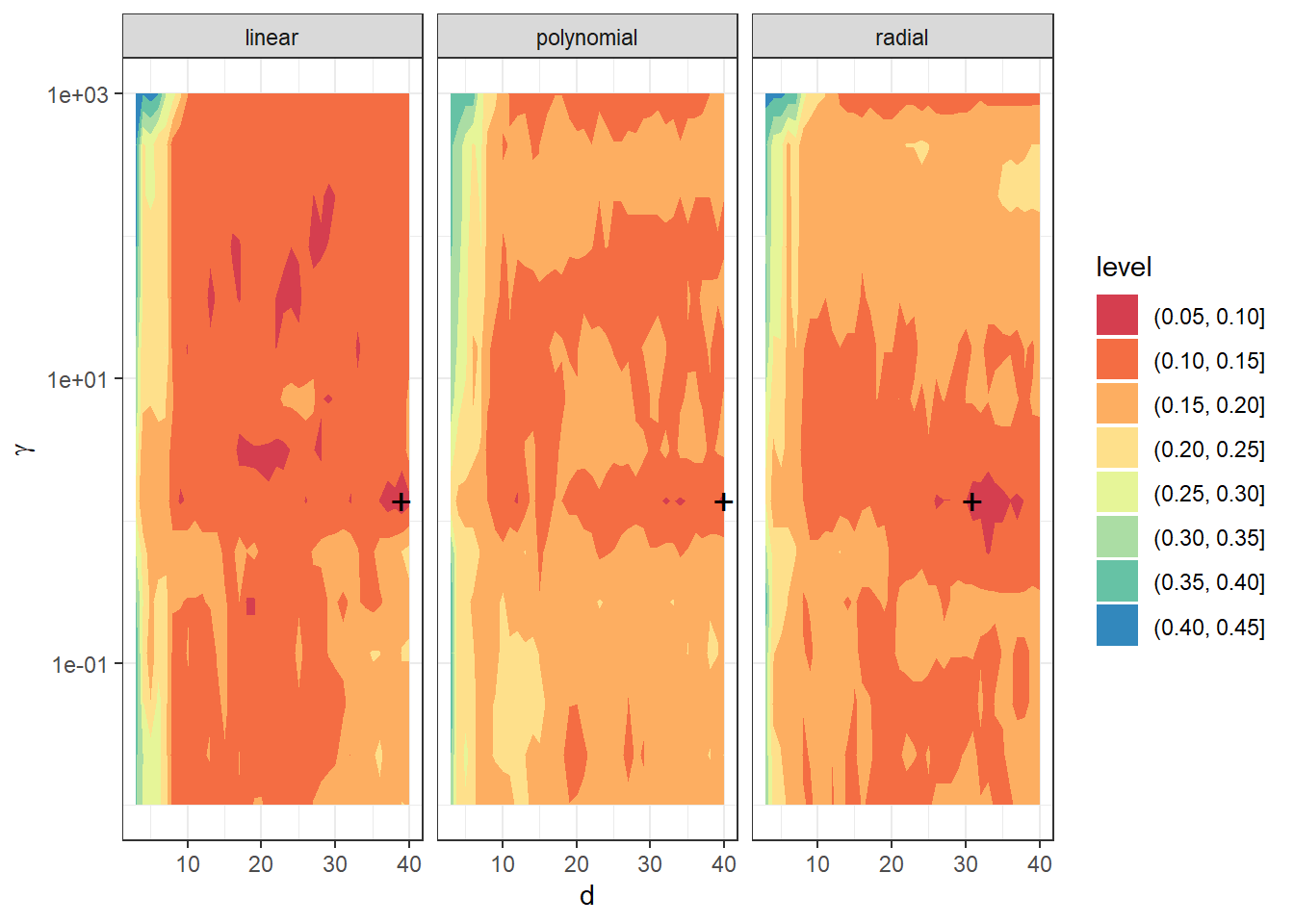

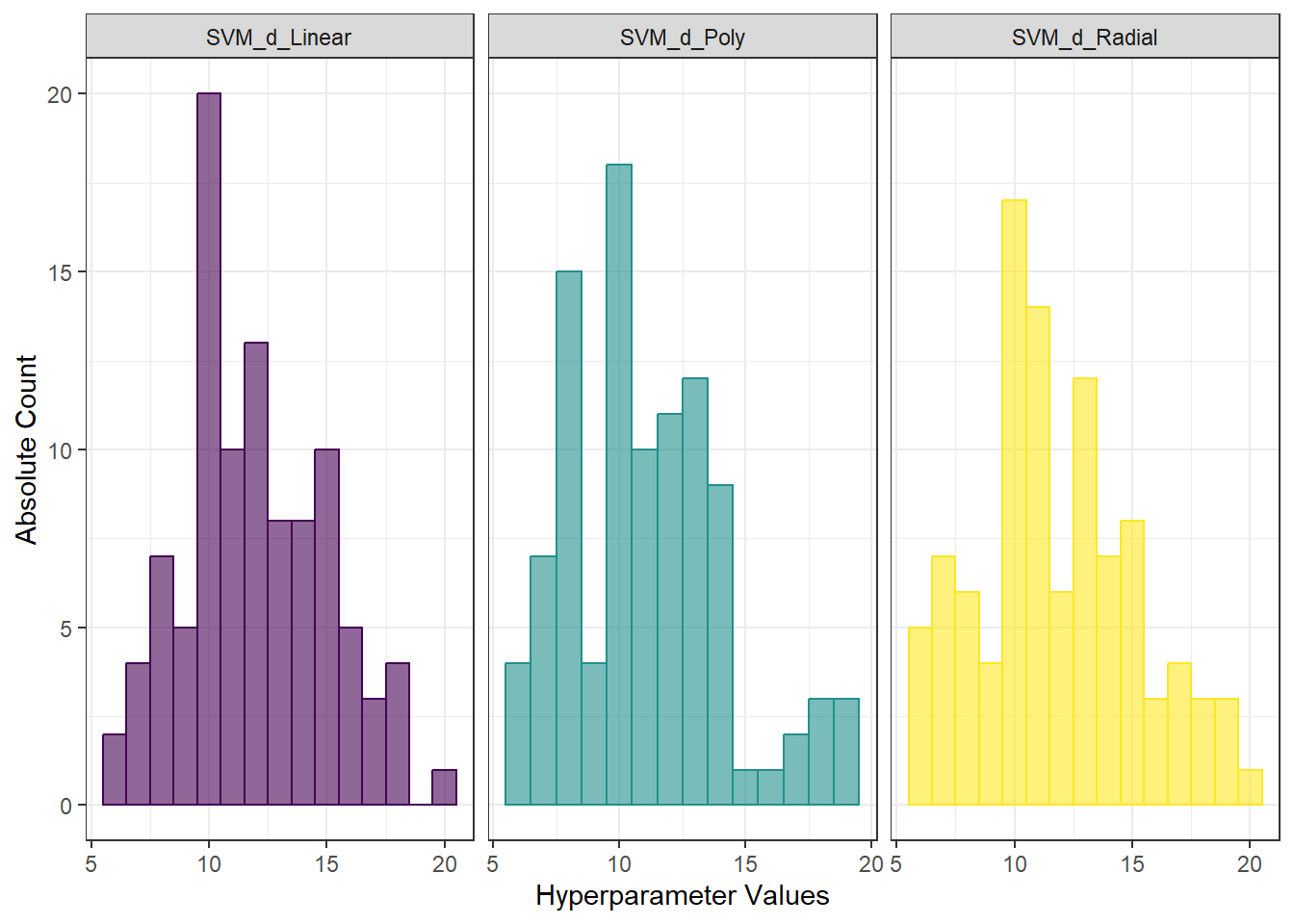

## radial 7 0.2012821We see that the best value for the parameter \(d\) is 11 for the linear kernel, with an error rate calculated using 10-fold CV of 0.189, 13 for the polynomial kernel with an error rate of 0.187, and 7 for the radial kernel with an error rate of 0.2013.
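The step from the matrix of fold-wise accuracies to the optimal dimension can be traced on a small example. The sketch below uses a made-up accuracy matrix `acc` with five candidate dimensions and three folds (all values are hypothetical); it shows how the row index returned by which.max() is shifted back to the corresponding value of \(d\).

```r
n_basis_min <- 4
dimensions <- n_basis_min:8  # a short illustrative range of d
k_cv <- 3

# Hypothetical validation accuracies: one row per d, one column per fold
acc <- matrix(c(0.70, 0.72, 0.71,
                0.80, 0.78, 0.82,
                0.85, 0.90, 0.86,
                0.83, 0.84, 0.82,
                0.75, 0.79, 0.77),
              nrow = length(dimensions), ncol = k_cv, byrow = TRUE)

# Average accuracy across folds for each dimension
mean.acc <- apply(acc, 1, mean)

# which.max() returns a row index; shift it to obtain the dimension itself
d.opt   <- which.max(mean.acc) + n_basis_min - 1  # row 3 -> d = 6
err.opt <- 1 - max(mean.acc)                      # 1 - 0.87 = 0.13
```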

For a clearer picture, let’s plot the validation error rates as a function of the dimension \(d\).

Code

CV.results <- data.frame(d = dimensions |> rep(3),

CV = c(CV.results$SVM.l,

CV.results$SVM.p,

CV.results$SVM.r),

Kernel = rep(c('linear', 'polynomial', 'radial'),

each = length(dimensions)) |> factor())

CV.results |> ggplot(aes(x = d, y = 1 - CV, colour = Kernel)) +

geom_line(linetype = 'dashed') +

geom_point(size = 1.5) +

geom_point(data = data.frame(d.opt,

presnost.opt.cv),

aes(x = d.opt, y = 1 - presnost.opt.cv), colour = 'black', size = 2) +

theme_bw() +

labs(x = bquote(paste(d)),

y = 'Validation error rate') +

theme(legend.position = "bottom") +

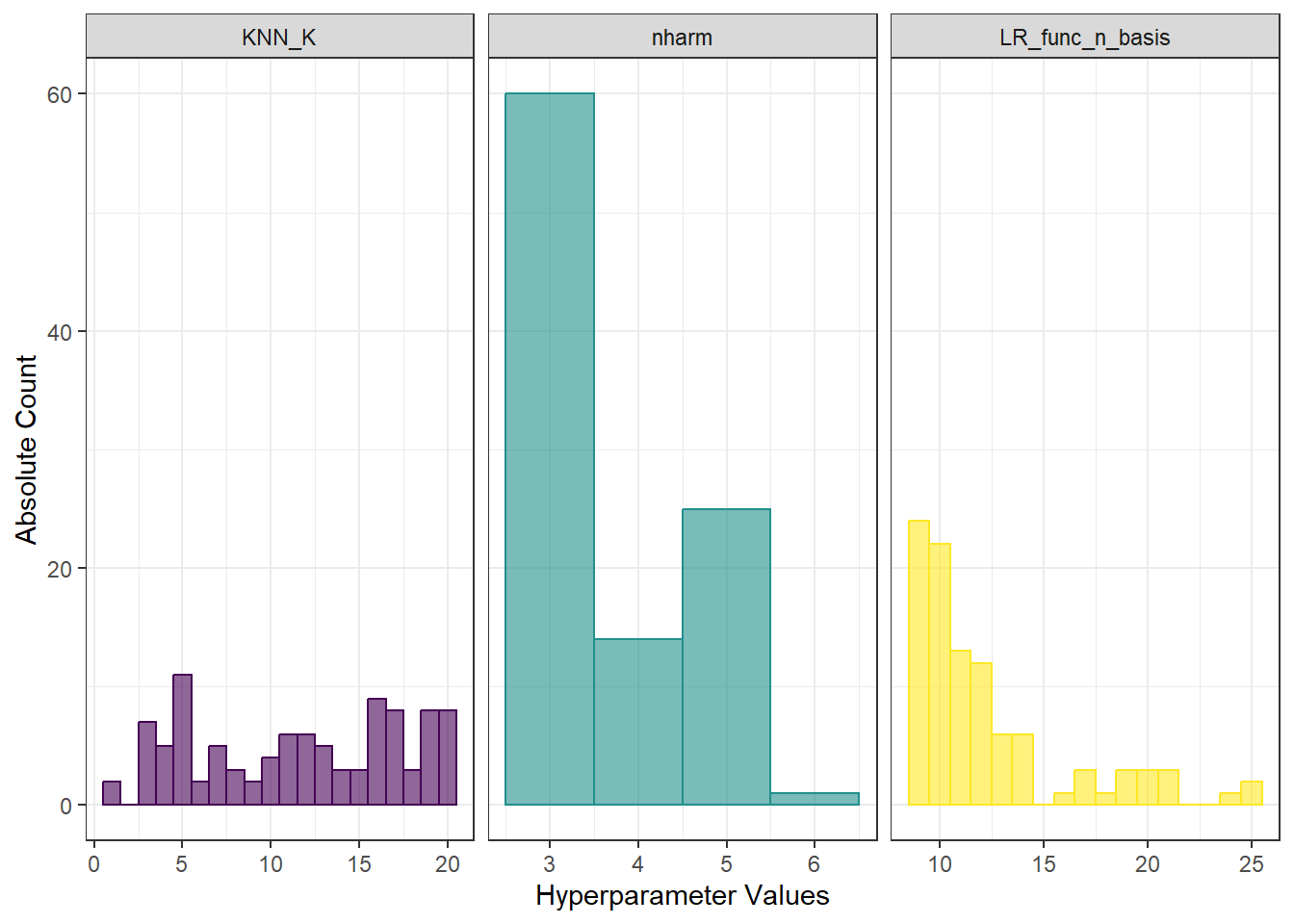

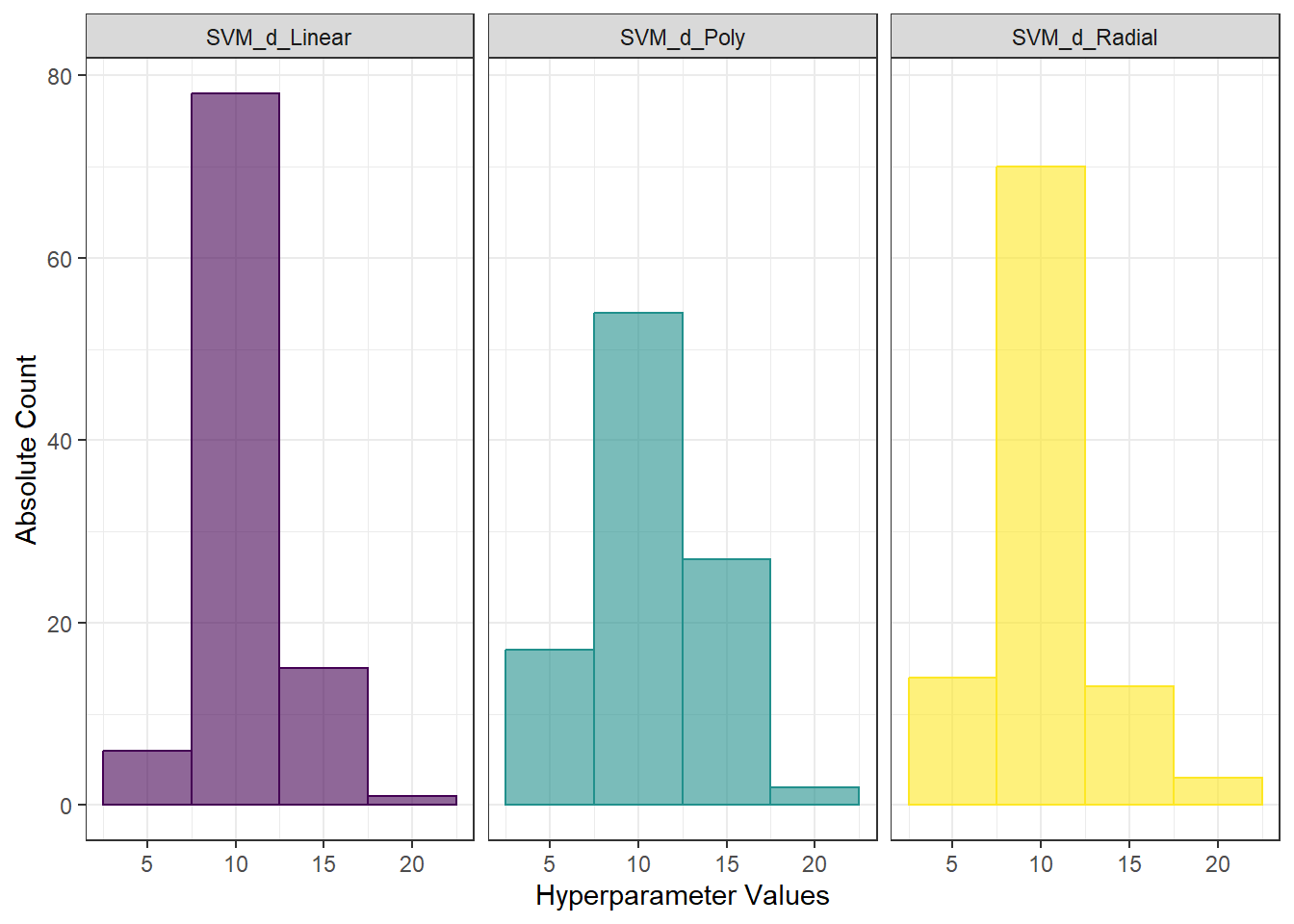

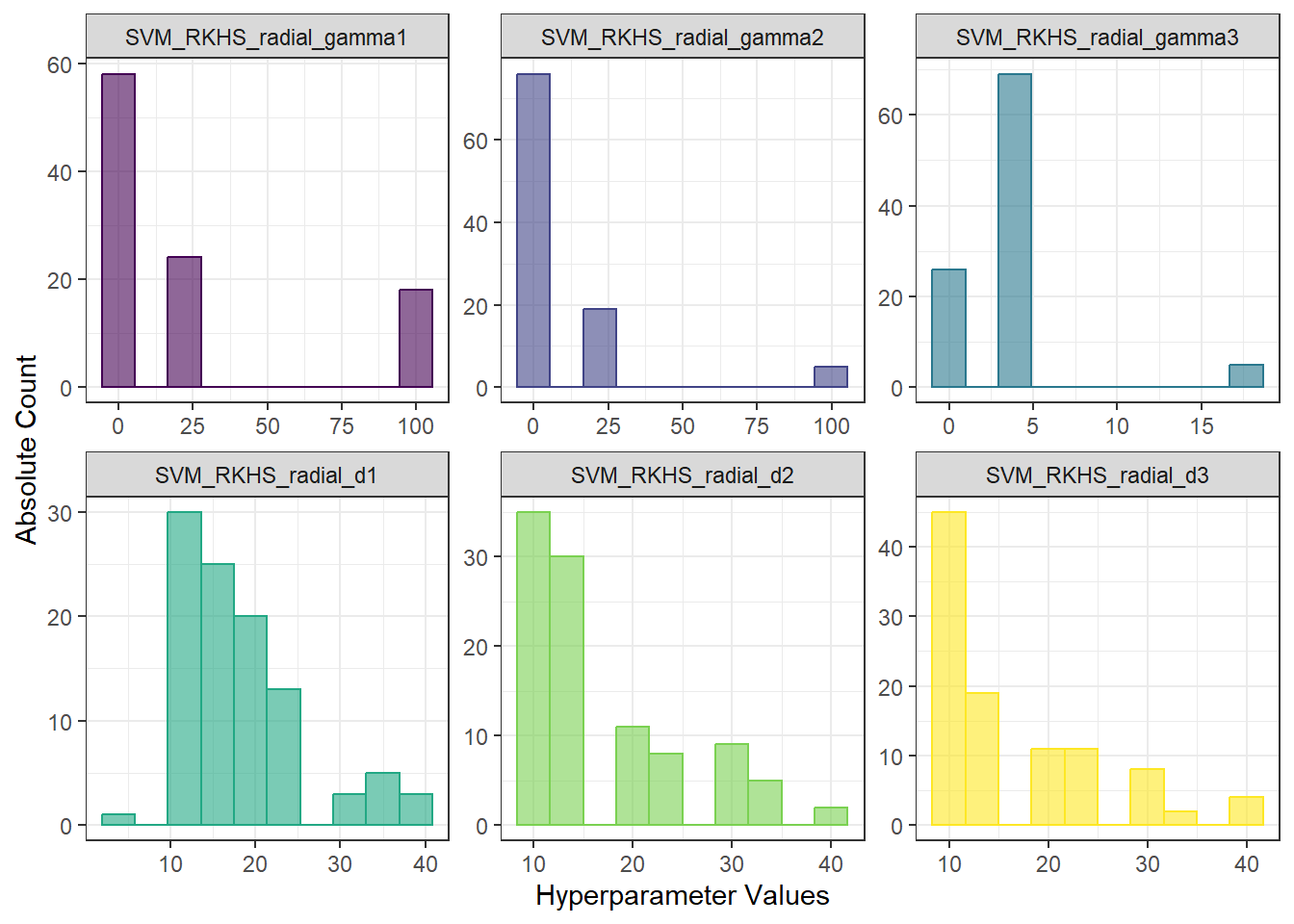

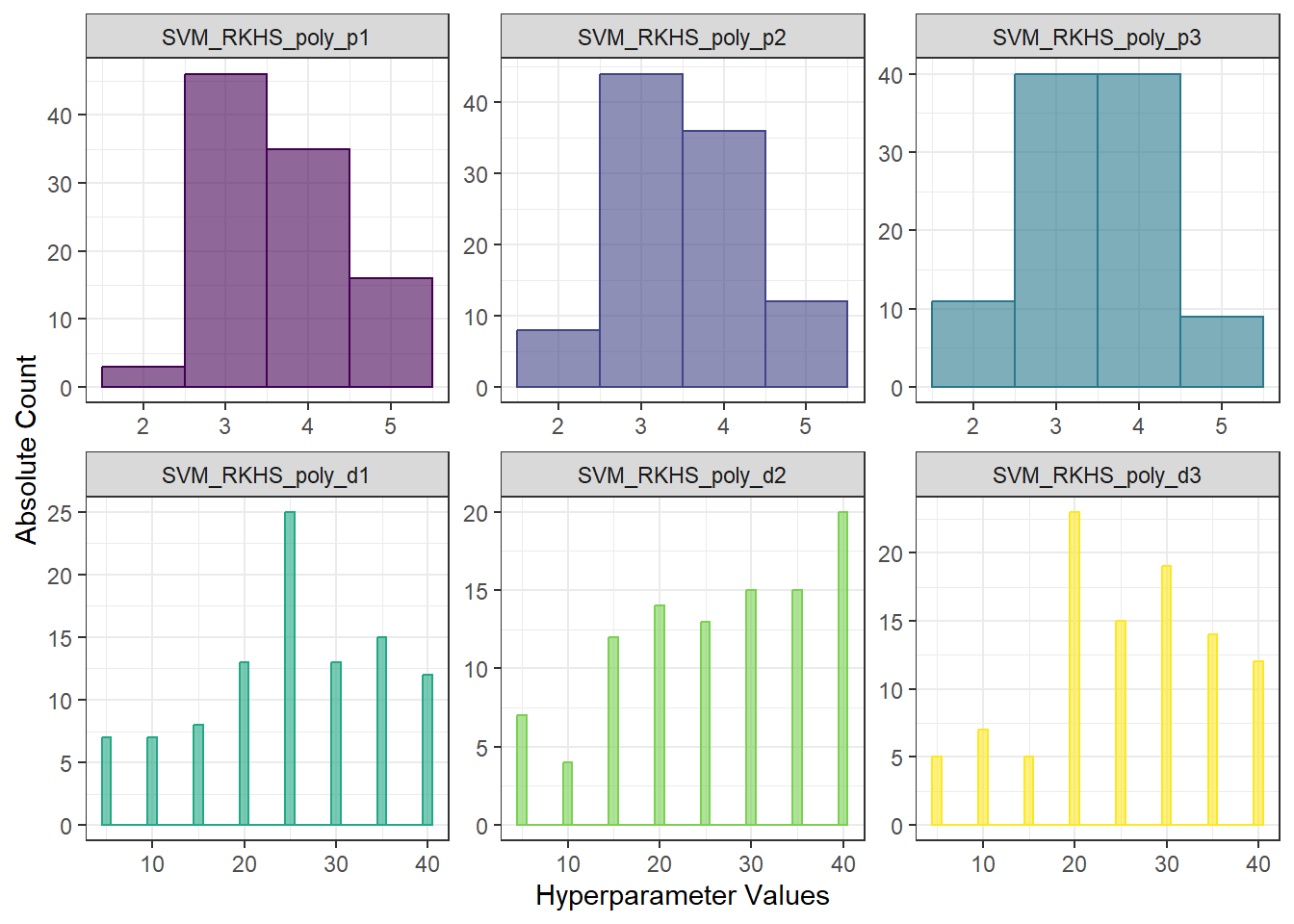

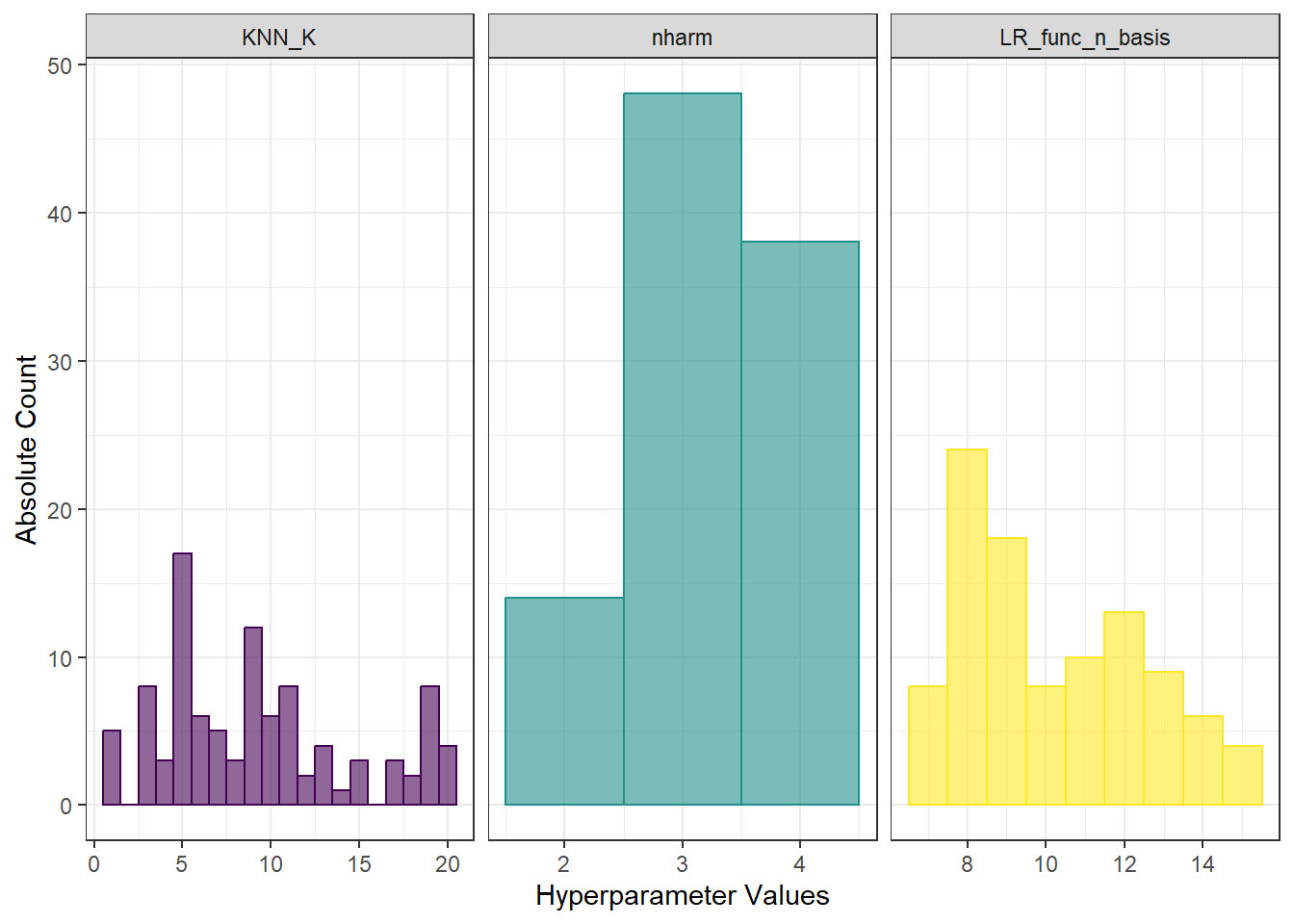

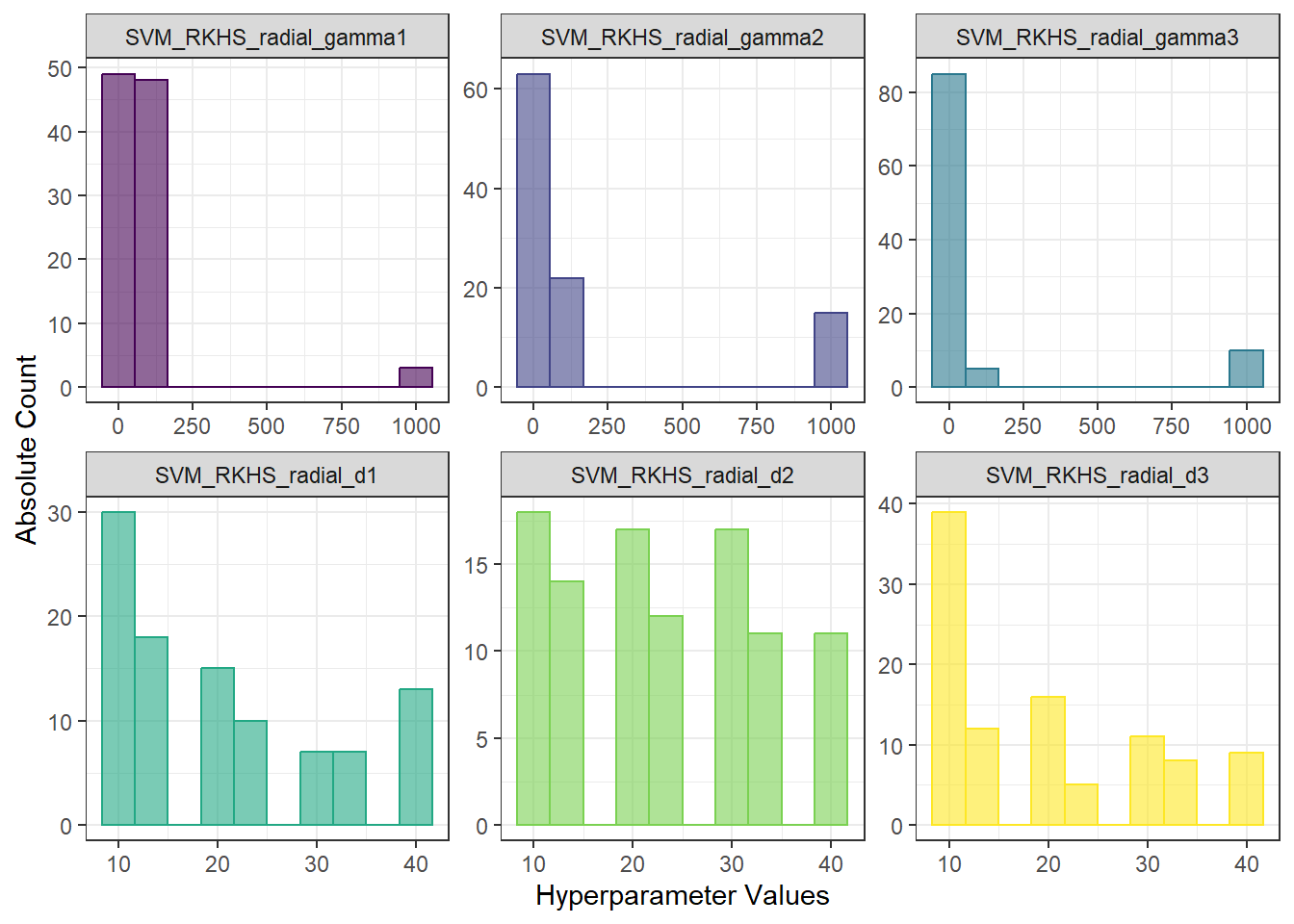

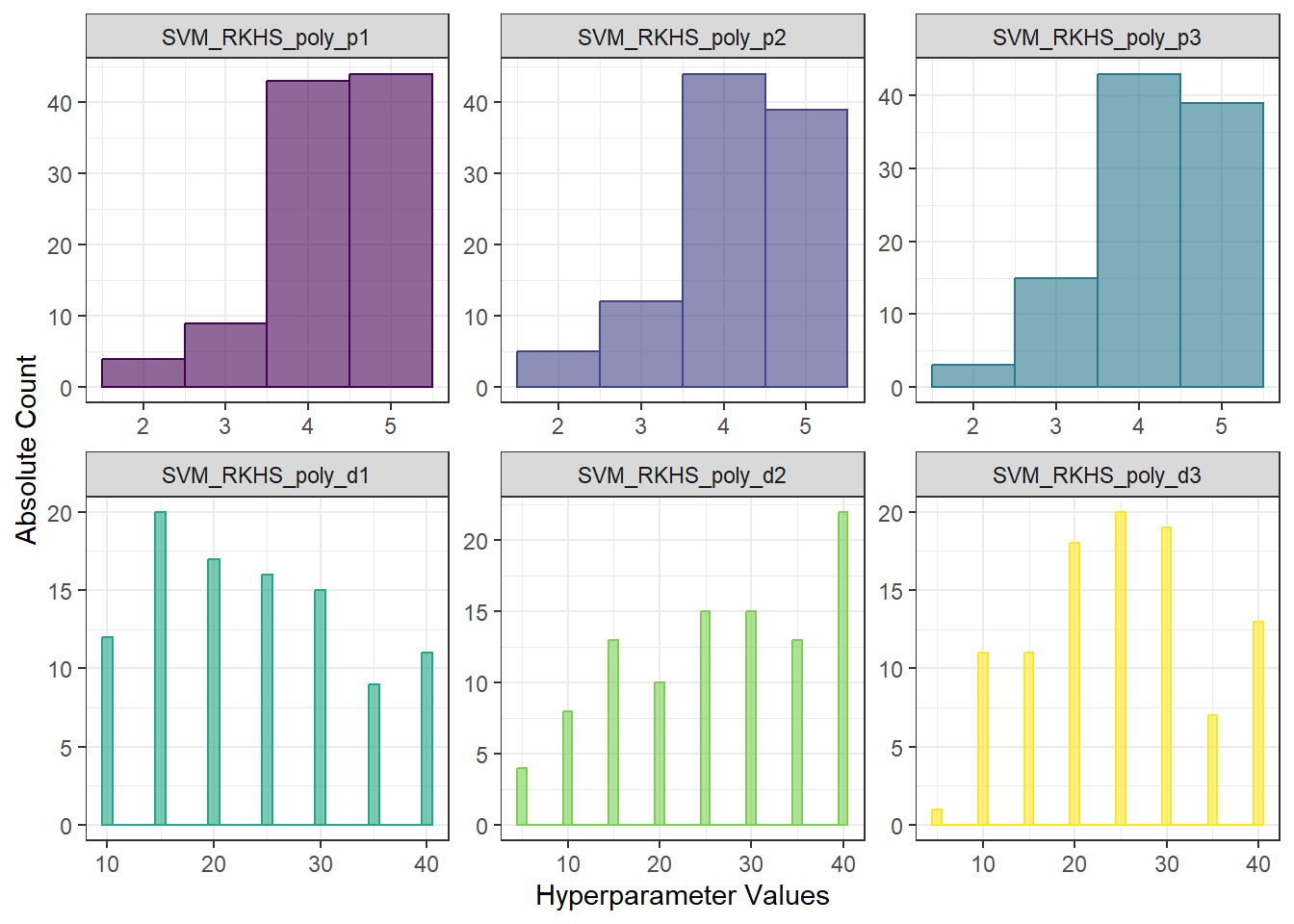

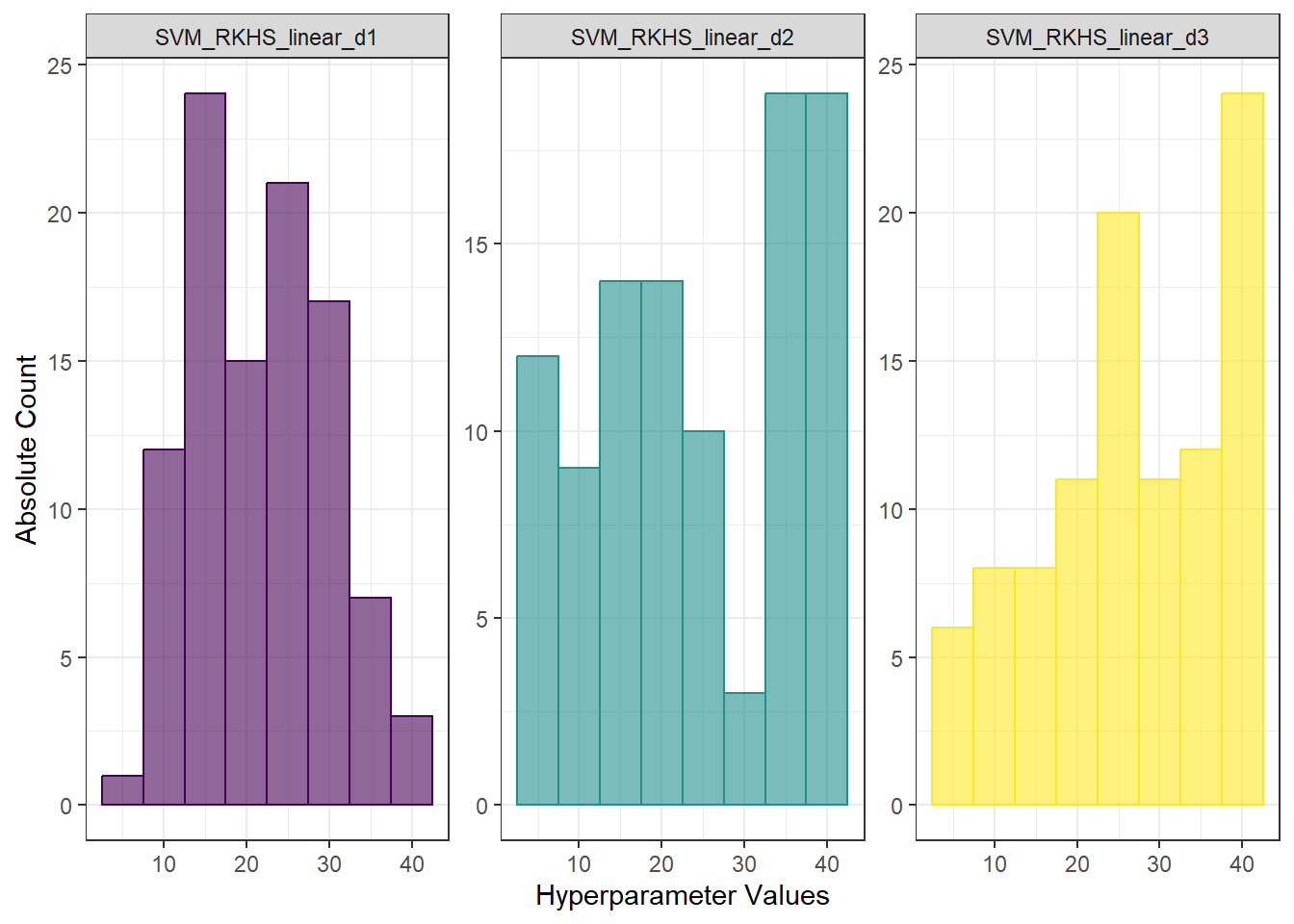

scale_x_continuous(breaks = dimensions)